Why Most AI Data Protection Strategies Fail at the Decision Layer

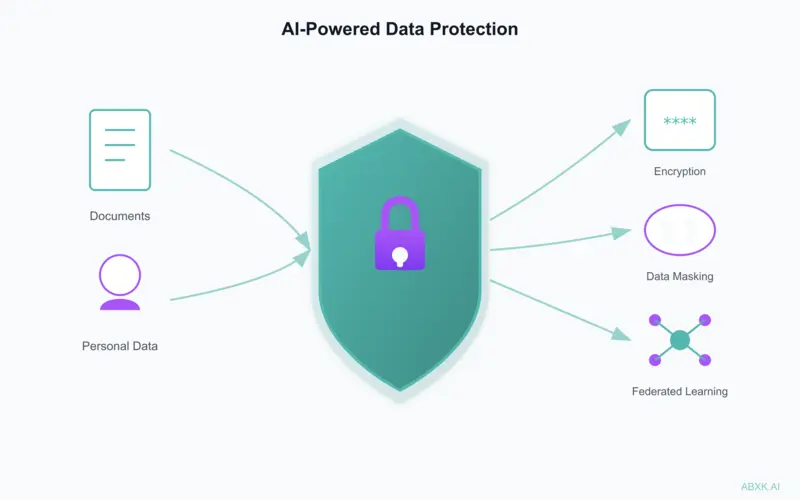

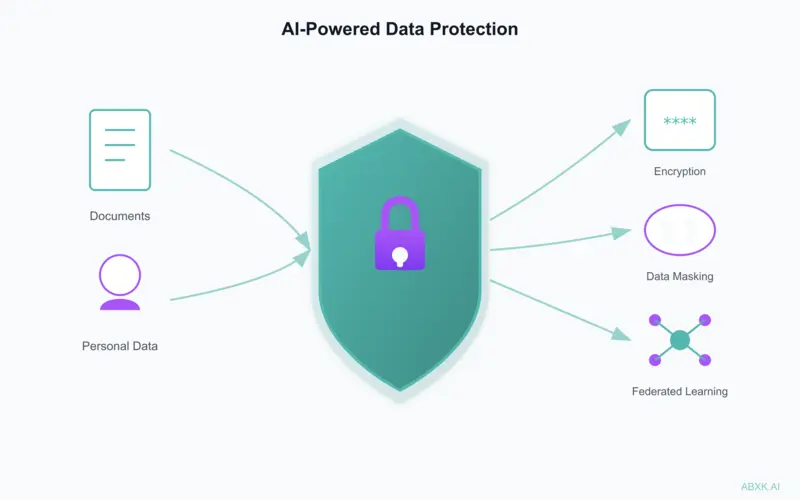

Most AI data protection fails not at the technical layer, but at the decision layer. This briefing examines how undefined authority, weak constraints, and …

ABXK.AI is a specialized AI governance and security firm focused on applied systems in production.

We examine decision structure, operational boundaries, and accountability in real-world deployments.

Technical capability is accelerating. Governance often is not.

ABXK.AI provides structured frameworks to help organizations:

Define how decisions flow through AI systems.

Clarify who approves, who escalates, who stops.

Establish where AI ends and human oversight begins.

Reduce operational and organizational risk exposure.

The goal is not to limit AI, but to ensure it remains controlled as it scales.

AI systems amplify decisions. When governance is unclear or boundaries are poorly defined:

Responsibility diffuses across teams and systems.

Systems expand beyond original intent.

Evaluation criteria erode under pressure.

Rollback and control become difficult.

In production environments, structure must be deliberate.

Disciplined implementation prevents long-term exposure.

Structured digital doctrines for applied AI systems.

Applied AI Governance Doctrine

A structured framework for governing AI systems in operational environments.

Weak governance scales risk faster than model errors do.

Designed for leaders accountable for AI initiatives in production.

Long-form digital doctrine · Executive-level framework · Immediate access

Access The AI Trap

Applied AI Security Architecture

A practical framework for securing AI systems at the architectural level.

AI systems fail at their boundaries before they fail at their models.

Designed for security teams and AI architects working with deployed systems.

Long-form digital doctrine · Practical architecture framework · Immediate access

Access AI Security

Applied research examining how AI systems behave under operational pressure.

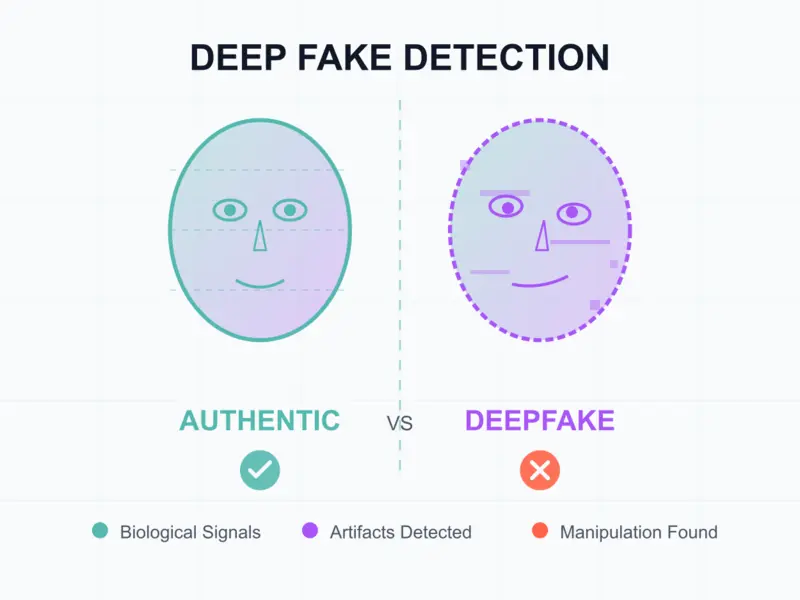

Detection systems and uncertainty patterns.

Where and why system limits fail.

Exposure patterns in scaled deployments.

Research findings inform and strengthen the masterclass frameworks.

Short analyses examining governance and security challenges in applied AI.

Each briefing addresses structural weaknesses observed in real deployments.

Deepfake detection systems report high confidence, but detection reliability degrades rapidly outside original training conditions. This briefing examines why …

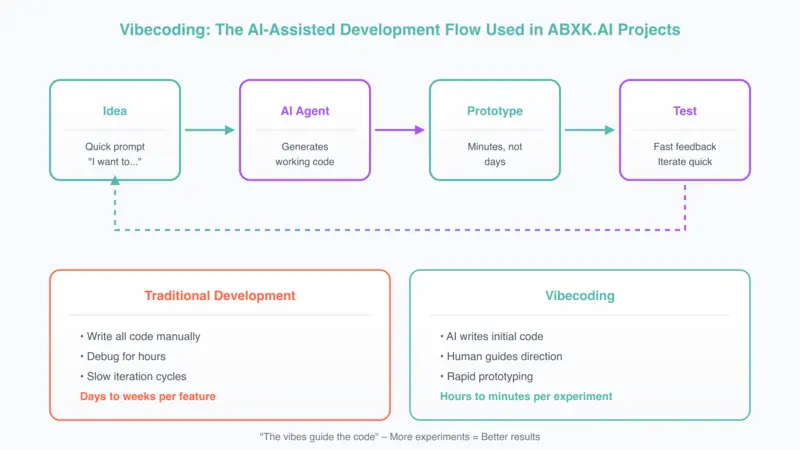

Fast iteration is not the same as good judgment. This briefing examines how experimental velocity reshapes decision quality and why acceleration without …

ABXK.AI is a specialized AI governance and security company focused on applied systems in production environments.

We study how AI systems operate within technical, organizational, and regulatory constraints.

We support disciplined AI implementation in operational settings.

AI creates leverage.

Structure ensures that leverage remains controlled.

If you are deploying AI systems in production, governance is not optional.