Vendor Defaults as Governance Failure

Organizations adopt AI services from external providers. The procurement process evaluates capability, cost, integration complexity, and vendor reputation. It rarely evaluates default configurations — the settings that define how the system handles data, what it retains, what it logs, how it processes inputs, and what governance assumptions it encodes.

These defaults are not neutral. They are governance decisions. They define data retention periods, logging granularity, model training permissions, input processing boundaries, and output handling policies. They are made by vendor engineering teams optimizing for broad market applicability, not for any specific organization’s risk posture, compliance obligations, or accountability structure.

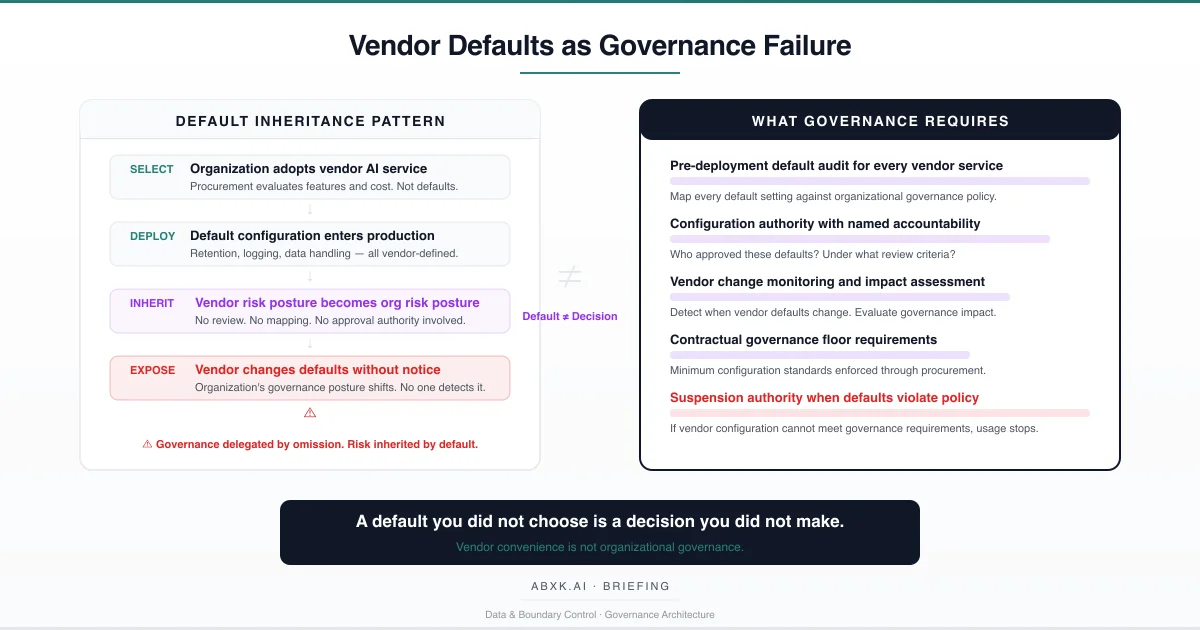

When an organization deploys a vendor AI service without auditing its default configuration, evaluating it, and deliberately overriding it where necessary, it does not adopt a tool. It inherits a governance posture, one designed by an entity with no accountability for the organization’s risk.

This is not a procurement oversight. It is a structural governance failure.

Vendor defaults do not fail at the technical layer. They fail at the decision layer — where configuration choices that determine data exposure, retention policy, and model behavior are made by entities outside the organization’s governance architecture.

Understanding that distinction is central to AI Risk Management, AI Security, AI Compliance, and Responsible AI implementation in production environments.

Technical Foundations: What Defaults Actually Control

Vendor AI services arrive with configurations that span the full operational surface of the system. Understanding what these defaults govern reveals why they constitute governance decisions rather than technical convenience.

Data retention defaults determine how long the vendor stores input data, output data, conversation logs, and interaction metadata. These retention periods may exceed the organization’s own data governance policies. They may conflict with data minimization requirements. They may create exposure windows that the organization has not assessed. The default operates silently. The risk accumulates continuously.

Model training defaults define whether the vendor uses customer data to improve its models. When enabled by default, organizational data — potentially including sensitive, proprietary, or regulated information — contributes to model training that benefits the vendor’s broader customer base. The organization’s data governance framework may prohibit this. The default permits it until someone explicitly disables it.

Logging and telemetry defaults determine what operational data the vendor collects about system usage. This may include query content, user behavior patterns, error frequencies, and integration metadata. The granularity of logging affects both the organization’s privacy posture and its exposure surface. Defaults optimized for vendor diagnostics may exceed what the organization would authorize under its own logging policies.

Input processing defaults govern how the system handles data before inference. Preprocessing may include tokenization, embedding, caching, or temporary storage in intermediate systems. Each processing step creates a data handling event that falls under the organization’s governance obligations. The vendor’s default processing pipeline may route data through infrastructure, jurisdictions, or processing stages that the organization has not evaluated.

Output handling defaults define what happens after the system generates a response. Outputs may be cached, logged, used for quality assurance, or retained for model evaluation. The organization treats the output as transient. The vendor’s default treats it as a persistent data asset.

These are not edge cases. These are the standard operational characteristics of externally provided AI services. Every default represents a configuration decision that affects data protection, compliance posture, and risk exposure. When the organization does not make these decisions explicitly, the vendor makes them implicitly.
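
One way to make the explicit-versus-implicit distinction concrete is to record, for each governance-relevant setting, whether anyone in the organization has actually decided on it. The sketch below is a minimal illustration only. The setting names, values, and the named approver are hypothetical and do not correspond to any particular vendor’s configuration surface.

```python
from dataclasses import dataclass
from typing import Any

# Hypothetical setting names, one per default category described above.
GOVERNANCE_SETTINGS = (
    "retention_days",
    "training_on_customer_data",
    "log_query_content",
    "processing_regions",
    "cache_outputs",
)


@dataclass(frozen=True)
class Decision:
    """An explicit organizational decision about one setting."""
    setting: str
    value: Any
    decided_by: str  # a named, accountable individual


def inherited_settings(decisions: list[Decision]) -> list[str]:
    """Return the settings that nobody in the organization decided on.

    Anything returned here is running on whatever the vendor chose.
    """
    decided = {d.setting for d in decisions}
    return [s for s in GOVERNANCE_SETTINGS if s not in decided]


# Illustrative data: only two of the five settings were decided explicitly.
decisions = [
    Decision("retention_days", 30, decided_by="J. Rivera"),
    Decision("training_on_customer_data", False, decided_by="J. Rivera"),
]
print(inherited_settings(decisions))
```

In this example the remaining three settings are reported as inherited, which is the list a governance review would need to work through before deployment.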

The configuration appears technical. The consequence is structural.

Structural Fragility: How Defaults Degrade Governance

The structural fragility of vendor-default governance follows a predictable pattern. It begins with reasonable assumptions at procurement and degrades through operational reality.

The first assumption is default adequacy. Organizations assume that vendor defaults represent reasonable, industry-standard configurations. This assumption ignores that vendors tune defaults to reduce adoption friction, minimizing the number of configuration steps required to deploy, rather than to achieve governance alignment. Defaults that maximize ease of deployment frequently minimize governance protections.

The second assumption is default stability. Organizations evaluate vendor configurations at procurement time and treat that evaluation as durable. Vendors update defaults through service releases, policy changes, and platform migrations. These updates may alter data handling, retention, processing, or training permissions without requiring customer acknowledgment. The configuration the organization evaluated at procurement may not be the configuration operating in production six months later.
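
A simple way to test the stability assumption is to fingerprint the configuration that was evaluated at procurement and compare it with what is observed later. The sketch below assumes the organization can obtain the effective configuration as a dictionary, which may or may not be possible for a given vendor. The setting names and values are hypothetical.

```python
import hashlib
import json


def config_fingerprint(config: dict) -> str:
    """Return a stable SHA-256 fingerprint of a configuration snapshot.

    Keys are sorted before hashing, so two snapshots with the same
    settings produce the same fingerprint regardless of key order.
    """
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical snapshots: one recorded at procurement, one read back later.
at_procurement = {"retention_days": 30, "training_on_customer_data": False}
in_production = {"training_on_customer_data": False, "retention_days": 90}

if config_fingerprint(at_procurement) != config_fingerprint(in_production):
    print("Production configuration differs from the one evaluated at procurement")
```

A fingerprint only says that something changed, not what changed. It is cheap to store alongside the procurement record and to recheck on a schedule.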

The third assumption is default transparency. Organizations assume that vendor documentation accurately and completely describes default behavior. In practice, default behavior is distributed across multiple service layers, infrastructure components, and subprocessor relationships. The documentation describes intended behavior. The actual behavior reflects implementation details that may diverge from documentation, particularly at the boundaries between vendor systems and third-party infrastructure.

The fourth assumption is default isolation. Organizations assume that defaults affecting one aspect of the service do not propagate into other operational domains. In practice, data retention defaults interact with training defaults. Logging defaults interact with privacy defaults. Processing defaults interact with jurisdiction defaults. A change in one default category may alter risk exposure across multiple governance domains simultaneously.

The setting remains. The exposure changes. The vendor decided.

Organizational Failure Patterns

Organizations do not intentionally delegate governance authority to vendors. They fail to recognize that accepting defaults constitutes delegation.

The most pervasive failure pattern is configuration neglect. The organization deploys the vendor service, configures it for functional integration — API connections, authentication, data formatting — and treats governance-relevant settings as outside the deployment scope. Data retention, training permissions, logging granularity, and processing configuration remain at vendor defaults. No one within the organization made a governance decision about these settings. The vendor’s decision stands by default.

The second failure pattern is procurement-deployment disconnect. The procurement team evaluates vendor capabilities and negotiates contract terms. The deployment team implements the service according to functional requirements. Neither team systematically maps vendor defaults against organizational governance policy. The procurement evaluation addresses contractual protections. The deployment process addresses technical integration. The gap between contract terms and actual configuration remains unexamined.

The third failure pattern is asymmetric monitoring. Organizations monitor vendor service availability, performance metrics, and functional output quality. They do not monitor vendor configuration changes, default updates, or policy modifications that affect governance posture. The vendor changes a default retention period, modifies its training data policy, or alters its logging configuration. The organization’s monitoring systems detect no anomaly because they were never designed to observe governance-relevant configuration state.

The fourth failure pattern is accountability diffusion. When a governance incident occurs — a data exposure, a compliance violation, a privacy breach — responsibility distributes between the vendor and the organization. The vendor asserts that the default configuration was documented and that the organization accepted it at deployment. The organization asserts that it relied on the vendor’s reasonable defaults. Neither party accepts structural accountability. The governance gap persists.

These are governance failures — not vendor failures. Vendors operate within their own commercial logic. Organizations operate under their own accountability obligations. When organizations accept vendor defaults without governance review, they create a structural gap that neither party is positioned to close.

AI Security Implications

Vendor defaults create security exposure through configuration states that no organizational security review has evaluated.

Default data retention creates attack surface duration. Data retained by the vendor beyond the organization’s operational need remains exposed to potential breach, unauthorized access, or legal discovery for the duration of the vendor’s retention period. The organization’s security team may have assessed risk based on its own retention policies. The vendor’s default retention extends that risk window without security review.

Default logging creates information exposure. When vendor logging captures query content, user identifiers, or interaction patterns at granularity levels exceeding organizational policy, the log data itself becomes a security-relevant asset. This data resides in the vendor’s infrastructure, subject to the vendor’s security controls, accessible to the vendor’s personnel, and vulnerable to the vendor’s breach exposure. The organization has limited visibility into — and no control over — the security of this data.

Default processing pipelines create jurisdictional exposure. Vendor AI services may route data through processing infrastructure in multiple jurisdictions. Default configurations may not restrict this routing. The organization’s data may traverse infrastructure that the organization’s security team has never assessed, in jurisdictions that the organization’s legal team has never evaluated, through subprocessors that the organization’s procurement team has never vetted.

Default model training permissions create intellectual property exposure. When vendor defaults permit using customer data for model training, organizational data — including proprietary processes, strategic information, and competitive intelligence — may be embedded into vendor models accessible to other customers. The security exposure is not data theft. It is data absorption — organizational information becoming part of a shared model with no mechanism for extraction, isolation, or deletion.

The attack surface is not the AI model. The attack surface is the configuration layer — and vendor defaults define that surface without organizational security input.

Compliance and Accountability Implications

Compliance frameworks require organizations to demonstrate that they maintain control over data processing activities. Vendor defaults directly challenge this requirement.

Accountability for data handling cannot be delegated through procurement. When an organization uses a vendor AI service, the organization retains accountability for how data is processed, stored, and used — regardless of whether the vendor or the organization configured the system. Accepting vendor defaults does not transfer compliance obligations. It creates a compliance gap where the organization is accountable for configurations it did not evaluate, did not approve, and may not understand.

Auditability requires configuration documentation. Compliance audits require the organization to demonstrate what data the system processes, how long it retains data, who has access, and what controls govern processing. When these parameters are set by vendor defaults, the organization’s audit response depends on vendor documentation that it may not have reviewed or verified, and that may not reflect current system behavior. The audit produces documentation of intended configuration, not evidence of actual configuration.

Proportionality assessment requires configuration awareness. Compliance frameworks require that data processing be proportionate to the stated purpose. When vendor defaults enable broad data retention, extensive logging, and permissive processing, the organization may be processing data in ways that exceed its stated purpose. Without deliberate configuration review, proportionality cannot be demonstrated, because the organization does not know what its own system is doing.

Vendor default changes create compliance drift. When a vendor modifies its default configurations, the organization’s compliance posture changes without any organizational action. A compliance assessment conducted before the change may not reflect the system’s current behavior. The organization’s compliance documentation becomes retrospective — describing a configuration state that no longer exists.

The same structural gap appears when data lineage fails. In both cases governance shifts from operational accountability to documented intention, because the organization depends on configurations it did not author and cannot independently verify.

Compliance is operational enforceability — not documentation.

Production-Environment Reality

Production environments amplify vendor default risk through patterns that individually appear manageable and collectively create governance exposure that scales with vendor dependency.

Transformation chains that include vendor AI services create configuration dependencies across multiple systems. When a vendor service sits in the middle of a data pipeline, its default configuration affects not just its own behavior but the governance posture of every upstream and downstream system. A default retention period in the vendor service may conflict with the deletion policies of systems that feed it or consume its output. The conflict exists. No configuration review identified it.
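
The retention conflict described above can be checked mechanically once each stage’s retention period is known. The following sketch assumes a simplified model in which every pipeline stage keeps its own copy of the data for a fixed number of days. Stage names and periods are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    retention_days: int  # how long this stage keeps a copy of the data


def effective_retention(pipeline: list[Stage]) -> int:
    """Data persists for as long as the longest-retaining stage holds a copy."""
    return max(stage.retention_days for stage in pipeline)


def retention_conflicts(pipeline: list[Stage], policy_max_days: int) -> list[str]:
    """Return the names of stages that keep data longer than policy allows."""
    return [s.name for s in pipeline if s.retention_days > policy_max_days]


# Illustrative pipeline: the vendor service sits between two internal systems.
pipeline = [
    Stage("intake", retention_days=7),
    Stage("vendor_ai", retention_days=90),  # vendor default, never overridden
    Stage("reporting", retention_days=30),
]

print(effective_retention(pipeline))
print(retention_conflicts(pipeline, policy_max_days=30))
```

Under these assumptions the pipeline’s effective retention is set by the vendor stage, even though both internal systems comply with the 30-day policy.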

Hybrid workflows that combine vendor AI services with internal systems create governance boundary ambiguity. Data moves between organizationally controlled environments and vendor-controlled environments. The governance policies differ. The default configurations differ. The accountability structures differ. Without explicit configuration that aligns vendor defaults with organizational governance requirements, hybrid workflows operate under two incompatible governance regimes simultaneously.

Model versioning in vendor services introduces temporal configuration risk. When a vendor updates its model, the new version may operate under different default parameters — different processing logic, different data handling, different output characteristics. The organization’s governance review evaluated the previous version. The new version arrives with its own defaults. The organization may not receive notification that governance-relevant defaults have changed with the model update.

Monitoring obligations require vendor configuration visibility. Organizations that monitor their AI systems for performance, drift, and compliance must extend that monitoring to vendor configuration state. Without configuration monitoring, the organization cannot detect when its governance posture has changed due to vendor actions. Monitoring that excludes vendor configuration produces a partial governance picture that may not reflect operational reality.

Integration pressure accelerates default acceptance. When business timelines demand rapid deployment, configuration review is compressed or deferred. Vendor defaults are accepted because overriding them requires additional engineering effort, additional governance review, and additional deployment time. Each deferral creates a governance gap. Deferred reviews are rarely completed retroactively.

Vendor policy evolution operates on vendor timelines, not organizational governance timelines. Vendors update their terms of service, privacy policies, and data processing agreements on their own schedule. These updates may modify the meaning of existing defaults — the configuration setting remains the same, but the vendor’s interpretation of what that setting permits has changed. The configuration persists. The governance meaning shifts.

A vendor service that cannot be reconfigured or terminated without operational disruption is not a dependency. It is infrastructure without governance control.

Governance Architecture for Vendor Default Management

Vendor default governance requires systematic evaluation, configuration authority, and continuous monitoring — not one-time procurement review.

Pre-deployment default audit. Every vendor AI service must undergo a comprehensive default configuration audit before production deployment. This audit maps every governance-relevant default — data retention, logging, training permissions, processing pipeline, jurisdictional routing, output handling — against organizational governance policy. Deployment proceeds only after defaults are either confirmed as aligned or explicitly overridden.
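
A pre-deployment audit of this kind can be expressed as a set of policy rules evaluated against the vendor’s documented defaults, with deployment gated on the result. The sketch below is one possible shape under stated assumptions: the policy thresholds, setting names, and region codes are hypothetical, and a setting the vendor does not document is treated as a finding in its own right.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass(frozen=True)
class Finding:
    setting: str
    vendor_default: Any
    reason: str


# A rule returns None when a default is acceptable, otherwise a reason.
Rule = Callable[[Any], Optional[str]]

# Hypothetical organizational policy; thresholds are illustrative only.
POLICY: dict[str, Rule] = {
    "retention_days": lambda v: None if v <= 30 else "exceeds 30-day maximum",
    "training_on_customer_data": lambda v: None if v is False else "training must be disabled",
    "log_query_content": lambda v: None if v is False else "query content must not be logged",
    "processing_regions": lambda v: None if set(v) <= {"eu"} else "routing outside approved regions",
}


def audit_defaults(defaults: dict[str, Any], policy: dict[str, Rule]) -> list[Finding]:
    """Compare vendor defaults against policy and return every misalignment."""
    findings = []
    for setting, rule in policy.items():
        if setting not in defaults:
            findings.append(Finding(setting, None, "default not documented"))
            continue
        reason = rule(defaults[setting])
        if reason is not None:
            findings.append(Finding(setting, defaults[setting], reason))
    return findings


def may_deploy(findings: list[Finding], overridden: set[str]) -> bool:
    """Deployment proceeds only if every finding has an explicit override."""
    return all(f.setting in overridden for f in findings)


vendor_defaults = {
    "retention_days": 90,
    "training_on_customer_data": True,
    "log_query_content": False,
}
findings = audit_defaults(vendor_defaults, POLICY)
for f in findings:
    print(f"{f.setting}: {f.reason}")
print("deploy:", may_deploy(findings, overridden={"retention_days"}))
```

The gate stays closed here because only one of the three findings has been overridden. An aligned default produces no finding and needs no override.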

Named configuration authority. Every governance-relevant vendor default, whether accepted or overridden, must be approved by a named individual with governance accountability. Configuration decisions must be documented with the rationale for acceptance or override. No default enters production without documented approval from someone accountable for its governance implications.
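
The approval requirement can be enforced with a small record type and a completeness check. The following is a minimal sketch. The field names, the two allowed actions, and the example approver and dates are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Any


@dataclass(frozen=True)
class ConfigApproval:
    setting: str
    approved_value: Any
    action: str     # "accept" the vendor default or "override" it
    approver: str   # a named individual, not a team alias
    rationale: str
    approved_on: date


def validation_errors(record: ConfigApproval) -> list[str]:
    """Return the reasons a record fails to count as a documented approval."""
    errors = []
    if record.action not in ("accept", "override"):
        errors.append("action must be 'accept' or 'override'")
    if not record.approver.strip():
        errors.append("approver is required")
    if not record.rationale.strip():
        errors.append("rationale is required")
    return errors


def unapproved(settings: list[str], records: list[ConfigApproval]) -> list[str]:
    """Return settings that lack a valid approval; these should block release."""
    approved = {r.setting for r in records if not validation_errors(r)}
    return [s for s in settings if s not in approved]


# Illustrative records: one complete, one missing approver and rationale.
complete = ConfigApproval(
    "retention_days", 30, "override", "J. Rivera",
    "Policy limits retention to 30 days", date(2024, 1, 15),
)
incomplete = ConfigApproval(
    "log_query_content", True, "accept", " ", "", date(2024, 1, 15),
)
print(unapproved(
    ["retention_days", "log_query_content", "cache_outputs"],
    [complete, incomplete],
))
```

An accepted default and an overridden default go through the same record, so accepting a vendor default becomes a documented decision, not an omission.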

Vendor change monitoring. Organizations must maintain continuous monitoring of vendor configuration state. When vendors modify defaults, update policies, or alter processing behavior, the monitoring system must detect the change, assess its governance impact, and trigger review. Vendor changes that affect governance posture require re-evaluation before continued operation under the new configuration.

Contractual governance floor. Procurement contracts must establish minimum governance configuration requirements — maximum retention periods, training data exclusions, logging limitations, jurisdictional constraints, and notification obligations for default changes. Vendors that cannot contractually guarantee these minimums present structural governance risk that must be assessed before adoption.

Configuration drift detection. Automated systems must periodically verify that deployed vendor configurations match approved configurations. Configuration drift — whether caused by vendor updates, deployment errors, or undocumented changes — triggers review and remediation. Drift is not tolerated as operational noise. It is treated as a governance event.
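
Drift detection reduces to a field-level comparison between the approved configuration and the configuration actually observed. The sketch below assumes both are available as flat dictionaries. How the deployed configuration is retrieved depends on the vendor and is not shown. Setting names and values are hypothetical.

```python
from typing import Any

# Marker for a setting that is present on one side only.
MISSING = "<missing>"


def detect_drift(approved: dict[str, Any], deployed: dict[str, Any]) -> dict[str, tuple]:
    """Map each drifted setting to (approved_value, deployed_value).

    Settings that appear on only one side are reported with MISSING,
    so newly introduced vendor settings surface as drift as well.
    """
    drift = {}
    for key in sorted(approved.keys() | deployed.keys()):
        approved_value = approved.get(key, MISSING)
        deployed_value = deployed.get(key, MISSING)
        if approved_value != deployed_value:
            drift[key] = (approved_value, deployed_value)
    return drift


approved = {"retention_days": 30, "training_on_customer_data": False}
deployed = {
    "retention_days": 90,                # changed since approval
    "training_on_customer_data": False,  # unchanged
    "cache_outputs": True,               # new setting, never reviewed
}

for setting, (was, now) in detect_drift(approved, deployed).items():
    # Each entry would open a governance review, not just a log line.
    print(f"DRIFT {setting}: approved={was!r} deployed={now!r}")
```

Reporting unknown settings as drift is a deliberate choice in this sketch: a setting the vendor introduces after approval is a default the organization has not yet reviewed.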

Suspension authority for governance violations. When vendor defaults cannot be configured to meet organizational governance requirements, or when vendor changes create unresolvable governance conflicts, defined authority must exist to suspend vendor service usage until alignment is restored. Continued use of a vendor service that violates governance policy is continued acceptance of unmanaged risk.

This framework does not eliminate vendor risk. It prevents vendor risk from becoming institutional failure.

Doctrine Closing

Vendor default governance is not a procurement problem. It is a governance architecture problem.

A default you did not choose is a decision you did not make. A configuration you did not audit is a risk posture you did not approve. A vendor change you did not detect is a governance shift you did not authorize.

Governance does not make vendor relationships unnecessary. It makes vendor dependencies survivable — by ensuring that every configuration decision that affects organizational risk is made by the organization, not inherited by omission.

Organizations that accept vendor defaults without governance review do not adopt AI tools. They adopt vendor risk postures — and call it deployment.