What Text Detection Confidence Actually Means

Detection systems frequently present confidence scores as precise percentages. The number appears definitive. The underlying reliability is often conditional.

This briefing examines what detection confidence actually represents — and where it becomes structurally fragile in production environments.

Detection Is Not Classification

Most explanations describe detection as pattern-matching:

“AI text has patterns. We measure them.”

This is incomplete.

Detection systems are statistical estimators operating under distribution uncertainty and evolving model ecosystems. Confidence represents probability conditioned on assumptions about:

- Training data distribution

- Model calibration

- Linguistic baselines

- Adversarial behavior

When those assumptions shift, confidence drifts.

Structural Signals vs. Surface Indicators

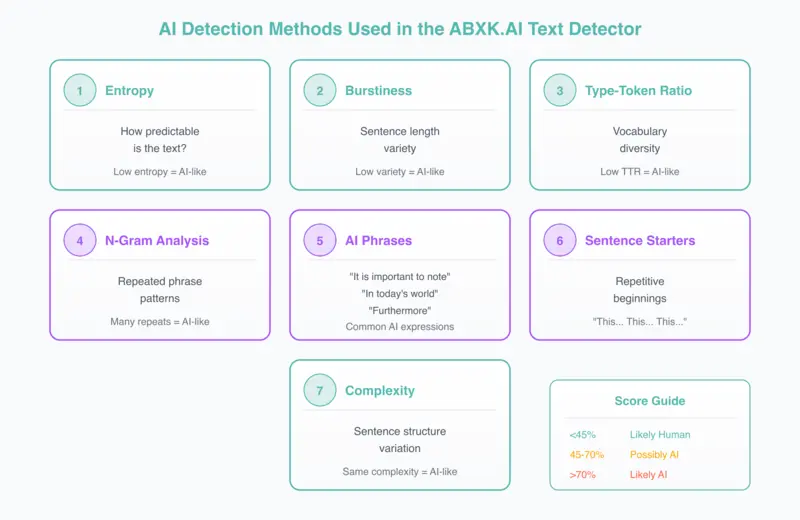

Common detection features include:

- Entropy patterns

- Sentence-length variance

- Phrase repetition

- Vocabulary distribution

- Model-specific artifact signals

Individually, these signals are weak. In combination, they produce probabilistic inference.

A 78% score does not mean "This text is AI." It means "Under current calibration, the model estimates elevated AI likelihood."

That distinction matters in governance contexts.

The Confidence Illusion

Detection systems often appear stable in controlled testing. Under operational pressure, three structural weaknesses emerge:

Calibration Drift

Confidence behavior shifts when models evolve or prompts change. A system calibrated against GPT-3.5 may produce unreliable scores against GPT-4o or Claude 3.

Distribution Shift

Academic, technical, or non-native writing may resemble AI patterns. The detector responds to statistical similarity, not actual origin.

Human-AI Hybridization

Edited AI content reduces detectable signals while preserving structure. Light editing can suppress artifact patterns without changing content.

The system still outputs a percentage. The structural meaning of that percentage changes.

Where Governance Fails

The primary failure is not detection error. It is governance misinterpretation.

Detection systems are often deployed before governance frameworks are defined. Common structural mistakes:

- Using confidence thresholds as enforcement triggers

- Treating probabilistic output as disciplinary evidence

- Ignoring model calibration updates

- Deploying detection without boundary definition

Detection systems require defined governance architecture. Without structure, they introduce organizational risk.

Detection as Reliability Study

At ABXK.AI, detection research examines:

- Cross-model variance

- Calibration stability

- False certainty patterns

- Confidence behavior under distribution shift

The objective is not binary classification performance. It is understanding reliability limits.

Detection systems should inform decisions.

They should not replace them.

Operational Implications

If deploying detection systems in production environments:

- Define acceptable false-positive tolerance — What threshold triggers review vs. action?

- Establish review and escalation paths — Who decides when confidence is ambiguous?

- Monitor calibration drift — How often is the detection model updated?

- Separate investigative signals from enforcement decisions — Detection output is evidence, not verdict.

Detection confidence is not a verdict. It is a statistical signal operating inside assumptions.

When assumptions change, confidence meaning changes.

Governance determines whether that shift becomes controlled risk — or unmanaged exposure.

Related: Why Deepfake Detection Confidence Is Structurally Fragile · The Cost Illusion in Applied AI Systems