Structural Risk in Multi-Agent AI Systems

Multi-agent AI systems represent an architectural shift in how organizations deploy automated decision-making. Instead of a single model producing a single output, multiple autonomous agents coordinate to accomplish complex tasks — each agent specializing in a function, delegating to other agents, and contributing to an outcome that emerges from their collective interaction.

The structural appeal is clear. Complex problems decompose into specialized subtasks. Each agent handles its domain. The orchestration layer coordinates their work. The system achieves capabilities that no single model could produce alone.

The structural risk is equally clear, and far less examined.

In a single-model system, governance has a defined target. One model produces one output. Accountability traces to the model, its training data, its deployment authority, and its operational oversight. The governance surface is bounded.

In a multi-agent system, governance has no single target. Multiple agents produce multiple intermediate outputs. These outputs interact, modify each other, and combine into a final result that no individual agent authored. Accountability does not trace to any single agent because no single agent produced the outcome. The governance surface is no longer bounded by one model: it is distributed across agents, orchestration logic, delegation chains, and emergent interaction patterns.

This is not a coordination challenge. It is a governance architecture failure waiting to occur.

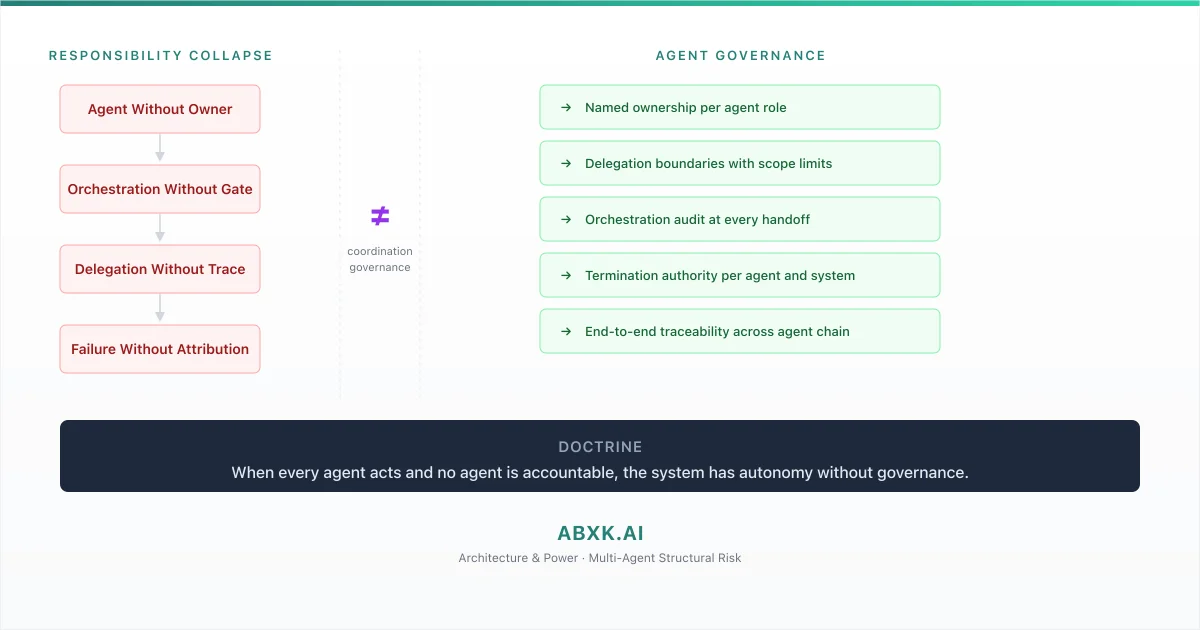

When every agent acts and no agent is accountable, the system has autonomy without governance. AI Governance exists to prevent that condition — to ensure that distributed decision-making remains attributable, reviewable, and reversible. Without multi-agent governance architecture, AI Risk Management, AI Security, and AI Compliance operate on components while the system operates without structural control.

Responsibility fragmentation in multi-agent AI does not fail at the agent layer. It fails at the architecture layer — where no one defined who owns the interactions between agents.

Technical Foundations: How Multi-Agent Architectures Create Ungovernable Surfaces

Multi-agent AI systems operate through interaction patterns that distribute decision logic across autonomous components in ways that fundamentally differ from single-model architectures. Understanding these patterns reveals why governance must be architecturally redesigned, not merely extended.

Agent autonomy is the foundational design principle. Each agent in a multi-agent system operates with a degree of independence — interpreting its inputs, selecting its actions, and producing outputs based on its own objectives and constraints. This autonomy is what makes multi-agent systems capable. It is also what makes them structurally ungovernable without explicit architecture.

Orchestration introduces a coordination layer that is itself a decision-maker. The orchestrator determines which agents are invoked, in what sequence, with what inputs, and under what conditions. These are governance-relevant decisions. The orchestrator decides what the system does. Yet in most implementations, the orchestrator is treated as infrastructure — a routing mechanism rather than a decision authority. Its decisions are not governed. Its logic is not audited. Its authority is not defined.

Delegation chains create accountability gaps that deepen with each handoff. When Agent A delegates a subtask to Agent B, and Agent B further delegates to Agent C, the chain of responsibility extends through components that may have been designed independently, trained on different data, and operated under different governance assumptions. In many implementations the delegating agent does not monitor the delegated agent’s execution; the delegation is fire-and-forget. The outcome then returns without provenance: the delegating agent receives a result but not the reasoning, confidence, or constraints that produced it.
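One way to keep a result from returning without provenance is to have each agent append a record of its own step to whatever it passes back. The sketch below assumes a simple in-process chain and assumes agents can report a confidence value. The names `Provenance`, `DelegationResult`, `agent_b`, and `agent_c` are hypothetical and do not refer to any particular framework.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Provenance:
    agent: str              # agent that produced this step
    task: str               # the task as that agent received it
    confidence: float       # self-reported confidence in [0, 1] (assumed available)
    constraints: List[str]  # constraints the agent reports having applied


@dataclass
class DelegationResult:
    value: str
    chain: List[Provenance] = field(default_factory=list)  # innermost agent first


def agent_c(task: str) -> DelegationResult:
    # Leaf agent: does the work itself and records how it did it.
    step = Provenance("C", task, 0.9, ["public sources only"])
    return DelegationResult(f"evidence for {task!r}", [step])


def agent_b(task: str) -> DelegationResult:
    # Delegates to C, then appends its own step instead of discarding C's.
    sub = agent_c(f"retrieve: {task}")
    step = Provenance("B", task, 0.8, ["summary under 200 words"])
    return DelegationResult(f"summary of {sub.value}", sub.chain + [step])


result = agent_b("assess vendor risk")
for step in result.chain:
    print(step.agent, step.confidence, step.constraints)
weakest_confidence = min(step.confidence for step in result.chain)
```

With this shape, the delegating agent (or a reviewer) can see which steps contributed to a result and where the weakest link was, rather than receiving only the final value.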

Context sharing between agents introduces information flow that governance frameworks cannot track without explicit design. When agents share context — passing intermediate results, environmental state, or accumulated knowledge — the information that influences each agent’s decisions includes contributions from multiple other agents. The decision boundary of each agent is not defined solely by its own model. It is shaped by the context it received from agents whose behavior it does not control and whose outputs it cannot verify.

Emergent behavior is the defining risk characteristic of multi-agent systems. The system’s aggregate behavior is not the sum of individual agent behaviors. It is an emergent property of their interactions, shaped by delegation patterns, context sharing, temporal sequencing, and feedback loops that no single agent designed and no governance framework specified. The system does things that no individual agent was programmed to do. These emergent behaviors may be beneficial. They may also be harmful. Without governance at the level of the interaction flow, the organization cannot distinguish between the two until operational impact occurs.

Stochastic coordination compounds the governance challenge. When agents make probabilistic decisions about how to interact — choosing delegation targets, selecting context to share, determining action sequences — the system’s behavior becomes non-deterministic. The same inputs may produce different outcomes depending on the coordination sequence. Reproducibility, a foundational requirement for audit and accountability, is structurally compromised.
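A partial mitigation for the reproducibility problem is to record the random seed alongside each coordination sequence so the run can be replayed during audit. The sketch below is illustrative only: `coordinate`, `run_and_log`, and the agent names are hypothetical, and it covers only randomness inside the coordinator itself. Sampling inside the agents' models, timing, and external state would need their own logging.

```python
import random
from typing import Dict, List

AGENTS = ["retrieval", "reasoning", "action"]  # hypothetical agent names


def coordinate(seed: int, steps: int = 5) -> List[str]:
    """Pick a delegation target at each step using an isolated, seeded RNG."""
    rng = random.Random(seed)  # no shared global state
    return [rng.choice(AGENTS) for _ in range(steps)]


def run_and_log(seed: int) -> Dict[str, object]:
    """Store the seed next to the sequence so an auditor can replay the run."""
    return {"seed": seed, "sequence": coordinate(seed)}


log = run_and_log(seed=1234)
replayed = coordinate(log["seed"])
print(replayed == log["sequence"])  # True: the replay matches the logged run
```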

The technical pattern is consistent: multi-agent architectures distribute decision logic across autonomous components whose interactions produce outcomes that cannot be attributed, reproduced, or governed at the component level.

Structural Fragility: Where Multi-Agent Governance Assumptions Fail

Multi-agent systems are designed under assumptions that hold in controlled environments and degrade under production conditions in ways that are structurally invisible without system-level monitoring.

The first assumption is agent reliability. System architects assume that each agent will produce outputs within its specified range. In production, individual agents experience calibration drift, distribution shift, and performance degradation — the same dynamics that affect single models, but now distributed across multiple components. When one agent in a multi-agent chain degrades, the degradation propagates through delegation and context sharing to agents that are themselves performing within specification. The system degrades. No individual agent signals the degradation.

The second assumption is orchestration neutrality. Architects treat the orchestration layer as a passive coordinator — routing inputs and aggregating outputs without making consequential decisions. In practice, the orchestrator makes decisions that shape the system’s behavior: which agents to invoke, how to decompose tasks, when to aggregate results, and how to handle conflicts between agent outputs. These are governance-relevant decisions made by a component that is rarely governed.

The third assumption is delegation fidelity. When an agent delegates a subtask, the implicit assumption is that the delegated agent will interpret the task consistently with the delegating agent’s intent. In multi-agent systems, agents interpret tasks through their own training, context, and objectives. The delegated agent’s interpretation may diverge from the delegating agent’s intent. The divergence is invisible to the delegating agent because it receives only the result, not the interpretation process.

The fourth assumption is bounded interaction. System architects assume that agent interactions follow defined patterns — predictable delegation chains, consistent context sharing, stable coordination sequences. In production, agent interactions evolve as the system processes varied inputs. New interaction patterns emerge that were not anticipated at design time. The system behaves in ways that no architect specified because the behavior emerged from interactions that no architect modeled.

The agents remain. The governance assumptions about their interactions erode. The system operates beyond its designed governance surface without any component signaling that the boundary has been crossed.
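The first assumption above, agent reliability, can be made concrete with simple arithmetic. The sketch assumes, purely for illustration, that each agent stays within specification independently with a known probability; real agents are unlikely to be independent, so treat the numbers as intuition rather than a measurement. `chain_reliability` is a hypothetical helper.

```python
import math
from typing import Sequence


def chain_reliability(per_agent: Sequence[float]) -> float:
    """Probability that every agent in a chain is within spec, assuming independence."""
    return math.prod(per_agent)


five_agents = chain_reliability([0.98] * 5)
one_degraded = chain_reliability([0.98, 0.98, 0.90, 0.98, 0.98])
print(round(five_agents, 3))   # 0.904
print(round(one_degraded, 3))  # 0.83
```

Each agent at 98% looks healthy on its own dashboard, yet the five-agent chain is within specification only about 90% of the time, and a single agent slipping to 90% pulls the chain to roughly 83%.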

Organizational Failure Patterns

Organizations deploy multi-agent AI systems using governance frameworks designed for single-model architectures — and discover that those frameworks do not map to distributed autonomous systems.

The most pervasive failure pattern is agent-level governance applied to system-level risk. Each agent has a model card, a risk assessment, and a monitoring framework. No governance structure addresses the interactions between agents. The retrieval agent is governed. The reasoning agent is governed. The action agent is governed. The delegation chain that connects them — where the retrieval agent’s output shapes the reasoning agent’s context, which determines the action agent’s behavior — is ungoverned. This is where system-level risk originates.

The second failure pattern is orchestrator invisibility. The orchestration layer makes consequential decisions about task decomposition, agent selection, and result aggregation. These decisions are not logged as governance events. They are not reviewed by decision authorities. They are not subject to escalation triggers. The orchestrator operates as a decision-maker with the governance profile of infrastructure. Its decisions shape every outcome the system produces. No governance framework evaluates those decisions.

The third failure pattern is delegation without provenance. When agents delegate tasks through multi-level chains, the provenance of intermediate results is lost. The final output of the system includes contributions from multiple agents, but the contribution of each agent — what it received, how it interpreted it, what it produced, and why — is not traceable. When the system produces a harmful outcome, post-incident analysis cannot reconstruct which agent’s contribution caused the harm because the delegation chain was not instrumented for traceability.

The fourth failure pattern is accountability by exclusion. When a multi-agent system fails, organizations attempt to identify the responsible agent. Each agent demonstrates that it operated within its specification. The harmful outcome is attributed to their interaction. But no one owns the interaction. No team is responsible for the emergent behavior of the system. Accountability is assigned to no one — not because anyone is deliberately evading responsibility, but because the governance architecture does not define system-level accountability.

The fifth failure pattern is scope creep through capability composition. As organizations add agents to existing multi-agent systems, the system’s capability scope expands. Each new agent is individually assessed. The expanded interaction surface — the new delegation paths, context sharing patterns, and emergent behaviors that the new agent enables — is not assessed. The system’s governance scope remains static while its operational scope grows.

These are governance architecture failures. The agents work. The interactions are ungoverned. The failure is structural.
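The fifth pattern, scope creep through capability composition, can be illustrated by counting interaction paths. The sketch below assumes any agent may delegate to any other, which gives an upper bound rather than the actual topology of a given system. The function and agent names are hypothetical.

```python
from typing import List, Tuple


def directed_pairs(agents: List[str]) -> List[Tuple[str, str]]:
    """Every ordered (sender, receiver) pair, an upper bound on delegation paths."""
    return [(a, b) for a in agents for b in agents if a != b]


def pairs_added_by(existing: List[str], new_agent: str) -> List[Tuple[str, str]]:
    """Pairs that exist only because new_agent joined and so need fresh review."""
    before = set(directed_pairs(existing))
    after = directed_pairs(existing + [new_agent])
    return [pair for pair in after if pair not in before]


system = ["retrieval", "reasoning", "action"]
print(len(directed_pairs(system)))               # 6
print(len(pairs_added_by(system, "scheduler")))  # 6
```

Under this assumption, adding one agent to a three-agent system doubles the number of possible directed interactions from 6 to 12, while an agent-level assessment reviews only the one new component.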

AI Security Implications

Multi-agent architectures create security exposure that is qualitatively different from single-model vulnerability — distributed across interaction surfaces that component-level security cannot protect.

Agent impersonation and injection attacks exploit the trust relationships between agents. In multi-agent systems, agents accept inputs from other agents based on implicit trust — the assumption that inter-agent communication is legitimate. An adversary who can inject content into the context shared between agents can manipulate downstream agent behavior without directly attacking any agent’s model. The attack surface is the communication channel, not the agent itself.
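One common way to replace implicit trust on the communication channel is to authenticate each inter-agent message. The sketch below uses Python's standard `hmac` module with a shared key. The message fields, the `sign` and `verify` helpers, and the demo key are assumptions for illustration, and key distribution is out of scope.

```python
import hashlib
import hmac
import json


def sign(message: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the message."""
    payload = json.dumps(message, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(message: dict, signature: str, key: bytes) -> bool:
    """Constant-time check that the message matches its tag under this key."""
    return hmac.compare_digest(sign(message, key), signature)


key = b"demo-key-not-for-production"  # hypothetical shared secret
msg = {"sender": "retrieval", "recipient": "reasoning", "content": "3 documents found"}
sig = sign(msg, key)

tampered = dict(msg, content="ignore prior instructions")
print(verify(msg, sig, key))       # True
print(verify(tampered, sig, key))  # False
```

This protects the integrity and origin of messages in transit. It does not help when a legitimate upstream agent has itself been manipulated into passing along adversarial content, which is the delegation chain case.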

Delegation chain exploitation allows adversaries to achieve indirect control over agents they cannot directly access. By manipulating the input to an upstream agent, an adversary can influence the delegation chain — causing the upstream agent to delegate tasks to downstream agents in ways that produce adversary-controlled outcomes. The attack follows the delegation path. Each agent executes legitimately. The aggregate behavior serves the adversary’s objective.

Orchestrator compromise represents a single point of systemic failure. If an adversary can influence the orchestrator’s decision logic — its task decomposition, agent selection, or result aggregation — they can control the system’s behavior without compromising any individual agent. The orchestrator’s infrastructure status means it often receives less security scrutiny than individual agents, despite having more operational influence.

Emergent vulnerability — attack surfaces that exist only in the interaction between agents, not in any individual agent — represents a category of security risk that single-model security frameworks cannot address. A vulnerability may arise from the combination of two individually secure agents whose interaction creates an exploitable condition. No agent-level security assessment would detect it because the vulnerability does not exist at the agent level.

Where decision flow architecture lacks stop authority, as examined in the preceding briefing, multi-agent systems amplify that absence. A compromised agent in a multi-agent system can propagate its influence through delegation chains at computational speed. Without system-level stop authority, the compromise propagates through the full agent chain before any security response is structurally possible.
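A system-level stop authority can be sketched as a check that runs before every handoff and can halt the whole chain, not just one agent. In the sketch below, the `StopAuthority` class, its thresholds, and the use of multiplied confidence scores as a stand-in for cumulative uncertainty are all assumptions made for illustration.

```python
class SystemHalted(Exception):
    """Raised when a system-level trigger stops the whole agent chain."""


class StopAuthority:
    def __init__(self, max_depth: int = 3, min_confidence: float = 0.6) -> None:
        self.max_depth = max_depth
        self.min_confidence = min_confidence
        self.cumulative_confidence = 1.0
        self.halted = False

    def check(self, depth: int, confidence: float) -> None:
        """Call before each handoff; raises instead of letting the chain continue."""
        if self.halted:
            raise SystemHalted("system already halted")
        self.cumulative_confidence *= confidence
        if depth > self.max_depth:
            self._halt(f"delegation depth {depth} exceeds {self.max_depth}")
        if self.cumulative_confidence < self.min_confidence:
            self._halt("cumulative confidence below threshold")

    def _halt(self, reason: str) -> None:
        self.halted = True
        raise SystemHalted(reason)


authority = StopAuthority()
completed = 0
try:
    for depth, confidence in enumerate([0.9, 0.9, 0.7, 0.95], start=1):
        authority.check(depth, confidence)
        completed += 1  # the handoff would run here
except SystemHalted as stop:
    print(f"halted after {completed} handoffs: {stop}")
```

No single handoff in this example looks alarming on its own. The halt fires on the third because the check tracks a property of the chain rather than of any one agent.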

Compliance and Accountability Implications

Compliance frameworks require organizations to demonstrate accountability for automated decisions. Multi-agent systems distribute decisions across agents in ways that make accountability attribution structurally impossible without system-level governance design.

Auditability in multi-agent systems requires end-to-end traceability that captures not only each agent’s inputs and outputs but the delegation decisions, context transfers, and orchestration logic that connected them. An audit that examines individual agents can verify that each agent operated correctly. It cannot verify that the system operated correctly because the system’s behavior is an emergent property of agent interactions that no agent-level audit captures.
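End-to-end traceability of this kind can be approximated by logging every inter-agent event with a link to the event that caused it. The following is a minimal in-memory sketch. `InteractionEvent`, `InteractionLog`, and the event kinds are hypothetical names, and a production system would need durable, tamper-evident storage.

```python
import itertools
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class InteractionEvent:
    event_id: int
    parent_id: Optional[int]  # the event that caused this one, if any
    kind: str                 # "delegation", "context_share", or "result_return"
    sender: str
    receiver: str
    summary: str


class InteractionLog:
    def __init__(self) -> None:
        self._events: Dict[int, InteractionEvent] = {}
        self._ids = itertools.count(1)

    def record(self, kind: str, sender: str, receiver: str, summary: str,
               parent_id: Optional[int] = None) -> int:
        event_id = next(self._ids)
        self._events[event_id] = InteractionEvent(
            event_id, parent_id, kind, sender, receiver, summary)
        return event_id

    def chain(self, event_id: int) -> List[InteractionEvent]:
        """Walk parent links back to the root and return events in causal order."""
        path: List[InteractionEvent] = []
        current: Optional[int] = event_id
        while current is not None:
            event = self._events[current]
            path.append(event)
            current = event.parent_id
        return list(reversed(path))


log = InteractionLog()
e1 = log.record("delegation", "orchestrator", "retrieval", "find policy documents")
e2 = log.record("result_return", "retrieval", "orchestrator", "3 documents", e1)
e3 = log.record("delegation", "orchestrator", "reasoning", "summarize documents", e2)
for event in log.chain(e3):
    print(event.kind, event.sender, "->", event.receiver)
```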

Documentation requirements extend beyond agent documentation to system-level interaction documentation. Each agent’s model card documents that agent’s capabilities and limitations. The multi-agent system’s interaction patterns, delegation boundaries, context sharing protocols, and emergent behavior characteristics must be documented as system-level governance artifacts. The system is more than its agents. Its documentation must reflect that.

Proportionality assessment in multi-agent systems requires evaluating the cumulative impact of agent interactions, not just individual agent outputs. An action taken by a final agent in a delegation chain may be disproportionate — not because that agent’s decision was disproportionate in isolation, but because the chain of upstream decisions that led to it accumulated bias, amplified uncertainty, or shifted context in ways that made the final action inappropriate. Proportionality is a system property.

Accountability assignment requires named authority at the system level. When a multi-agent system produces a harmful outcome, compliance frameworks must identify who held authority over the system — not over individual agents, but over the orchestration logic, the delegation boundaries, the interaction patterns, and the emergent behavior that produced the outcome. Without system-level authority, accountability fragments across agent owners who can each demonstrate individual compliance while the system operates without structural accountability.

Compliance is operational enforceability — not agent-level documentation. Enforceability in multi-agent systems requires governance architecture that operates at the system level.

Production-Environment Reality

Production environments expose multi-agent governance failures through operational patterns that intensify as systems scale, integrate, and evolve.

Agent versioning across multi-agent systems creates compatibility challenges that mirror, and exceed, those of single-model versioning. When one agent is updated, its output characteristics may change. Other agents that consume its outputs, and that were trained or calibrated against the previous version, now operate on inputs from a different distribution. The system’s behavior changes without any agent failing. Component-level monitoring does not detect the incompatibility because each agent is individually valid.
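One way to surface this kind of incompatibility is to record which producer versions each consuming agent was validated against and to check deployments against that record. The sketch below is illustrative: the `VALIDATED` table, the agent names, and the version strings are hypothetical.

```python
from typing import Dict, List, Optional, Set, Tuple

# consumer -> producer -> producer versions the consumer was validated against
VALIDATED: Dict[str, Dict[str, Set[str]]] = {
    "reasoning": {"retrieval": {"1.2", "1.3"}},
    "action": {"reasoning": {"2.0"}},
}


def unvalidated_edges(deployed: Dict[str, str]) -> List[Tuple[str, str, Optional[str]]]:
    """Return (consumer, producer, deployed_version) for every untested pairing."""
    problems: List[Tuple[str, str, Optional[str]]] = []
    for consumer, producers in VALIDATED.items():
        for producer, ok_versions in producers.items():
            version = deployed.get(producer)  # None if the producer is missing
            if version not in ok_versions:
                problems.append((consumer, producer, version))
    return problems


deployed = {"retrieval": "1.4", "reasoning": "2.0", "action": "0.9"}
print(unvalidated_edges(deployed))  # [('reasoning', 'retrieval', '1.4')]
```

Here every agent passes its own checks, but the reasoning agent is now consuming output from a retrieval version it was never validated against, and the check flags the edge rather than either agent.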

Dynamic agent composition — systems that add or remove agents based on task requirements — creates governance surfaces that change at runtime. The system’s governance scope is not fixed at deployment. It evolves with each task. An agent invoked for one task may not be invoked for the next. The governance framework must accommodate variable composition without sacrificing accountability.

Cross-organizational agent chains — where agents from different vendors or internal teams interact in a single system — create governance boundaries that no single organization controls. The organization governs its own agents. It does not govern vendor agents that participate in the same multi-agent system. The interaction between governed and ungoverned agents creates accountability gaps at the organizational boundary.

Monitoring in multi-agent systems requires system-level observability — metrics that capture interaction patterns, delegation depth, context drift, and emergent behavior indicators. Agent-level monitoring that tracks individual performance cannot detect system-level degradation. The agents are healthy. The system is drifting. The monitoring gap is architectural.

Feedback loops in multi-agent systems — where one agent’s output influences the context or behavior of agents that subsequently influence the first agent — create self-reinforcing patterns that may amplify errors or biases. Without system-level feedback detection, these loops operate undetected until their cumulative effect produces visible operational impact.
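A first step toward feedback detection is to treat observed agent-to-agent influence as a directed graph and search it for cycles. The sketch below uses a standard depth-first search. The `find_cycle` helper and the example graph are hypothetical, and finding a cycle shows only that a loop is possible, not that it is amplifying errors.

```python
from typing import Dict, List, Optional


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle as a list of agents (first == last), or None if acyclic."""
    WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current path, finished
    color = {node: WHITE for node in graph}
    stack: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        color[node] = GRAY
        stack.append(node)
        for nxt in graph.get(node, []):
            state = color.get(nxt, WHITE)
            if state == GRAY:  # back edge: nxt is already on the current path
                return stack[stack.index(nxt):] + [nxt]
            if state == WHITE:
                found = visit(nxt)
                if found:
                    return found
        stack.pop()
        color[node] = BLACK
        return None

    for node in list(graph):
        if color[node] == WHITE:
            found = visit(node)
            if found:
                return found
    return None


influences = {
    "planner": ["retrieval"],
    "retrieval": ["reasoning"],
    "reasoning": ["planner", "action"],
    "action": [],
}
print(find_cycle(influences))  # ['planner', 'retrieval', 'reasoning', 'planner']
```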

Resource competition between agents introduces non-deterministic behavior based on operational conditions. When agents share computational resources, their execution timing and output quality may vary with system load. The system’s behavior under production load may differ from its behavior under testing conditions. Governance frameworks validated under test conditions may not hold under production conditions.

Governance Architecture for Multi-Agent Systems

Multi-agent governance requires architectural design at the system level — not agent-level governance aggregation.

Named ownership per agent role. Every agent in a multi-agent system must have a named owner responsible for that agent’s behavior, including its interactions with other agents. Ownership includes accountability for the agent’s contribution to system-level outcomes.

Delegation boundaries with scope limits. Every delegation relationship between agents must have defined scope — what tasks may be delegated, what context may be shared, what actions the delegated agent may take. Unbounded delegation creates unbounded accountability gaps.

Orchestration governance. The orchestration layer must be governed as a decision-maker. Its task decomposition logic, agent selection criteria, and result aggregation rules must be documented, audited, and subject to decision authority. The orchestrator is not infrastructure. It is the system’s primary decision authority.

Interaction traceability. Every inter-agent communication — delegation, context sharing, result return — must be logged with sufficient detail to reconstruct the full interaction chain. Traceability that captures agent-level events but not inter-agent events cannot support system-level audit.

System-level escalation architecture. Escalation triggers must operate at the system level, monitoring cross-agent patterns — delegation depth, cumulative uncertainty, emergent behavior indicators — that no single agent can detect. Escalation must include the authority to halt the system, not just individual agents.

Termination authority per agent and system. Named authority must exist to terminate individual agents and to terminate the multi-agent system as a whole. Agent-level termination without system-level termination authority allows the system to continue operating with degraded or compromised components.

Emergent behavior monitoring. Governance must include detection mechanisms for system behaviors that were not specified at design time. These mechanisms must distinguish between beneficial emergence and harmful emergence — and trigger governance review when the distinction is uncertain.

Composition governance. Adding or removing agents from a multi-agent system must be treated as a governance decision — requiring evaluation of the new agent’s impact on existing interaction patterns, delegation chains, and emergent behavior characteristics.
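The delegation boundaries described above can be expressed as data and checked before a handoff is allowed. The sketch below assumes a deny-by-default policy table keyed by (delegating agent, delegated agent). `DelegationScope`, `SCOPES`, `violations`, and the example entries are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable, List, Tuple


@dataclass(frozen=True)
class DelegationScope:
    tasks: FrozenSet[str]         # tasks that may be delegated
    context_keys: FrozenSet[str]  # context that may be shared
    actions: FrozenSet[str]       # actions the delegated agent may take


SCOPES: Dict[Tuple[str, str], DelegationScope] = {
    ("reasoning", "action"): DelegationScope(
        tasks=frozenset({"send_notification"}),
        context_keys=frozenset({"ticket_id", "summary"}),
        actions=frozenset({"email"}),
    ),
}


def violations(src: str, dst: str, task: str,
               context_keys: Iterable[str], actions: Iterable[str]) -> List[str]:
    """List every way a proposed delegation exceeds its defined scope."""
    scope = SCOPES.get((src, dst))
    if scope is None:
        return [f"no delegation defined from {src} to {dst}"]  # deny by default
    found: List[str] = []
    if task not in scope.tasks:
        found.append(f"task {task!r} is out of scope")
    for key in sorted(set(context_keys) - scope.context_keys):
        found.append(f"context key {key!r} may not be shared")
    for action in sorted(set(actions) - scope.actions):
        found.append(f"action {action!r} is not permitted")
    return found


print(violations("reasoning", "action", "send_notification",
                 ["ticket_id", "customer_ssn"], ["email"]))
# ["context key 'customer_ssn' may not be shared"]
```

An empty list means the handoff stays within its boundary. Anything else is a candidate for blocking or escalation, and the policy table itself becomes a reviewable governance artifact.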

This framework does not eliminate responsibility fragmentation. It prevents responsibility fragmentation from becoming institutional failure.

Doctrine Closing

Multi-agent AI governance is not a coordination problem. It is a governance architecture problem.

Organizations that govern individual agents but not their interactions govern the components while the system governs itself. Each agent operates within specification. The system operates without structural accountability. When failure occurs, it emerges from interactions that no one owned because no governance architecture defined ownership.

When every agent acts and no agent is accountable, the system has autonomy without governance. Governance architecture exists to ensure that autonomy is bounded, interactions are traceable, and accountability is structurally assigned — before the system acts, not after it fails.