Speed vs Judgment in Experimental AI Systems

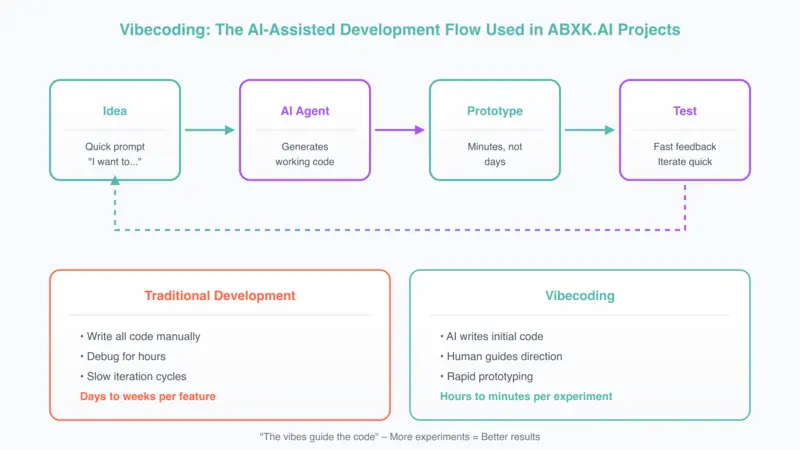

AI-assisted development tools have compressed the distance between idea and implementation. Prototypes that once required days now require hours. The acceleration is real. The risk lies in confusing speed with judgment.

This briefing examines how experimental velocity reshapes decision quality in applied AI environments.

Acceleration Changes Incentives

AI-assisted coding systems enable rapid:

- Prototype generation

- Feature experimentation

- Data pipeline construction

- API integration

The friction of implementation declines. When implementation cost decreases, experimentation frequency increases.

When failure becomes inexpensive, less thought goes into each experiment before it runs, and hypothesis quality often degrades.
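A simple calculation shows one way this plays out. Assume, for illustration only, that each cheap experiment is judged by an independent significance test at level alpha = 0.05, and that none of the tested ideas actually works. The probability that at least one of n experiments still looks like a success is 1 - (1 - alpha)^n, which climbs quickly as experiments multiply:

```python
def chance_of_spurious_win(alpha: float, n_experiments: int) -> float:
    """Probability that at least one of n independent tests at level alpha
    reports a false positive, assuming every tested hypothesis is actually null."""
    return 1 - (1 - alpha) ** n_experiments

for n in (1, 10, 20, 50):
    print(n, round(chance_of_spurious_win(0.05, n), 3))
# 1 0.05
# 10 0.401
# 20 0.642
# 50 0.923
```

Independence and all-null hypotheses are simplifying assumptions, and real experiments are usually correlated, so the exact figures will differ. The direction does not: more experiments with weakly defined hypotheses produce more results that look like progress without being progress.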

The Structural Trade-Off

In experimental AI systems, two forces compete:

Speed

Reduces time to feedback. Enables more iterations. Shortens discovery cycles.

Judgment

Ensures feedback is meaningful. Validates assumptions. Maintains decision discipline.

Acceleration can strengthen research cycles. It can also produce shallow experimentation: weakly defined hypotheses, incomplete evaluation criteria, unvalidated assumptions, premature scaling of fragile ideas.

Prototype Velocity vs. Production Discipline

AI-assisted development tools are effective for:

- Exploratory modeling

- Data transformations

- Feature engineering tests

- Throwaway validation scripts

They are not substitutes for:

- Security-critical systems

- Production architectures

- Long-term maintainable code

- Reliability engineering

The characteristic failure occurs when prototype velocity bypasses governance gates, and code written for the first list ends up doing the work of the second.

The Confidence Illusion in Experimental Work

Rapid prototyping introduces a specific risk: perceived progress without validated structure.

When AI tools generate functional code quickly:

- Teams may skip formal hypothesis articulation

- Evaluation metrics may be improvised

- Negative results may be misinterpreted

- Temporary code may migrate into production

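Working code creates the impression of correctness, yet code that runs has only shown that it executes, not that it computes the right thing.

Experimental AI systems amplify this bias: a model pipeline that runs end to end produces plausible-looking metrics whether or not the evaluation behind them is sound.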
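Of the failure modes listed above, the migration of temporary code into production is the easiest to check mechanically. A minimal sketch, assuming a hypothetical team convention in which every exploratory file carries a fixed marker comment; the marker text and function names are illustrative, not an established tool:

```python
from pathlib import Path

# Hypothetical team convention: exploratory files carry this marker comment.
PROTOTYPE_MARKER = "# PROTOTYPE: not for production"


def flag_prototype_files(sources: dict[str, str]) -> list[str]:
    """Return, sorted, the paths whose contents still carry the prototype marker."""
    return sorted(path for path, text in sources.items() if PROTOTYPE_MARKER in text)


def load_sources(root: str, pattern: str = "*.py") -> dict[str, str]:
    """Read every file matching `pattern` under `root` into a {path: contents} mapping."""
    return {
        str(p): p.read_text(encoding="utf-8", errors="replace")
        for p in Path(root).rglob(pattern)
        if p.is_file()
    }
```

Run as a CI step, a non-empty result from `flag_prototype_files(load_sources("src"))` (where `src` stands in for the production source tree) would fail the build. A marker convention only catches code that was labeled honestly, so it complements architectural review rather than replacing it.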

Judgment Requires Pre-Defined Criteria

Acceleration is not inherently harmful. It becomes risky when decision architecture is absent.

Before experimental execution, define:

- The hypothesis being tested

- The metric that determines success

- The threshold that justifies further investment

- The stop criteria for abandonment

- The conditions for productionization

Without these definitions, speed compounds ambiguity.
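The five definitions above can be written down as a single record before any experiment runs. A minimal sketch; the field names, the `Decision` categories, and the assumption that a higher metric value is better are illustrative choices, not a prescribed format. Hashing the spec gives a fingerprint that can be logged up front, so any later change to the criteria is visible.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"                          # inconclusive: keep experimenting within budget
    INVEST = "invest"                              # investment threshold met
    ABANDON = "abandon"                            # a stop criterion was triggered
    PRODUCTION_CANDIDATE = "production_candidate"  # eligible for formal reimplementation


@dataclass(frozen=True)  # frozen: the spec object cannot be mutated after creation
class ExperimentSpec:
    hypothesis: str
    metric: str                  # assumed: higher is better
    invest_threshold: float      # value that justifies further investment
    abandon_below: float         # stop criterion: abandon if the metric falls below this
    max_runs: int                # stop criterion: abandon if undecided after this many runs
    production_threshold: float  # condition for productionization

    def __post_init__(self) -> None:
        if not (self.abandon_below < self.invest_threshold <= self.production_threshold):
            raise ValueError("require abandon_below < invest_threshold <= production_threshold")
        if self.max_runs < 1:
            raise ValueError("max_runs must be at least 1")

    def fingerprint(self) -> str:
        """SHA-256 of the spec, to record before the first run and compare afterwards."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def decide(spec: ExperimentSpec, metric_value: float, runs_so_far: int) -> Decision:
    """Apply the pre-registered criteria to an observed metric value."""
    if metric_value >= spec.production_threshold:
        return Decision.PRODUCTION_CANDIDATE
    if metric_value >= spec.invest_threshold:
        return Decision.INVEST
    if metric_value < spec.abandon_below or runs_so_far >= spec.max_runs:
        return Decision.ABANDON
    return Decision.CONTINUE
```

For example, with `invest_threshold=0.75`, `abandon_below=0.60`, `max_runs=5`, and `production_threshold=0.85`, a value of 0.70 on the second run returns `CONTINUE`, while the same value on the fifth run returns `ABANDON`. The point of the design is that the thresholds exist before the result does.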

Hybrid Development — With Guardrails

A disciplined approach separates phases:

- Rapid exploration under controlled conditions

- Formal reimplementation for production environments

- Security and reliability validation

- Governance review before deployment

Speed is confined to exploration. Structure governs production. The boundary must be explicit.
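One way to make the boundary explicit is to model the four phases as ordered states and refuse any transition whose exit evidence is missing. A minimal sketch; the evidence labels are hypothetical placeholders for a team's real review artifacts, and in practice the check would live in a CI or release tool rather than a standalone function.

```python
from enum import Enum


class Phase(Enum):
    EXPLORATION = 1
    REIMPLEMENTATION = 2
    VALIDATION = 3          # security and reliability validation
    GOVERNANCE_REVIEW = 4
    DEPLOYED = 5


# Evidence each phase must produce before the next phase may begin.
# The labels are hypothetical placeholders for real review artifacts.
EXIT_REQUIREMENTS: dict[Phase, set[str]] = {
    Phase.EXPLORATION: {"experiment_criteria_met"},
    Phase.REIMPLEMENTATION: {"prototype_code_retired", "code_review_passed"},
    Phase.VALIDATION: {"security_review_passed", "reliability_tests_passed"},
    Phase.GOVERNANCE_REVIEW: {"governance_signoff"},
}


class GateError(Exception):
    """Raised when a phase transition lacks its required evidence."""


def advance(current: Phase, evidence: set[str]) -> Phase:
    """Return the next phase, or raise GateError if exit evidence is missing."""
    if current is Phase.DEPLOYED:
        raise GateError("already deployed; no further phase")
    missing = EXIT_REQUIREMENTS[current] - evidence
    if missing:
        raise GateError(f"cannot leave {current.name}: missing {sorted(missing)}")
    return Phase(current.value + 1)  # one step at a time: no phase can be skipped
```

Because `advance` moves exactly one phase at a time, there is no transition from exploration straight to deployment. Skipping reimplementation or review would require changing the gate itself, which is where governance can see it.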

Organizational Risk Patterns

Common structural failures in accelerated AI development:

- Prototype code deployed without architectural review

- Insufficient testing of AI-generated logic

- Reduced internal understanding of system behavior

- Inherited technical debt masked by early performance gains

- Overconfidence in AI-assisted output

These are governance failures — not tooling failures.

The Strategic Question

The relevant question is not: “Can we build faster?”

It is: “Does faster building improve decision quality?”

If acceleration degrades evaluation discipline, it increases structural risk.

If acceleration shortens feedback while preserving governance boundaries, it strengthens research cycles.

The distinction is architectural.

Speed is leverage. Leverage without structure magnifies error.

In experimental AI systems, judgment must scale with velocity. Otherwise, acceleration becomes exposure.
