Model Drift Is a Governance Problem

Every model deployed into a production environment begins to drift from the moment it encounters real-world data. The data on which the model was trained and validated does not stay representative of the data it receives in production. Input patterns evolve. Feature relevance shifts. Correlations that held during development weaken or invert under operational conditions.

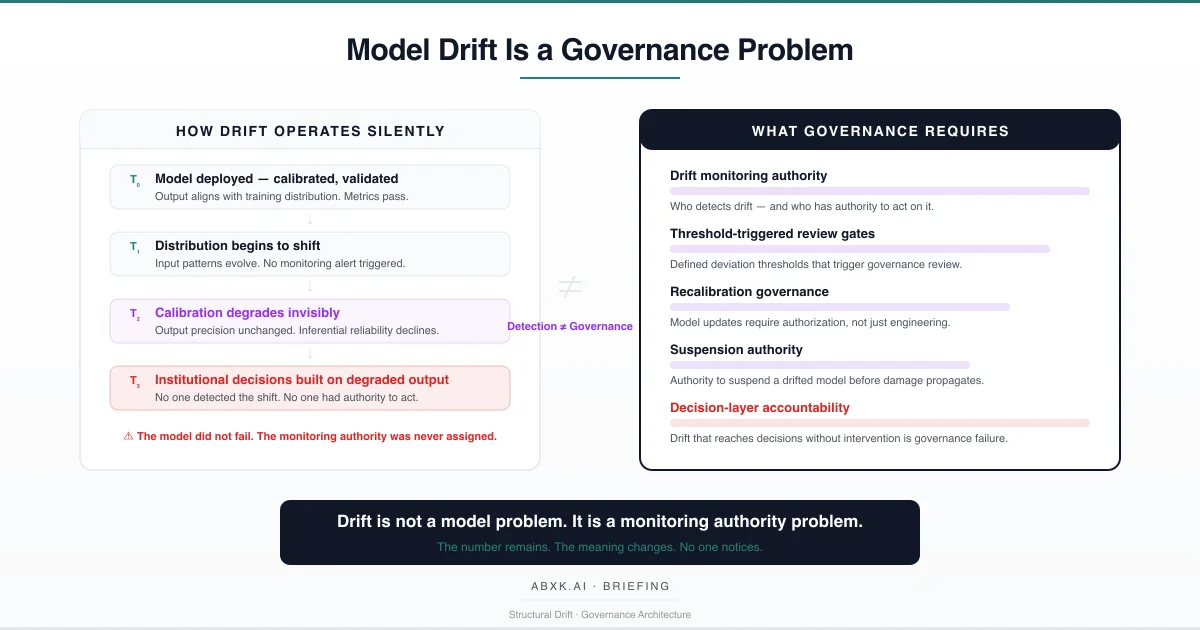

This is not a defect. It is a structural property of statistical inference systems operating in non-stationary environments. Drift is technically inevitable. What is not inevitable is governance failure — the organizational condition in which drift reaches the decision layer without detection, without review, and without anyone holding authority to act.

Model drift does not become a failure at the technical layer. It becomes one at the decision layer, where organizations deploy models without defining who monitors drift, what thresholds trigger intervention, and who has authority to suspend or recalibrate a degraded model.

AI Governance defines how production systems are monitored under accountability. Without governance architecture, drift operates silently — degrading the inferential reliability of every decision the model informs while the output continues to appear precise, consistent, and authoritative.

The distinction between drift as a technical property and drift as a governance failure is central to AI Risk Management, AI Security, and Responsible AI implementation in production environments.

The Technical Reality of Drift

Model drift is not a single phenomenon. It is a category of degradation processes that operate simultaneously, at different rates, and with different visibility profiles.

Data drift occurs when the statistical distribution of input data diverges from the distribution on which the model was trained. A predictive maintenance model calibrated on sensor data from one operational period encounters readings from degraded equipment, changed environmental conditions, or updated instrumentation. The input patterns shift. The model’s learned relationships no longer correspond to the data it receives. Output continues. Reliability declines.
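One common way to quantify data drift on a single numeric input is the Population Stability Index (PSI), which compares how a reference sample and a production sample fall into the same bins. The sketch below is a minimal illustration, assuming equal-width bins over the reference range and a small floor for empty bins; the function name `psi`, the bin count, and the example readings are illustrative choices, not part of any specific monitoring product.

```python
import math
from typing import Sequence


def psi(reference: Sequence[float], production: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a production sample.

    Bins are equal-width over the reference range; production values outside
    that range are clipped into the first or last bin.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clip out-of-range values
            counts[idx] += 1
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    prod_p = proportions(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref_p, prod_p))


reference = [i / 100 for i in range(1000)]   # illustrative training-period readings
production = [x + 3.0 for x in reference]    # the same readings after a level shift
print(psi(reference, reference))   # 0.0
print(psi(reference, production))  # large: most mass has moved into the upper bins
```

Values near zero indicate matching distributions. Cut-offs such as 0.1 and 0.25 are often quoted as rules of thumb, but the level that counts as material is a governance decision, not a property of the statistic.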

Concept drift occurs when the relationship between input features and target outcomes changes. A risk scoring model trained on historical failure patterns encounters new failure modes — modes that the training distribution did not represent. The model’s internal mapping between features and outcomes is no longer valid, but the output format remains unchanged. The score appears authoritative. The inference is stale.
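Concept drift usually cannot be seen in the inputs alone. It becomes visible only when true outcomes arrive and the model's recent error rate is compared with the rate measured at validation. A minimal sketch, assuming labels eventually become available; the class name `ErrorRateMonitor`, the window size, and the tolerance are hypothetical policy parameters.

```python
from collections import deque


class ErrorRateMonitor:
    """Tracks a rolling error rate over the most recent labeled predictions."""

    def __init__(self, baseline_error: float, window: int = 500, tolerance: float = 0.05):
        self.baseline_error = baseline_error  # error rate measured at validation
        self.tolerance = tolerance            # allowed absolute increase (assumed policy)
        self.outcomes: deque[bool] = deque(maxlen=window)  # True means the prediction was wrong

    def record(self, predicted: int, actual: int) -> None:
        self.outcomes.append(predicted != actual)

    def current_error(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def drifted(self) -> bool:
        # Judge only a full window, so a handful of early errors cannot raise a flag.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.current_error() > self.baseline_error + self.tolerance


monitor = ErrorRateMonitor(baseline_error=0.10, window=100, tolerance=0.05)
for i in range(100):
    monitor.record(predicted=1, actual=1 if i % 4 else 0)  # every fourth case is now wrong
print(monitor.current_error(), monitor.drifted())  # 0.25 True
```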

Calibration drift occurs when the probabilistic calibration of model output degrades over time. A model that initially produced well-calibrated confidence scores — where a 0.8 confidence corresponded to 80 percent accuracy — loses that alignment as the underlying distribution shifts. The confidence value persists. The correspondence between confidence and real-world accuracy erodes. Decisions made on the basis of confidence thresholds inherit the degradation without visibility.
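Calibration drift can be tracked with expected calibration error (ECE): predictions are grouped by stated confidence, and the gap between average confidence and observed accuracy in each group is averaged, weighted by group size. A minimal sketch assuming ten equal-width confidence bins; the function name and example values are illustrative.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float], correct: Sequence[bool], bins: int = 10
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    totals = [0] * bins
    conf_sums = [0.0] * bins
    hit_sums = [0] * bins
    for c, ok in zip(confidences, correct):
        idx = min(int(c * bins), bins - 1)  # confidence 1.0 goes into the top bin
        totals[idx] += 1
        conf_sums[idx] += c
        hit_sums[idx] += int(ok)
    n = len(confidences)
    ece = 0.0
    for t, cs, hs in zip(totals, conf_sums, hit_sums):
        if t:
            ece += (t / n) * abs(hs / t - cs / t)
    return ece


# At deployment: the model says 0.8, and 8 of 10 such predictions are correct.
print(expected_calibration_error([0.8] * 10, [True] * 8 + [False] * 2))
# Later: the model still says 0.8, but only 5 of 10 are correct.
print(expected_calibration_error([0.8] * 10, [True] * 5 + [False] * 5))
```

The first call returns a value at or near zero and the second a value near 0.3: the reported confidence is identical in both cases, while its correspondence to real-world accuracy has moved.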

Feature drift occurs when the predictive relevance of individual input features changes. Features that were strongly correlated with outcomes during training may lose predictive power as operational conditions evolve. The model continues to weight those features according to its training. The output reflects historical relevance, not current validity.
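Feature relevance can be approximated by comparing each feature's correlation with the outcome in the training data against the same correlation in a recent labeled window. This is a deliberately crude proxy, assumed here for illustration: Pearson correlation captures only linear association, and a production system may prefer model-based importance measures. The function names, feature names, and the `min_change` cut-off are hypothetical.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 when either input is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx and sy else 0.0


def relevance_shift(
    train_x: dict[str, Sequence[float]],
    train_y: Sequence[float],
    recent_x: dict[str, Sequence[float]],
    recent_y: Sequence[float],
    min_change: float = 0.2,
) -> dict[str, tuple[float, float]]:
    """Features whose correlation with the outcome moved by more than min_change."""
    flagged = {}
    for name, values in train_x.items():
        before = pearson(values, train_y)
        after = pearson(recent_x[name], recent_y)
        if abs(after - before) > min_change:
            flagged[name] = (round(before, 3), round(after, 3))
    return flagged


outcome = [0, 1, 2, 3, 4, 5]
train = {"vibration": [0, 1, 2, 3, 4, 5], "temperature": [1, 0, 3, 2, 5, 4]}
recent = {"vibration": [5, 4, 3, 2, 1, 0], "temperature": [1, 0, 3, 2, 5, 4]}
print(relevance_shift(train, outcome, recent, outcome))  # {'vibration': (1.0, -1.0)}
```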

These drift processes do not operate in isolation. In production environments, they compound. A model experiencing simultaneous data drift and concept drift can degrade faster and less predictably than either process alone would suggest, because the interaction effects are not simply additive. The visibility of degradation is minimal. The output remains numerically precise.

The number remains. The meaning changes.

Structural Fragility in Production

The structural fragility of model drift does not reside in the technical reality of distributional shift. It resides in the organizational assumption that deployed models remain valid until proven otherwise.

This assumption inverts the burden of proof. In governance terms, the question is not whether a model has drifted. The question is whether the organization can demonstrate that a model has not drifted — and if it has, whether governance mechanisms activated in response.

In production environments, models operate continuously under conditions that differ from their validation environment in ways that are difficult to enumerate in advance. Sensor configurations change. Customer behavior evolves. Operational processes are modified. Upstream data pipelines introduce formatting changes, schema updates, or value range shifts. Each of these changes has the potential to induce drift — and none of them generates an automatic alert in the model’s output.

The fragility compounds through integration depth. A model that feeds output to downstream systems — dashboards, automated workflows, reporting layers, other models — propagates drifted inference through the organizational decision chain. By the time the degraded output reaches a decision-maker, it has been aggregated, reformatted, and stripped of any indicator that the underlying model has drifted. The decision-maker sees a number. The number looks the same as it always has. The reliability behind it has changed.

This is not a monitoring gap that can be solved by adding statistical tests. Monitoring drift is a technical activity. Governing drift — defining who has authority to act on detected drift, what thresholds trigger intervention, and what happens when a model is found to have degraded — is a governance obligation. The distinction is structural.

Organizational Failure Patterns

Organizations that operate production AI systems without drift governance exhibit specific, identifiable failure patterns. These patterns are not edge cases. They are structural consequences of treating model deployment as a one-time validation event rather than a continuous accountability obligation.

Monitoring without authority. Many organizations implement drift detection tooling without assigning decision authority. Statistical tests flag distributional shift. Dashboards display drift metrics. But no governance structure defines who reviews the alerts, who decides whether the drift is material, and who has authority to suspend the model or trigger recalibration. Monitoring without authority is observation without governance. The drift is detected. Nothing happens.

Threshold absence. Organizations frequently deploy models without defining what level of drift constitutes a governance event. Is a 5 percent deviation in input distribution material? Is a 10 percent drop in calibration accuracy significant? Without predefined thresholds, every drift observation becomes an ambiguous signal — subject to interpretation, deferral, and inaction. Threshold absence converts drift monitoring into drift observation. The organizational response is discretionary rather than structural.

Recalibration without governance. When drift is eventually addressed, the response is frequently treated as a technical task — a data science team retrains the model, validates it against updated data, and redeploys it. No governance review evaluates whether the recalibrated model’s output is consistent with the decisions that depended on its predecessor. No authorization process requires sign-off from the domain owners who rely on the model’s output. Recalibration without governance is model replacement without accountability.

Drift normalization. Over time, organizations that lack drift governance develop an implicit tolerance for degradation. Teams become accustomed to declining accuracy metrics. Workarounds emerge — manual adjustments, post-hoc corrections, intuitive discounting of model output. The model remains in production. The reliance on its output continues. But the institutional trust in the model has quietly migrated from the model’s demonstrated accuracy to the team’s accumulated corrections. The governance gap is filled by informal practice rather than structural architecture.

Cascading drift. In systems where multiple models interact — where one model’s output serves as another model’s input — drift in an upstream model propagates through the model chain. Each downstream model receives drifted input and produces further-degraded output. The cascading effect multiplies the governance challenge. A single instance of unmonitored drift in an upstream model can compromise the reliability of every downstream decision that depends on the chain.
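Scoping the impact of upstream drift requires a record of which models and systems consume which outputs. Assuming such a lineage map exists (the model and system names below are hypothetical), a breadth-first traversal lists everything that directly or transitively depends on a drifted model.

```python
from collections import deque


def downstream_of(consumers: dict[str, list[str]], drifted: str) -> list[str]:
    """Every model or system that directly or transitively consumes `drifted`'s output."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque([drifted])
    while queue:
        node = queue.popleft()
        for consumer in consumers.get(node, []):
            if consumer not in seen and consumer != drifted:  # tolerate cycles
                seen.add(consumer)
                order.append(consumer)
                queue.append(consumer)
    return order


consumers = {
    "demand_forecast": ["inventory_model", "pricing_model"],
    "inventory_model": ["replenishment_workflow"],
    "pricing_model": ["revenue_dashboard", "inventory_model"],
}
print(downstream_of(consumers, "demand_forecast"))
# ['inventory_model', 'pricing_model', 'replenishment_workflow', 'revenue_dashboard']
```

Every name returned is a place where drifted inference may already have shaped decisions, and therefore a candidate for the same governance review as the upstream model.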

These are governance failures — not tooling failures. The models performed as deployed. The organization failed to govern their continued validity.

AI Security Implications

Model drift creates security vulnerabilities that are structurally distinct from traditional attack surfaces. The vulnerability is not in the model’s architecture. It is in the organization’s inability to distinguish between valid and degraded output.

Adversarial actors who understand drift dynamics can exploit the gap between a model’s apparent precision and its actual reliability. A drifted model can be more susceptible to adversarial inputs, because the decision boundaries it learned during training no longer align with the distribution it encounters in production. Inputs that would have been correctly classified under the original distribution may be misclassified under drift. The adversary does not need to attack the model. The adversary needs only to operate in the space that drift has opened.

Drift also creates integrity vulnerabilities at the institutional level. When an organization cannot verify that its models produce reliable output — because no drift monitoring authority exists — it cannot verify the integrity of the decisions those models inform. Audit processes that assume model output is valid inherit the assumption that deployment validation remains current. If drift has degraded the model, the audit is structurally compromised — not because the audit was poorly designed, but because the model’s reliability was never continuously verified.

The attack surface grows with the depth of organizational reliance on model output. An organization that uses a single model for advisory purposes has limited exposure. An organization that embeds model output into automated workflows, operational standards, and institutional policy, as described in the output migration pattern, multiplies its drift-related security exposure at every integration point. Where drifted output has become an operational standard, drift becomes policy degradation without authorization.

Security architecture must treat drift governance as a control requirement. A model without monitored drift status is an unverified system operating with institutional authority.

Compliance and Accountability Implications

Compliance frameworks require that automated systems operate within validated parameters. Model drift structurally undermines that requirement.

When a model drifts beyond its validated operating range, every decision it informs operates outside the compliance boundary — even if the model was fully compliant at deployment. Compliance is not a point-in-time property. It is a continuous obligation. A model that was validated six months ago and has drifted since is not compliant. It is historically validated and currently unverified.

Auditability requires that organizations demonstrate not only that a model was valid when deployed, but that it remained valid throughout its operational life. Without drift monitoring records, the organization cannot reconstruct the model’s reliability trajectory. Audit reconstruction becomes impossible — not because records were destroyed, but because the monitoring data was never generated.

Proportionality obligations require that automated decisions be proportionate to their impact. A drifted model may produce disproportionate outcomes — flagging low-risk entities as high-risk, or failing to flag genuinely risky ones — without the organization recognizing the disproportion. Proportionality assessment requires current accuracy. Drift eliminates current accuracy without visible signal.

Documentation obligations extend beyond model architecture to operational performance. Organizations must document not only how a model works, but how it continues to work — including drift trajectory, recalibration history, and intervention records. Documentation that stops at deployment is documentation of intention, not documentation of performance.

Compliance is operational enforceability — not documentation. And enforceability requires that the organization continuously verify that its models operate within the parameters under which they were validated.

Production-Environment Reality

In production environments, drift is accelerated by conditions that do not exist in development and validation settings.

Transformation chains alter input data between its source and the model’s inference layer. Data pipelines apply formatting, normalization, encoding, and aggregation transformations. When upstream pipeline components change — through updates, bug fixes, or schema modifications — the data reaching the model shifts even when the underlying source data has not. The model experiences drift induced not by the world changing, but by the infrastructure changing. This infrastructure-induced drift is particularly difficult to detect because it does not correspond to any external event.
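Because infrastructure-induced drift has no external trigger, one practical control is to record an input contract at validation time (field names, types, plausible ranges) and check every batch against it before inference. A minimal sketch; `FieldContract`, `contract_violations`, and the sensor field names are hypothetical, and for brevity the schema is checked against the first row only. The example simulates an upstream change that starts delivering Fahrenheit into a Celsius field and a string into a numeric one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldContract:
    dtype: type
    min_value: float
    max_value: float


def contract_violations(batch: list[dict], contract: dict[str, FieldContract]) -> list[str]:
    """Compare a batch reaching the model with the input contract recorded at validation."""
    problems: list[str] = []
    if not batch:
        return problems
    problems += [f"missing field: {f}" for f in sorted(set(contract) - set(batch[0]))]
    problems += [f"unexpected field: {f}" for f in sorted(set(batch[0]) - set(contract))]
    for name, spec in contract.items():
        values = [row[name] for row in batch if name in row]
        if any(not isinstance(v, spec.dtype) for v in values):
            problems.append(f"type change: {name}")
            continue  # range checks are meaningless once the type has changed
        if values and (min(values) < spec.min_value or max(values) > spec.max_value):
            problems.append(f"range shift: {name} in [{min(values)}, {max(values)}]")
    return problems


contract = {
    "temperature_c": FieldContract(float, -40.0, 60.0),
    "vibration_mm_s": FieldContract(float, 0.0, 50.0),
}
batch = [
    {"temperature_c": 98.6, "vibration_mm_s": 3.2},
    {"temperature_c": 101.3, "vibration_mm_s": "4.1"},
]
print(contract_violations(batch, contract))
# ['range shift: temperature_c in [98.6, 101.3]', 'type change: vibration_mm_s']
```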

Hybrid workflows mix human and automated decisions in ways that alter the feedback data the model receives. When human reviewers override model output, the override patterns may not flow back into drift monitoring. When they do, the monitoring system cannot distinguish between drift-induced errors and legitimate edge cases. Hybrid workflows create ambiguity in drift signals — ambiguity that degrades monitoring reliability.

Model versioning introduces temporal complexity. When a model is updated, all downstream systems that consumed the previous version’s output are now operating on a different statistical basis. Version transitions that are not governed create discontinuities in decision consistency. Output that was reliable under version N may not be comparable to output under version N+1 — but the downstream consumers treat both as equivalent.
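One way to stop downstream consumers from silently treating version N and version N+1 output as equivalent is to carry the producing model version with every output and refuse cross-version comparisons outside a governed transition. A minimal sketch; `ScoredOutput`, `score_change`, and the example identifiers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredOutput:
    entity_id: str
    score: float
    model_version: str  # version of the model that produced this score


def score_change(old: ScoredOutput, new: ScoredOutput) -> float:
    """Change in score for one entity, refusing to mix model versions."""
    if old.entity_id != new.entity_id:
        raise ValueError("scores belong to different entities")
    if old.model_version != new.model_version:
        raise ValueError(
            f"{old.model_version} and {new.model_version} outputs are on different "
            "statistical bases; compare them only under a governed version transition"
        )
    return new.score - old.score


before = ScoredOutput("asset-17", 0.42, "v7")
after = ScoredOutput("asset-17", 0.61, "v8")
try:
    score_change(before, after)
except ValueError as err:
    print(err)
```

Failing loudly pushes the version discontinuity back to a place where someone must decide how the two versions relate, instead of letting a dashboard present the difference as a real change in the underlying entity.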

Vendor policy evolution compounds the challenge for organizations using externally provided models. When a vendor updates its model — changes architecture, retrains on new data, adjusts output calibration — the organization’s operational systems experience drift without internal cause. The vendor’s model changed. The organization’s governance did not receive notification. The drift is externally imposed and internally invisible.
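Because a vendor may change its model without notice, one detection approach is to keep a fixed probe set, record the vendor model's outputs on it when the integration is validated, and re-score the probes on a schedule. The sketch assumes the vendor client can be wrapped as a plain function from input to score; `probe_vendor_model`, the probe identifiers, the scores, and the tolerance are hypothetical.

```python
import math
from typing import Callable, Sequence


def probe_vendor_model(
    predict: Callable[[str], float],
    probes: Sequence[str],
    baseline: Sequence[float],
    tolerance: float = 0.02,
) -> list[tuple[str, float, float]]:
    """Re-score a fixed probe set and report probes whose output moved beyond tolerance.

    `predict` stands in for whatever client calls the vendor model (hypothetical).
    """
    changed = []
    for probe, expected in zip(probes, baseline):
        actual = predict(probe)
        if not math.isclose(actual, expected, abs_tol=tolerance):
            changed.append((probe, expected, actual))
    return changed


probes = ["probe-001", "probe-002", "probe-003"]
baseline = [0.91, 0.12, 0.55]  # outputs recorded when the integration was validated
silently_updated = {"probe-001": 0.90, "probe-002": 0.31, "probe-003": 0.55}
print(probe_vendor_model(silently_updated.get, probes, baseline))
# [('probe-002', 0.12, 0.31)]
```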

Monitoring obligations in production environments must extend beyond model performance metrics to include distributional monitoring, calibration verification, feature relevance tracking, and integration-point validation. Each of these dimensions requires not only technical instrumentation but governance authority — someone who is accountable for reviewing the signals and authorized to act when thresholds are exceeded.

Governance Architecture for Model Drift

Governing model drift requires structural controls that operate continuously throughout the model’s operational lifecycle. The following architecture components represent the structural minimum for organizations operating production AI systems.

Drift monitoring authority. Every deployed model must have an assigned monitoring authority — a named role or function accountable for detecting drift and initiating governance response. Monitoring authority includes the mandate to access drift metrics, the competence to evaluate their significance, and the authorization to trigger escalation. Monitoring without authority is instrumentation without governance.
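Monitoring authority can be made checkable by recording it in the model registry and treating a missing entry as a governance defect. A minimal sketch; the field names, the role names, and the idea of pairing each owner with an escalation contact are assumptions for illustration, and a real registry would hold far more metadata.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelRecord:
    model_id: str
    monitoring_owner: Optional[str]    # named role accountable for reviewing drift signals
    escalation_contact: Optional[str]  # where that role sends drift it cannot resolve


def ungoverned(registry: list[ModelRecord]) -> list[str]:
    """Deployed models that lack an accountable monitoring owner or an escalation route."""
    return [
        r.model_id
        for r in registry
        if not r.monitoring_owner or not r.escalation_contact
    ]


registry = [
    ModelRecord("credit_risk_v3", "model_risk_lead", "chief_risk_officer"),
    ModelRecord("churn_model", None, "analytics_director"),
]
print(ungoverned(registry))  # ['churn_model']
```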

Threshold architecture. For every deployed model, the organization must define quantitative thresholds for each drift dimension: distributional deviation, calibration degradation, feature relevance change, and output consistency shift. Thresholds must specify what governance action is triggered at each level — from review to recalibration to suspension. Undefined thresholds produce undefined responses.
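A threshold architecture can be expressed as data: for each drift dimension, the level at which review, recalibration, and suspension are triggered. The sketch uses the four dimensions named above; the numeric values are placeholders for illustration, not recommended limits, and the identifiers are hypothetical.

```python
from enum import IntEnum


class Action(IntEnum):
    NONE = 0
    REVIEW = 1
    RECALIBRATE = 2
    SUSPEND = 3


# Placeholder policy: (review, recalibrate, suspend) trigger levels per drift dimension.
THRESHOLDS: dict[str, tuple[float, float, float]] = {
    "distributional_deviation": (0.10, 0.25, 0.50),
    "calibration_degradation": (0.03, 0.08, 0.15),
    "feature_relevance_change": (0.20, 0.40, 0.60),
    "output_consistency_shift": (0.05, 0.10, 0.20),
}


def required_action(metrics: dict[str, float]) -> Action:
    """Most severe governance action triggered by any monitored dimension.

    A metric with no defined threshold raises KeyError rather than passing silently.
    """
    worst = Action.NONE
    for name, value in metrics.items():
        review, recalibrate, suspend = THRESHOLDS[name]
        if value >= suspend:
            level = Action.SUSPEND
        elif value >= recalibrate:
            level = Action.RECALIBRATE
        elif value >= review:
            level = Action.REVIEW
        else:
            level = Action.NONE
        worst = max(worst, level)
    return worst


print(required_action({"distributional_deviation": 0.12, "calibration_degradation": 0.09}).name)
# RECALIBRATE
```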

Decision authority for intervention. When drift is detected and thresholds are exceeded, the governance architecture must define who has authority to intervene: who can mandate recalibration, who can suspend the model, who can authorize continued operation under degraded conditions. Decision authority must be explicit. If no one has authority to suspend a drifted model, no one governs its continued operation.
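Decision authority can be recorded as an explicit mapping from each intervention to the roles permitted to take it. This also makes gaps detectable: an intervention with no authorized role is one nobody governs. A minimal sketch; the intervention and role names are hypothetical.

```python
REQUIRED_INTERVENTIONS = ("mandate_recalibration", "suspend_model", "accept_degraded_operation")


def authority_gaps(authority: dict[str, set[str]]) -> list[str]:
    """Interventions that no role is authorized to take."""
    return [action for action in REQUIRED_INTERVENTIONS if not authority.get(action)]


def is_authorized(authority: dict[str, set[str]], action: str, role: str) -> bool:
    """Deny by default: a role may act only where it has been explicitly granted."""
    return role in authority.get(action, set())


authority = {
    "mandate_recalibration": {"model_risk_lead", "domain_owner"},
    "accept_degraded_operation": {"operations_director"},
}
print(authority_gaps(authority))  # ['suspend_model']
print(is_authorized(authority, "mandate_recalibration", "domain_owner"))  # True
```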

Escalation paths. When drift status is ambiguous — when metrics suggest degradation but do not clearly exceed thresholds — escalation paths must route the assessment to governance review. Ambiguity must trigger escalation, not deferral. A drift signal that is ignored because it is ambiguous is a governance gap.
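The rule that ambiguity escalates rather than defers can be encoded by giving each threshold an ambiguity band just below it. A minimal sketch; the `Route` values, the band width, and the function name are hypothetical.

```python
from enum import Enum


class Route(Enum):
    CLEAR = "clear"          # below the ambiguity band
    ESCALATE = "escalate"    # inside the band: route to governance review
    INTERVENE = "intervene"  # threshold met or exceeded: apply the defined action


def route_signal(value: float, threshold: float, ambiguity_margin: float) -> Route:
    """Classify a drift metric; there is deliberately no 'defer' outcome."""
    if value >= threshold:
        return Route.INTERVENE
    if value >= threshold - ambiguity_margin:
        return Route.ESCALATE
    return Route.CLEAR


for observed in (0.12, 0.22, 0.31):
    print(observed, route_signal(observed, threshold=0.25, ambiguity_margin=0.05).value)
```

A value inside the band can only be cleared by someone with review authority, never by inaction.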

Recalibration governance. Model recalibration and retraining must operate under governance authorization. Recalibration changes the statistical basis of every decision the model informs. It requires not only technical validation but governance sign-off from the domain owners and decision authorities who depend on the model’s output. Recalibration without authorization is model replacement without accountability.
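Recalibration governance can be enforced as a deployment gate: a retrained model is promotable only when technical validation has passed and every domain owner registered as depending on its output has signed off. A minimal sketch; `RecalibrationRequest` and the owner names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RecalibrationRequest:
    model_id: str
    new_version: str
    validation_passed: bool
    required_signoffs: frozenset[str]  # domain owners who depend on the model's output
    signoffs: set[str] = field(default_factory=set)

    def sign(self, owner: str) -> None:
        if owner not in self.required_signoffs:
            raise PermissionError(f"{owner} is not a registered approver for {self.model_id}")
        self.signoffs.add(owner)

    def may_deploy(self) -> bool:
        """Technical validation alone is not enough; every dependent owner must sign off."""
        return self.validation_passed and self.required_signoffs <= self.signoffs


request = RecalibrationRequest(
    model_id="credit_risk",
    new_version="v4",
    validation_passed=True,
    required_signoffs=frozenset({"credit_operations", "collections"}),
)
request.sign("credit_operations")
print(request.may_deploy())  # False: collections has not signed
request.sign("collections")
print(request.may_deploy())  # True
```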

Suspension and termination authority. The organization must retain the ability to suspend a drifted model without cascading operational failure. If suspending a model would disrupt critical operations, the organization has exceeded its governance capacity — it has built operational dependency without revocability. Dependency without suspension authority is structural risk.
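Suspension authority is only real if callers keep working when the model is switched off. A minimal sketch, assuming each model is wrapped so that a defined fallback (for example a rule-based score or a manual-review route) takes over on suspension; `GovernedModel` is a hypothetical name, and the lambdas stand in for real scoring functions.

```python
from typing import Callable, Optional

Scorer = Callable[[dict], float]


class GovernedModel:
    """Wraps a scoring function so it can be suspended without breaking its callers."""

    def __init__(self, predict: Scorer, fallback: Optional[Scorer] = None) -> None:
        self._predict = predict
        self._fallback = fallback  # e.g. a rule-based score or a manual-review route
        self.suspended = False

    def can_suspend(self) -> bool:
        return self._fallback is not None

    def suspend(self) -> None:
        if not self.can_suspend():
            raise RuntimeError("no fallback defined: suspending would halt dependent operations")
        self.suspended = True

    def resume(self) -> None:
        self.suspended = False

    def __call__(self, features: dict) -> float:
        if self.suspended:
            return self._fallback(features)
        return self._predict(features)


model = GovernedModel(predict=lambda f: 0.87, fallback=lambda f: 0.50)
print(model({"asset": "pump-4"}))  # 0.87
model.suspend()
print(model({"asset": "pump-4"}))  # 0.5
```

Running `can_suspend()` at deployment time, rather than waiting for the first suspension attempt, surfaces dependency without revocability before it is needed.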

Documentation and traceability. Drift monitoring records, threshold exceedance events, intervention decisions, recalibration authorizations, and suspension actions must be documented and traceable. The organization must be able to reconstruct the drift history of any model and demonstrate that governance mechanisms operated throughout its lifecycle.
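Traceability is easier to defend when the drift record is tamper-evident. One common pattern, sketched here as an assumption rather than a prescribed format, is an append-only log in which each entry includes the SHA-256 hash of the previous one, so that editing a past entry breaks verification. Class names, field names, and the timestamps in the example are hypothetical.

```python
import hashlib
import json


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so identical content always hashes identically.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DriftAuditLog:
    """Append-only record of drift events, chained by SHA-256 hashes."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, model_id: str, at: str, event: str, detail: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"model_id": model_id, "at": at, "event": event,
                "detail": dict(detail), "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; an edited entry or a removed non-final entry fails."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def history(self, model_id: str) -> list[tuple[str, str]]:
        """Reconstruct the drift history of one model as (timestamp, event) pairs."""
        return [(e["at"], e["event"]) for e in self.entries if e["model_id"] == model_id]


log = DriftAuditLog()
log.append("credit_risk", "2024-05-02T09:00Z", "threshold_exceeded", {"psi": 0.31})
log.append("credit_risk", "2024-05-02T11:30Z", "recalibration_authorized", {"by": "model_risk_lead"})
print(log.verify())  # True
log.entries[0]["detail"]["psi"] = 0.05  # a retroactive edit
print(log.verify())  # False
```

Hash chaining detects edits and deletions inside the log, but not truncation of the newest entries; anchoring the latest hash somewhere outside the log closes that gap.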

Continuous recalibration cycles. Drift governance is not event-driven. It is continuous. Models require periodic recalibration assessment regardless of whether thresholds have been exceeded. Scheduled review cycles prevent drift from accumulating below threshold levels until it compounds into material degradation.
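Scheduled review can be checked mechanically: each model gets a review cadence, and anything past its date is flagged whether or not a threshold has fired. A minimal sketch; the model names, dates, and cadences are hypothetical.

```python
from datetime import date, timedelta


def reviews_overdue(
    last_reviewed: dict[str, date],
    cadence_days: dict[str, int],
    today: date,
) -> list[str]:
    """Models whose scheduled recalibration assessment is past due, regardless of drift metrics."""
    return sorted(
        model_id
        for model_id, reviewed in last_reviewed.items()
        if today - reviewed > timedelta(days=cadence_days[model_id])
    )


print(reviews_overdue(
    {"credit_risk": date(2024, 1, 1), "churn_model": date(2024, 3, 1)},
    {"credit_risk": 90, "churn_model": 90},
    today=date(2024, 4, 15),
))  # ['credit_risk']
```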

This framework does not eliminate drift. It prevents drift from becoming institutional failure.

Doctrine Closing

Model drift is structurally inevitable. Every model deployed into a production environment will experience distributional shift, calibration degradation, and feature instability. The technical reality is not the failure.

The failure is organizational. No monitoring authority assigned. No thresholds defined. No decision authority established. No suspension capability maintained.

Model drift is not a tooling problem. It is a governance architecture problem. Governance does not make models permanent. It makes degradation visible, accountable, and survivable.