Governance Architecture: Who Has the Right to Decide?

Every AI system in production makes decisions. It classifies inputs. It scores risks. It generates recommendations. It routes workflows. It approves or denies requests. Each output constitutes a decision — or directly shapes one.

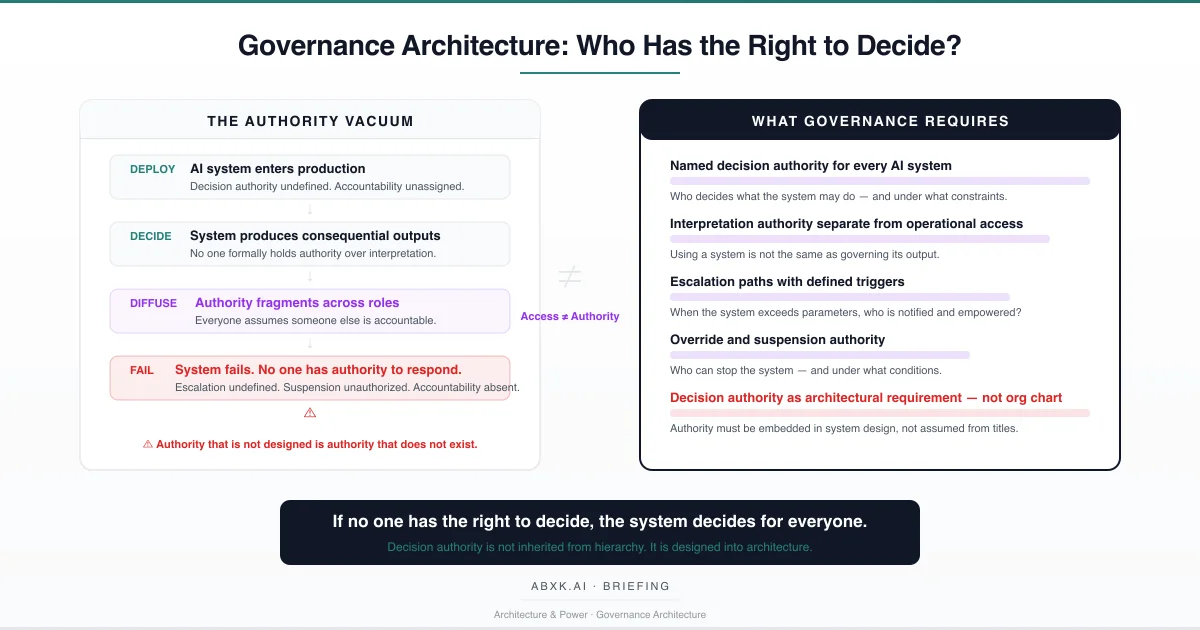

The structural question is not whether the system can make these decisions. It is who has the authority to govern them.

In most production AI environments, this question is unanswered. The system was deployed by an engineering team. It is operated by a platform team. Its outputs are consumed by business users. Its compliance implications are assessed by a legal team. Its security posture is managed by an information security team. Each team holds a piece of the system’s operational surface. No team holds decision authority over what the system is permitted to decide, how its outputs are interpreted, or who is accountable when those outputs produce harm.

This is not an organizational communication problem. It is a governance architecture failure.

Decision authority in AI systems does not emerge from organizational hierarchy. It does not transfer from the business sponsor who requested the system. It does not reside with the team that trained the model. It does not follow from access permissions or operational responsibility.

Decision authority must be designed. It must be explicitly defined, structurally embedded, and continuously maintained as the system evolves. Without designed authority, the system operates inside an authority vacuum — and in that vacuum, the system itself becomes the decision-maker.

AI Governance exists to define who decides, under what authority, within what constraints, and with what accountability. Without that architecture, AI Risk Management, AI Security, and AI Compliance operate without a structural foundation.

Decision authority does not fail at the operational layer. It fails at the architecture layer — where no one defined who holds the right to decide.

Technical Foundations: Why AI Systems Create Authority Ambiguity

AI systems produce outputs through processes that distribute decision logic across computational layers in ways that defy traditional authority structures. Understanding this distribution reveals why authority must be explicitly designed rather than organizationally assumed.

Traditional decision systems operate through defined rules. A business rule engine executes logic that was authored by a known person, reviewed by a known authority, and approved through a documented process. The decision authority is embedded in the authorship chain. When the rule produces an incorrect outcome, accountability traces back through that chain.

AI systems operate differently. The decision logic is not authored. It is learned — derived from data through optimization processes that produce weight configurations reflecting statistical patterns across training distributions. No individual authored the decision boundary. No committee approved the specific threshold. The model’s decision logic emerged from data. Authority over that logic cannot be attributed to any person because no person defined it.

This creates a structural authority gap. In rule-based systems, the person who writes the rule has decision authority over it. In AI systems, the model’s decision logic has no author. The data scientists who trained the model defined the architecture and training process. They did not define the specific decision boundaries the model learned. The business stakeholders who provided requirements defined the desired outcome. They did not define how the model would achieve it. The gap between intent and implementation is filled by statistical optimization — a process that produces effective decisions but not accountable ones.

Ensemble methods compound the authority problem. When multiple models contribute to a single output through voting, averaging, or cascading logic, the decision is distributed across models that may have been trained by different teams, on different data, at different times. No single model is the decision-maker. No single team is the authority. The decision emerges from aggregation. Authority over that aggregation is structurally undefined.
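One way to make aggregation authority concrete is to refuse to aggregate without a named owner, and to record each member's contribution next to the team that owns it. The sketch below is illustrative only. `MemberScore`, `aggregate`, the weighted-average rule, and every identifier in it are assumptions introduced here, not a description of any particular system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemberScore:
    model_id: str    # hypothetical model identifier
    owner_team: str  # hypothetical team that trained the model
    score: float     # member's score for the positive class, in [0, 1]
    weight: float    # member's weight in the aggregation


def aggregate(members, threshold, aggregation_owner):
    """Weighted-average ensemble that records who owns the aggregation."""
    if not aggregation_owner:
        raise ValueError("aggregation has no named authority")
    if not members:
        raise ValueError("no member scores to aggregate")
    total_weight = sum(m.weight for m in members)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    contributions = [
        {
            "model_id": m.model_id,
            "owner_team": m.owner_team,
            # Portion of the combined score that this member supplied.
            "contribution": m.score * m.weight / total_weight,
        }
        for m in members
    ]
    combined = sum(c["contribution"] for c in contributions)
    return {
        "combined_score": combined,
        "decision": combined >= threshold,
        "aggregation_owner": aggregation_owner,
        "contributions": contributions,
    }
```

The returned record does not make any single model the decision-maker. It only ensures that the aggregation step, where the decision actually forms, carries a named owner and a per-team trace.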

Continuous learning and adaptation introduce temporal authority erosion. A model deployed with a specific decision profile does not keep that profile if it is updated online or periodically retrained on new data. The decision boundaries shift. The behavior that was evaluated at deployment no longer reflects the behavior in production. The authority that approved the original deployment approved a system that no longer exists in its original form. No authority approved the current behavior because no governance process evaluated the drift.

Calibration drift and distribution shift produce outputs whose statistical meaning changes without any visible signal. A confidence score of 0.85 may have corresponded to roughly 85 percent observed accuracy at deployment and to a substantially lower rate six months later. The authority that established threshold policies based on deployment-time calibration is governing based on assumptions that may no longer hold. The authority’s decisions remain in force. The foundation for those decisions has shifted.
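The shift in what a score means can be made visible by comparing, for one score bin, the mean score against the observed rate of positive outcomes. The sketch below assumes that labeled outcomes eventually become available for production predictions. The function names, the 0.8 to 0.9 bin, the tolerance, and the data are all made up for illustration.

```python
def bin_calibration(scores, outcomes, low, high):
    """Return (mean score, observed positive rate, count) for low <= score < high."""
    pairs = [(s, o) for s, o in zip(scores, outcomes) if low <= s < high]
    if not pairs:
        return None
    mean_score = sum(s for s, _ in pairs) / len(pairs)
    observed = sum(o for _, o in pairs) / len(pairs)
    return mean_score, observed, len(pairs)


def calibration_gap(scores, outcomes, low=0.8, high=0.9):
    """Mean score minus observed rate in the bin; None if the bin is empty."""
    stats = bin_calibration(scores, outcomes, low, high)
    if stats is None:
        return None
    mean_score, observed, _ = stats
    return mean_score - observed


def needs_threshold_review(gap, tolerance):
    """True when the bin is off by more than the tolerance the threshold authority set."""
    return gap is not None and abs(gap) > tolerance


# Made-up data for illustration: twenty predictions scored 0.85 in each period.
deploy_scores, deploy_outcomes = [0.85] * 20, [1] * 17 + [0] * 3
later_scores, later_outcomes = [0.85] * 20, [1] * 12 + [0] * 8
```

In this toy data the score is unchanged between periods while the observed rate falls from 17 of 20 to 12 of 20. A check of this kind gives the threshold authority a defined signal for review. It does not decide who that authority is.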

The technical pattern is consistent: AI systems distribute decision logic across computational, temporal, and organizational dimensions in ways that make authority attribution structurally impossible without explicit governance architecture.

Structural Fragility: Where Authority Fails

Authority structures for AI systems rest on assumptions that appear functional at deployment and degrade systematically under production conditions.

The first assumption is hierarchical sufficiency. Organizations assume that existing management hierarchy provides adequate decision authority for AI systems. A vice president who oversees a business unit assumes authority over AI systems deployed within that unit. But the system’s decision surface may span multiple business units, multiple data domains, and multiple compliance jurisdictions. Hierarchical authority does not map to system scope. The authority is real. The coverage is insufficient.

The second assumption is role-based competence. Organizations assume that the team operating the system has the competence to govern its decisions. Platform engineers who manage infrastructure have operational expertise but may lack domain expertise to evaluate whether AI outputs are appropriate. Business users who consume outputs have domain expertise but may lack technical expertise to assess whether the model is operating within its valid range. Competence is distributed. Authority that requires both technical and domain judgment is assigned to neither.

The third assumption is accountability inheritance. Organizations assume that deployment approval creates ongoing accountability. The executive who approved the AI system’s deployment is accountable for its existence. But accountability for ongoing decision quality, output interpretation, threshold management, and operational drift requires continuous governance engagement — not one-time approval. The deployment authority approved the system at a point in time. The system’s decisions continue indefinitely. The accountability gap widens with each production day.

The fourth assumption is implicit escalation. Organizations assume that when AI systems produce problematic outputs, someone will escalate. In practice, escalation requires defined triggers, defined escalation paths, and defined authority to act on escalation. Without these structures, problematic outputs are either accepted as normal variance, worked around through informal processes, or ignored because no one has the authority to stop the system.

The authority remains. The conditions it was designed for do not. The gap between authority and operational reality widens.

Organizational Failure Patterns

Organizations do not deliberately create authority vacuums. They deploy AI systems within existing governance frameworks that were designed for human decision processes — and discover that those frameworks do not map to algorithmic decision-making.

The most pervasive failure pattern is authority diffusion. Multiple teams share responsibility for different aspects of the AI system. Engineering owns the model. Operations owns the infrastructure. Business owns the use case. Compliance owns the risk assessment. Security owns the threat surface. Each team governs its domain. No team governs the system’s decisions as an integrated whole. When the system produces a harmful output, each team can demonstrate that it fulfilled its specific responsibility. No team can demonstrate that it held authority over the decision that caused harm.

The second failure pattern is authority by proximity. The person closest to the AI system’s outputs becomes the de facto decision authority — not through formal assignment but through operational proximity. An analyst who reviews AI-generated risk scores becomes the authority on those scores. A customer service representative who acts on AI recommendations becomes the authority on those recommendations. These individuals have neither the formal authority nor the structural support to govern these decisions. They govern by default because no one else does.

The third failure pattern is authority without capability. An executive is assigned formal authority over an AI system through a governance charter or risk register. The assignment is documented. The executive lacks the technical understanding to evaluate model behavior, the operational visibility to monitor output quality, and the structural mechanisms to intervene when the system underperforms. Authority exists on paper. Governance capability does not exist in practice.

The fourth failure pattern is authority avoidance. When AI system decisions are consequential and potentially controversial, individuals and teams actively avoid holding decision authority. Engineering defers to business. Business defers to compliance. Compliance defers to legal. Legal defers to the board. The deferral chain produces a governance framework where everyone has input and no one has authority. The system operates in a governance vacuum sustained by institutional risk aversion.

These are governance failures — not leadership failures. The individuals involved act rationally within structures that do not provide clear authority. The structure is the failure.

AI Security Implications

Undefined decision authority creates security exposure that extends beyond technical vulnerability into structural defenselessness.

When no one holds authority over an AI system’s decisions, no one holds authority to respond when those decisions are compromised. An adversarial attack that manipulates model inputs to produce controlled outputs succeeds not only because the technical defense was insufficient but because no one had the authority to recognize the attack, assess its impact, and suspend the system. The security incident escalates because the authority to act does not exist at the speed the incident requires.

Authority fragmentation creates response paralysis. When an AI system exhibits behavior consistent with adversarial manipulation, multiple teams must coordinate to assess and respond. Without clear decision authority, coordination becomes negotiation. Each team evaluates the incident from its perspective. Engineering assesses technical indicators. Security assesses threat intelligence. Operations assesses business impact. No one has the authority to override the others and make a binding decision. The system continues to operate while the coordination process unfolds.

Insider threat models must account for authority gaps. An individual who understands that no one holds clear authority over an AI system can exploit that vacuum — modifying model behavior, adjusting thresholds, or altering data inputs with reduced probability of detection. The absence of authority means the absence of monitoring for authority violations. No one is watching because no one has been assigned to watch.

Where vendor defaults define system behavior and no organizational authority can override them, the vendor’s configuration decisions become security-relevant decisions made outside the organization’s security governance. Decision authority that extends to vendor configuration is essential. Without it, the organization’s security posture is partially defined by entities with no security accountability to the organization.

Compliance and Accountability Implications

Compliance frameworks require organizations to demonstrate accountability for automated decisions. Accountability requires authority. Without defined authority, compliance produces documentation that describes processes but cannot demonstrate who is responsible for outcomes.

Accountability for AI decisions requires named authority at specific decision points: who approved the system’s deployment, who governs its ongoing operation, who is responsible for output quality, who has authority to modify thresholds, who can override outputs, and who can suspend the system. When these authorities are undefined, accountability becomes retrospective — assigned after an incident rather than embedded in the governance architecture.

Auditability requires authority traceability. An audit that examines an AI decision must be able to trace not only what data entered the system and what output was produced but who held authority over that decision at the time it was made. Without authority traceability, the audit identifies what happened but cannot identify who was responsible. The audit is complete in description. It is silent on accountability.
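Authority traceability can be sketched as a lookup over dated authority assignments: given a decision timestamp, return who held each governance role at that moment. The `Assignment` record, the role names, and the half-open interval convention are assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Assignment:
    role: str                 # hypothetical role name, e.g. "threshold"
    holder: str               # named individual or role holder
    start: datetime           # assignment takes effect at this instant
    end: Optional[datetime]   # None means the assignment is still in force


def authority_at(assignments, role, when):
    """Return the holder of `role` at time `when`, or None if nobody held it."""
    for a in assignments:
        if a.role == role and a.start <= when and (a.end is None or when < a.end):
            return a.holder
    return None


def trace_decision(decision_time, assignments, roles):
    """Resolve each role at decision time and list the roles nobody held."""
    resolved = {role: authority_at(assignments, role, decision_time) for role in roles}
    unattributed = [role for role, holder in resolved.items() if holder is None]
    return resolved, unattributed
```

An audit that calls `trace_decision` and receives a non-empty `unattributed` list has found a decision made while no one held the corresponding authority.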

Proportionality requires authority judgment. Determining whether an AI system’s decisions are proportionate to their purpose and impact requires someone with the authority and competence to make that assessment. Without defined authority, proportionality assessment becomes a theoretical exercise — described in policy but never operationally enforced because no one holds the authority to enforce it.

Compliance is operational enforceability — not documentation. Authority that exists in governance charters but not in operational architecture produces compliance records that describe an accountability structure that does not function.

Production-Environment Reality

Production environments expose authority failures through operational patterns that accelerate with system complexity and organizational scale.

Transformation chains that process AI outputs through multiple systems distribute decision responsibility across system boundaries. The AI system produces an output. A business rule modifies it. A workflow routes it. A human acts on it. Decision authority for the final action is distributed across all these steps. No single authority governs the full chain.

Hybrid workflows that combine AI outputs with human judgment create accountability ambiguity that authority frameworks must explicitly address. When a human modifies an AI output, who holds authority over the combined decision — the human who modified it, the governance authority over the AI system, or both? Without explicit definition, the answer defaults to neither.

Model versioning creates temporal authority gaps. An authority who approved Model Version 1 may not have evaluated Model Version 2. The authority’s approval applies to a system state that no longer exists. Governance architecture must define whether authority approval transfers across model versions or requires reauthorization.
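The choice between transferable approval and reauthorization can be written down as an explicit policy flag rather than left implicit. The sketch below is a minimal illustration. `Approval`, `find_approval`, and the `transfers_across_versions` flag are hypothetical names, and the version strings are placeholders.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Approval:
    system_id: str      # hypothetical system identifier
    model_version: str  # version the authority actually evaluated
    approved_by: str    # named authority who gave the approval


def find_approval(approvals, system_id, model_version,
                  transfers_across_versions=False) -> Optional[Approval]:
    """Return the approval covering this version, or None if reauthorization is needed."""
    for a in approvals:
        if a.system_id != system_id:
            continue
        # Under a transfer policy any approval for the system counts;
        # otherwise only an approval for this exact version does.
        if transfers_across_versions or a.model_version == model_version:
            return a
    return None
```

With the flag left at its default, a deployment of Version 2 finds no approval and must go back to the authority. Setting the flag to true is itself a governance decision and should be made by whoever holds that authority.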

Monitoring obligations require authority to act on monitoring findings. Organizations that monitor AI systems for drift, bias, and performance degradation must define who has the authority to act when monitoring detects problems. Monitoring without response authority produces awareness without governance — the organization knows the system is degrading but no one has the structural authority to intervene.
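Response authority can be expressed as a routing table from monitoring finding types to the role allowed to act and the action that role may take alone. The sketch below is one possible shape. The finding types, role names, and actions are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResponseRoute:
    authority: str         # hypothetical role allowed to act on the finding
    permitted_action: str  # hypothetical action that role may take without consensus


# Illustrative routing table; every entry here is an assumption.
ROUTES = {
    "drift": ResponseRoute("threshold-authority", "review_thresholds"),
    "bias": ResponseRoute("decision-authority", "restrict_decision_scope"),
    "performance_degradation": ResponseRoute("suspension-authority", "suspend_system"),
}


def route_finding(finding_type, routes=ROUTES):
    """Map a monitoring finding to its response authority, if one is defined."""
    route = routes.get(finding_type)
    if route is None:
        return {"finding": finding_type, "status": "no_response_authority"}
    return {
        "finding": finding_type,
        "status": "routed",
        "authority": route.authority,
        "action": route.permitted_action,
    }


def unrouted(monitored_finding_types, routes=ROUTES):
    """List finding types that are monitored but have no one authorized to respond."""
    return sorted(t for t in monitored_finding_types if t not in routes)
```

Running `unrouted` over everything the monitoring stack can emit shows, before any incident, which findings would produce awareness without governance.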

Integration pressure compresses authority review cycles. When new AI capabilities must be deployed rapidly, authority assignment is deferred or simplified. The system enters production with provisional authority structures that are rarely formalized retroactively.

Vendor policy evolution affects the scope of organizational authority without organizational control. When vendors modify system behavior through updates, the organizational authority’s governance assumptions may no longer reflect system reality. Authority must extend to vendor change management. Otherwise the organization accepts that vendor changes operate outside governance.

Governance Architecture for Decision Authority

Decision authority governance requires explicit architectural design — not organizational assumption.

Named decision authority. Every AI system must have a named individual or role with explicit authority over the system’s decision scope. This authority governs what the system is permitted to decide, under what constraints, and with what accountability.

Interpretation authority. A defined authority must govern how the system’s outputs are interpreted. This is separate from operational access. Using the system does not confer authority to interpret its outputs for consequential decisions.

Threshold authority. The individual or role with authority to set, modify, and review decision thresholds must be explicitly defined. Threshold decisions are governance decisions. They must be made by governance authority, not left to engineering convenience.

Override authority. Defined authority must exist to override system outputs when they conflict with domain judgment, governance policy, or operational reality. Override authority must be accessible at operational speed — not deferred to committee review.

Escalation architecture. Triggers for escalation must be defined, escalation paths must be documented, and escalation authority must be assigned. Escalation that depends on individual initiative rather than structural design does not function under pressure.

Suspension and termination authority. Named authority must exist to suspend or terminate AI system operation when governance conditions are violated. This authority must be exercisable without requiring consensus from all stakeholders.

Accountability assignment. For every AI decision that produces consequential outcomes, the governance architecture must identify who is accountable — not retrospectively, but prospectively. Accountability assigned after failure is punishment. Accountability assigned before operation is governance.

Authority validation. Decision authority must be periodically validated — confirming that assigned authorities still hold the competence, visibility, and structural mechanisms required to exercise their authority effectively.

This framework does not eliminate authority gaps. It prevents authority gaps from becoming institutional failure.
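The authorities listed above can be held in a per-system registry and checked mechanically for gaps. The sketch below is a minimal illustration under stated assumptions: the role keys mirror the list above, while `AuthorityEntry`, `registry_gaps`, and the 180-day validation window are invented for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# One key per authority described above; the key names are assumptions.
REQUIRED_ROLES = (
    "decision", "interpretation", "threshold", "override",
    "escalation", "suspension", "accountability",
)


@dataclass(frozen=True)
class AuthorityEntry:
    holder: str           # named individual or role
    last_validated: date  # date of the most recent authority validation


def registry_gaps(registry, today, max_age=timedelta(days=180)):
    """Return (missing roles, roles whose validation is older than max_age)."""
    missing = [
        r for r in REQUIRED_ROLES
        if r not in registry or not registry[r].holder
    ]
    stale = [
        r for r in REQUIRED_ROLES
        if r not in missing and today - registry[r].last_validated > max_age
    ]
    return missing, stale
```

A check like this cannot judge whether a holder has the competence or visibility the role demands. It only shows where no one is named, or where no one has confirmed the assignment recently.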

Doctrine Closing

Decision authority is not an organizational chart problem. It is a governance architecture problem.

Organizations that deploy AI systems without designed decision authority deploy systems that govern themselves. The system decides. The organization reacts. Accountability is assigned after the fact because it was never assigned before the system began.

Governance does not make AI decisions correct. It makes them attributable, reviewable, and reversible — because someone holds the authority and the accountability to ensure they are.

If no one has the right to decide, the system decides for everyone. Governance architecture exists to ensure that does not happen by default.