Decision Flow Architecture in Complex AI Systems

Complex AI systems do not produce isolated outputs. They execute decision flows — sequences of interdependent decisions where each output triggers, constrains, or amplifies the decisions that follow. A classification feeds a scoring model. A score triggers a routing decision. A routing decision initiates an automated action. An automated action modifies operational state.

Each step in this sequence is individually defensible. The classification is statistically sound. The scoring model is properly calibrated. The routing logic follows defined rules. The automated action executes its specification. In isolation, each component functions correctly.

The structural risk is not in any single component. It is in the flow.

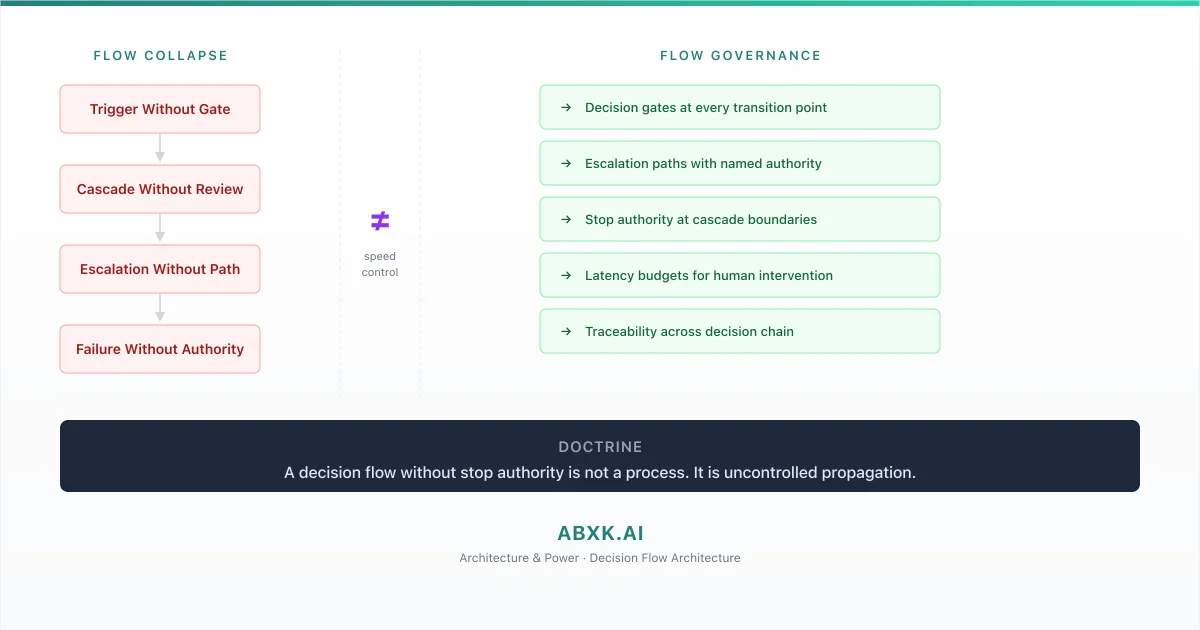

When a decision flow lacks governance architecture — defined gates, escalation paths, stop authority, and intervention mechanisms — it operates as an uncontrolled propagation chain. An error at any stage does not remain at that stage. It cascades. By the time the error becomes visible through operational impact, it has been amplified through every subsequent decision layer. The original misclassification has become institutional action.

Organizations that deploy complex AI systems without decision flow architecture deploy propagation infrastructure without control mechanisms. This is not an engineering oversight. It is a governance architecture failure.

AI Governance exists to define where decisions may propagate, under what authority, with what constraints, and through what intervention mechanisms. Without that architecture, AI Risk Management, AI Security, and AI Compliance operate at the component level while failure operates at the flow level.

Decision flow failure does not occur at the model layer. It occurs at the transition layer — where one decision becomes the input to the next without structural review.

Technical Foundations: How Decision Flows Create Cascade Risk

Decision flows emerge from system composition — the architectural pattern of connecting multiple AI components into integrated processing chains. Understanding the mechanics of composition reveals why cascade risk is inherent, not incidental.

In a single-model deployment, the decision boundary is defined by one model’s learned parameters. The model receives an input, applies its decision function, and produces an output. Governance for that output can be designed around the model’s known characteristics — its calibration, its training distribution, its failure modes.

Decision flows replace this single-boundary pattern with multi-boundary chains. Each model in the chain operates on inputs that include outputs from upstream models. The downstream model was typically not trained on the upstream model’s errors. It was trained on clean data representing ideal inputs. When the upstream model produces an output at the boundary of its confidence range (technically within tolerance but statistically uncertain), the downstream model processes it as if it were ground truth.

This creates error amplification through false certainty. The upstream model’s uncertainty is not propagated. Only its point estimate is transmitted. The downstream model has no mechanism to distinguish between a high-confidence upstream output and a marginal one. It processes both identically. Marginal accuracy at the first stage is treated as definitive input at the second stage.
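A minimal Python sketch of this handoff, under stated assumptions: the names `StageOutput`, `handoff_point_estimate`, and `handoff_with_uncertainty` are hypothetical, and the confidence values are illustrative only. It shows that two upstream outputs with very different confidence become indistinguishable once only the label crosses the transition.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class StageOutput:
    """Hypothetical upstream output: a label plus the model's own confidence."""
    label: str
    confidence: float


def handoff_point_estimate(output: StageOutput) -> str:
    # Only the point estimate crosses the transition; confidence is dropped.
    return output.label


def handoff_with_uncertainty(output: StageOutput) -> Tuple[str, float]:
    # Alternative handoff that keeps the upstream confidence attached.
    return output.label, output.confidence


confident = StageOutput("high_risk", 0.98)  # illustrative values
marginal = StageOutput("high_risk", 0.51)

# The downstream stage receives identical inputs in the first case.
same_for_downstream = (
    handoff_point_estimate(confident) == handoff_point_estimate(marginal)
)
# It can only tell them apart when confidence is transmitted.
distinguishable = (
    handoff_with_uncertainty(confident) != handoff_with_uncertainty(marginal)
)
```

Carrying the confidence forward does not by itself fix the cascade; it only makes the marginal case visible to whatever control sits at the transition.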

Temporal coupling compounds the cascade dynamic. In real-time decision flows, the latency between stages is measured in milliseconds. A classification triggers a score. The score triggers a routing decision. The routing decision triggers an action. The entire chain executes before any human review is structurally possible. The system’s decision velocity exceeds the organization’s intervention velocity by orders of magnitude.

Feedback loops within decision flows create self-reinforcing error patterns. When a downstream action modifies the environment that produces future inputs to the upstream model, errors become cyclical. A misclassification triggers an action. The action changes the data distribution. The changed distribution increases the probability of future misclassification. The flow does not correct the error. It institutionalizes it.

Ensemble and multi-agent architectures distribute decision logic across components that may operate asynchronously, on different data, with different update cycles. The aggregated decision is an emergent property of component interactions that no single governance framework monitors. The decision flow produces outputs that no individual component authored and no governance authority evaluated as a whole.

The technical pattern is consistent: composition creates cascade surfaces that are invisible at the component level and uncontrollable without flow-level governance architecture.

Structural Fragility: Where Decision Flows Break

Decision flow architectures rest on assumptions that appear valid at design time and degrade systematically under production conditions.

The first assumption is output stability. Decision flows are designed assuming that upstream models produce outputs within expected ranges. In production, distribution shift, adversarial input, and data quality degradation cause upstream outputs to drift toward boundary conditions — regions where the model’s decision is statistically marginal. These boundary outputs are technically valid. They are functionally unreliable. The downstream model cannot distinguish between them and high-confidence outputs. The flow continues. The foundation weakens.

The second assumption is error isolation. System architects assume that errors at one stage remain contained — that a misclassification affects only the immediate downstream step. In practice, decision flows create error propagation paths where a single upstream error affects every subsequent stage. The error does not diminish as it propagates. It transforms. A marginal classification becomes a definitive score. A questionable score becomes a routing decision. A routing decision becomes an automated action. The original uncertainty has been laundered through sequential processing into apparent certainty.

The third assumption is temporal consistency. Decision flows assume that the models operating at different stages share a consistent temporal context — that they were trained on compatible data reflecting comparable time periods. In production, models are updated on different schedules. An upstream model retrained on recent data produces outputs that reflect current patterns. A downstream model trained on historical data interprets those outputs through outdated assumptions. The flow is internally inconsistent. No component-level monitoring detects the inconsistency because each model is valid in isolation.

The fourth assumption is graceful degradation. System designers assume that when one component underperforms, the overall flow degrades proportionally. In practice, decision flows exhibit nonlinear failure characteristics. A small degradation in upstream accuracy can produce disproportionate downstream impact when it pushes outputs across decision boundaries in downstream models. The flow does not degrade gracefully. It fails at thresholds — and the location of those thresholds is emergent, not designed.
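The threshold behavior can be illustrated with a small Python sketch. Everything here is assumed for illustration: the routing threshold of 0.70, the function names, and the sample scores. A uniform drift of 0.04 in upstream scores leaves distant scores untouched but flips every decision that sat just above the downstream boundary.

```python
ROUTE_THRESHOLD = 0.70  # hypothetical downstream decision boundary


def route(score: float) -> str:
    """Downstream routing rule applied to an upstream score."""
    return "auto_approve" if score >= ROUTE_THRESHOLD else "manual_review"


def flipped_decisions(scores, drift):
    """Count decisions that change when every upstream score shifts by `drift`."""
    return sum(route(s) != route(s + drift) for s in scores)


# Illustrative upstream scores; three of them sit just above the boundary.
scores = [0.30, 0.55, 0.71, 0.72, 0.73, 0.95]
small_drift = -0.04

flips_near_boundary = flipped_decisions(scores, small_drift)
flips_far_from_boundary = flipped_decisions([0.30, 0.95], small_drift)
```

With these values, half of the routing decisions change under a drift that would look negligible in a component-level accuracy report, while the scores far from the boundary are unaffected.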

The outputs remain. The structural coherence between them erodes. No single component signals the failure because no single component owns the flow.

Organizational Failure Patterns

Organizations that deploy complex AI systems structure governance around components — individual models, individual teams, individual risk assessments. Decision flows cross these governance boundaries without structural recognition.

The most pervasive failure pattern is component-level governance applied to flow-level risk. Each model in the decision flow has a model owner, a risk assessment, and a monitoring framework. No governance structure owns the transitions between models. The classification model is governed. The scoring model is governed. The transition from classification to scoring — where the classification output becomes the scoring input — is ungoverned. This transition is where cascade risk originates.

The second failure pattern is velocity-governance mismatch. Decision flows execute at computational speed. Governance processes operate at organizational speed. By the time a harmful outcome is detected, the flow has already propagated through all downstream stages and produced operational impact. No governance mechanism had the opportunity to intervene. The organization’s governance framework was designed for human decision cadence. The system operates at machine decision cadence. The mismatch is structural, not procedural.

The third failure pattern is accountability fragmentation across flow boundaries. When a decision flow produces a harmful outcome, accountability traces back through the chain. The classification team demonstrates their model performed within specification. The scoring team demonstrates their model performed within specification. The automation team demonstrates their system executed correctly. Every component operated as designed. The harmful outcome is an emergent property of their interaction. No team is accountable for the interaction because no team governs it.

The fourth failure pattern is invisible escalation failure. Decision flows often include nominally defined escalation triggers — conditions under which the flow should pause for human review. In practice, these triggers are set at component level. Each component’s escalation threshold is calibrated for that component’s individual performance characteristics. No escalation trigger monitors the cumulative uncertainty across the full flow. A sequence of individually acceptable outputs can produce collectively unacceptable risk. No escalation fires because no single component breached its threshold.
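A short Python sketch of this gap, under simplifying assumptions: stage errors are treated as independent, so end-to-end confidence is approximated by the product of per-stage confidences, and the floors of 0.90 per stage and 0.85 for the flow are hypothetical values chosen for illustration.

```python
from math import prod

STAGE_FLOOR = 0.90  # hypothetical per-component escalation threshold
FLOW_FLOOR = 0.85   # hypothetical end-to-end escalation threshold


def component_escalations(confidences):
    """One flag per stage: True if that stage alone breaches its threshold."""
    return [c < STAGE_FLOOR for c in confidences]


def flow_confidence(confidences):
    """End-to-end confidence, assuming stage errors are independent."""
    return prod(confidences)


# Illustrative confidences for classification, scoring, and routing.
confidences = [0.92, 0.91, 0.93]

any_component_fired = any(component_escalations(confidences))
flow_fired = flow_confidence(confidences) < FLOW_FLOOR
```

Here every stage clears its own threshold, so no component escalates, yet the combined confidence is roughly 0.78 and falls below the flow-level floor. Only a trigger defined over the whole flow would fire.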

These are governance architecture failures — not engineering failures. The components work. The flow is ungoverned. The failure emerges from the gap between component governance and flow governance.

AI Security Implications

Decision flows create security exposure that extends beyond individual model vulnerability into systemic attack surfaces.

An adversary who understands the decision flow architecture can target the highest-leverage transition point — the stage where a minimal input perturbation produces maximum downstream cascade. This is not a model attack. It is a flow attack. The adversary does not need to compromise any individual model. They need to introduce an input that produces a marginal output at the first stage — an output within normal tolerance but at the boundary of a downstream decision threshold. The cascade does the amplification.

Decision flows with automated actions create execution chains that an adversary can exploit for indirect control. By manipulating inputs to produce specific upstream outputs, the adversary can trigger specific downstream actions without ever directly interacting with the downstream system. The attack surface is the upstream input. The impact surface is the downstream action. The distance between attack and impact makes detection structurally difficult.

Monitoring at the component level cannot detect flow-level attacks. Each component’s monitoring framework evaluates inputs and outputs against that component’s expected distribution. A flow-level attack produces inputs that are individually normal at every stage. The attack signature exists only in the correlation across stages — a pattern that no component-level monitor is designed to detect.
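One possible cross-stage signal is sketched below in Python. It is an assumption, not an established detection method: the function names, the 0.05 margin, and the thresholds are hypothetical. The idea is to measure how often several stages of the same flow run land near their decision boundaries at once, a pattern that per-stage monitors would each see as normal.

```python
def near_boundary(value, threshold, margin=0.05):
    """True if a stage output sits within `margin` of its decision threshold."""
    return abs(value - threshold) <= margin


def joint_boundary_rate(traces, thresholds, margin=0.05, min_stages=2):
    """Fraction of flow runs where at least `min_stages` stages were near boundary."""
    if not traces:
        return 0.0
    hits = 0
    for trace in traces:
        near = sum(
            near_boundary(trace[stage], thresholds[stage], margin)
            for stage in thresholds
        )
        if near >= min_stages:
            hits += 1
    return hits / len(traces)


# Hypothetical thresholds and per-run stage outputs.
thresholds = {"classifier": 0.50, "scorer": 0.70}
traces = [
    {"classifier": 0.95, "scorer": 0.90},  # both stages far from boundary
    {"classifier": 0.52, "scorer": 0.90},  # only one stage near boundary
    {"classifier": 0.52, "scorer": 0.71},  # both near boundary
    {"classifier": 0.49, "scorer": 0.72},  # both near boundary
]

rate = joint_boundary_rate(traces, thresholds)
```

A sustained rise in this rate relative to its historical baseline would be one candidate trigger for flow-level investigation; what counts as abnormal has to be set per flow.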

Response to flow-level security incidents requires flow-level stop authority. When a flow-level attack is detected, the response must halt the entire flow — not just the compromised component. Without defined stop authority that operates across component boundaries, security response is limited to component-level actions while the flow continues to propagate the attack through uncompromised components.

Where decision authority remains undefined across flow boundaries, as examined in the preceding briefing on governance architecture, security response inherits that authority vacuum. No one can halt a flow when no one has been assigned the authority to do so.

Compliance and Accountability Implications

Compliance frameworks require organizations to demonstrate accountability for automated decisions. Decision flows distribute decisions across components in ways that make accountability attribution structurally complex without flow-level governance.

Auditability requires traceability across the full decision flow. An audit that examines the final output of a decision flow must be able to reconstruct the entire chain — which input entered at which stage, which model produced which intermediate output, which transition logic connected which stages, and which thresholds governed which decisions. Without flow-level traceability, the audit can examine individual components but cannot reconstruct the decision that produced the outcome.
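As an illustration of what such a trace might contain, the Python sketch below records one entry per stage and reconstructs the chain in order. The class names, field names, and sample values are hypothetical; a real schema would follow the organization’s own audit requirements.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class TraceStep:
    """One stage of a flow run, as an auditor would need to see it."""
    stage: str
    model_version: str
    input_ref: str    # reference to the input that entered this stage
    output: str       # intermediate output produced by this stage
    threshold: float  # threshold that governed the decision


@dataclass
class FlowTrace:
    flow_id: str
    steps: List[TraceStep] = field(default_factory=list)

    def record(self, step: TraceStep) -> None:
        self.steps.append(step)

    def reconstruct(self) -> List[str]:
        """Return the full decision chain in execution order."""
        return [
            f"{s.stage}@{s.model_version}: {s.input_ref} -> {s.output} "
            f"(threshold {s.threshold})"
            for s in self.steps
        ]


trace = FlowTrace(flow_id="run-0001")
trace.record(TraceStep("classifier", "1.4", "txn-001", "high_risk", 0.5))
trace.record(TraceStep("scorer", "2.1", "high_risk", "score=0.82", 0.7))
chain = trace.reconstruct()
```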

Documentation requirements extend beyond model documentation to flow documentation. Documenting individual models is necessary but insufficient. The decision flow’s transition logic, cascade boundaries, escalation triggers, and stop conditions must be documented as architectural elements. The flow is a decision system. It requires system-level documentation, not just component-level documentation.

Proportionality assessment requires flow-level impact analysis. Determining whether an automated decision is proportionate to its purpose requires understanding the full chain of amplification. A classification that is proportionate as an isolated output may become disproportionate when it triggers a scoring cascade that produces an automated denial. Proportionality is a flow property, not a component property.

Accountability assignment requires flow-level authority. When a decision flow produces a harmful outcome, compliance frameworks must be able to identify who held authority over the flow — not just over individual components. Without named flow-level authority, accountability becomes a retrospective attribution exercise where each component team demonstrates individual compliance while no one demonstrates flow-level governance.

Compliance is operational enforceability — not component-level documentation. Enforceability at the flow level requires governance architecture at the flow level.

Production-Environment Reality

Production environments expose decision flow failures through operational patterns that intensify with system complexity, integration depth, and organizational scale.

Transformation chains that span organizational boundaries distribute decision flow stages across teams with different governance frameworks. The data engineering team produces features. The model team produces predictions. The application team consumes predictions and produces actions. Each team governs its stage. The transitions between stages operate in governance gaps. Even this simple chain involves three teams and two ungoverned transitions, and production decision flows routinely cross more.

Hybrid workflows that inject human review into automated decision flows create temporal bottlenecks that distort flow behavior. When a human review step is inserted between automated stages, the flow’s latency profile changes. Downstream automated stages that were designed for millisecond input cadence now receive batch inputs at human review cadence. The downstream model’s behavior under batch input may differ from its behavior under streaming input. The flow behaves differently from how it was designed because the human intervention changed its temporal characteristics.

Model versioning across decision flow stages creates compatibility gaps. When the upstream model is updated to a new version, its output distribution may shift. Downstream models trained to expect the previous output distribution now receive inputs from a different distribution. The flow’s behavior changes without any single model failing. Version compatibility across decision flow stages is a governance requirement that most organizations do not track.
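One way to track this is a compatibility record that lists which upstream versions each downstream model has been validated against. The Python sketch below is a hypothetical illustration: the `VALIDATED_UPSTREAM` table, the stage names, and the version strings are all assumed.

```python
# Hypothetical record: for each downstream (stage, version), the upstream
# (stage, version) pairs it has been validated against.
VALIDATED_UPSTREAM = {
    ("scorer", "2.1"): {("classifier", "1.4"), ("classifier", "1.5")},
}


def is_compatible(upstream, downstream):
    """True only if this upstream version was validated for this downstream."""
    return upstream in VALIDATED_UPSTREAM.get(downstream, set())


def deployment_allowed(new_upstream, downstreams):
    """Allow an upstream update only if every downstream was validated against it."""
    return all(is_compatible(new_upstream, d) for d in downstreams)


current_ok = deployment_allowed(("classifier", "1.5"), [("scorer", "2.1")])
update_ok = deployment_allowed(("classifier", "2.0"), [("scorer", "2.1")])
```

In this sketch the move to classifier 2.0 is refused until the scorer has been re-validated against it, which turns an implicit assumption about output distributions into an explicit, checkable record.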

Monitoring obligations at the flow level require metrics that do not exist at the component level — cross-stage correlation, cascade latency, amplification factor, and end-to-end confidence degradation. Organizations that monitor individual models but not decision flows detect component health but miss flow degradation.

Integration pressure drives organizations to add new stages to existing decision flows without re-evaluating the flow’s governance architecture. Each addition increases cascade depth, amplification potential, and accountability fragmentation. The flow grows. The governance architecture remains static.

Vendor components embedded within decision flows introduce governance boundaries that the organization cannot cross. When a vendor model operates as a stage in a decision flow, the organization typically cannot audit that stage’s internal logic, cannot control its update schedule, and cannot modify its behavior. The flow includes a stage that the organization governs by contract, not by architecture. Changes in vendor policy alter the flow’s behavior without the organization’s control.

Governance Architecture for Decision Flows

Decision flow governance requires architectural design that operates at the flow level — not at the component level.

Transition gates. Every transition between decision flow stages must include a defined gate — a structural checkpoint that evaluates the upstream output before it becomes a downstream input. Gates enforce range validation, confidence thresholds, and consistency checks at each transition.
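A transition gate of this kind might look like the following Python sketch. The names `TransitionGate`, `UpstreamOutput`, and `GateDecision`, and the limits used, are hypothetical; the sketch covers range validation and a confidence threshold and leaves consistency checks out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class GateDecision(Enum):
    PASS = "pass"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass(frozen=True)
class UpstreamOutput:
    score: float
    confidence: float


@dataclass(frozen=True)
class TransitionGate:
    """Checkpoint evaluated before an upstream output becomes a downstream input."""
    min_score: float
    max_score: float
    min_confidence: float

    def evaluate(self, output: UpstreamOutput) -> GateDecision:
        # Range validation: out-of-range values never reach the next stage.
        if not (self.min_score <= output.score <= self.max_score):
            return GateDecision.BLOCK
        # Confidence threshold: marginal outputs go to escalation.
        if output.confidence < self.min_confidence:
            return GateDecision.ESCALATE
        return GateDecision.PASS


gate = TransitionGate(min_score=0.0, max_score=1.0, min_confidence=0.80)
passed = gate.evaluate(UpstreamOutput(score=0.65, confidence=0.95))
escalated = gate.evaluate(UpstreamOutput(score=0.65, confidence=0.55))
blocked = gate.evaluate(UpstreamOutput(score=1.40, confidence=0.99))
```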

Escalation architecture. Each gate must include defined escalation criteria, a named escalation authority, a response time constraint, and a defined action set. Escalation that depends on individual judgment without structural triggers does not function at production speed.
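The four elements named above can be captured as a structured record rather than left to individual judgment. The Python sketch below is hypothetical throughout: the field names, the role name, and the rule that a default action applies when the authority does not respond in time are assumptions added for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class EscalationPolicy:
    gate: str
    trigger_below_confidence: float    # escalation criterion
    authority: str                     # named escalation authority
    response_seconds: int              # response time constraint
    allowed_actions: Tuple[str, ...]   # defined action set
    default_action: str                # assumed fallback on timeout

    def resolve(self, confidence: float, decision: Optional[str],
                elapsed_seconds: int) -> str:
        if confidence >= self.trigger_below_confidence:
            return "continue"  # criterion not met, no escalation
        in_time = elapsed_seconds <= self.response_seconds
        if decision in self.allowed_actions and in_time:
            return decision
        # No valid decision within the time constraint.
        return self.default_action


policy = EscalationPolicy(
    gate="classification_to_scoring",
    trigger_below_confidence=0.80,
    authority="flow-risk-officer",
    response_seconds=300,
    allowed_actions=("release", "hold", "halt"),
    default_action="hold",
)
```

For example, `policy.resolve(0.60, "release", 120)` returns the authority’s decision, while the same call with an elapsed time of 900 seconds falls back to `"hold"`.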

Stop authority. Named authority must exist to halt the decision flow at any stage. Stop authority must be exercisable at operational speed without requiring consensus from all component owners. A decision flow that cannot be stopped is an uncontrolled propagation chain.
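In code, stop authority can be as simple as a shared halt flag that every stage checks and that only named roles may set. The Python sketch below uses the standard library’s `threading.Event`; the `FlowStop` class, the `run_flow` helper, and the role names are hypothetical.

```python
import threading


class FlowStop:
    """Halt switch for a whole flow, settable only by named authorities."""

    def __init__(self, authorized):
        self._authorized = frozenset(authorized)
        self._halted = threading.Event()
        self.halted_by = None

    def halt(self, requester: str) -> bool:
        # No consensus step: one authorized requester is sufficient.
        if requester not in self._authorized:
            return False
        self.halted_by = requester
        self._halted.set()
        return True

    def is_halted(self) -> bool:
        return self._halted.is_set()


def run_flow(stages, value, stop: FlowStop):
    """Run stages in order, checking the halt flag before each transition."""
    for stage in stages:
        if stop.is_halted():
            return None  # flow stopped before this stage executed
        value = stage(value)
    return value


stages = [lambda x: x + 1, lambda x: x * 2]
stop = FlowStop(authorized={"flow-owner"})

before_halt = run_flow(stages, 1, stop)
rejected = stop.halt("model-owner")  # component owner lacks flow authority
accepted = stop.halt("flow-owner")
after_halt = run_flow(stages, 1, stop)
```

The design choice illustrated is that the flag belongs to the flow, not to any component, so halting does not depend on each component owner acting separately.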

Cascade budget. The maximum allowable amplification factor across the full decision flow must be defined, monitored, and enforced. When cumulative uncertainty across stages exceeds the cascade budget, the flow must escalate or halt — regardless of individual component health.
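A cascade budget could be enforced along the following lines. This Python sketch makes two labeled assumptions: cumulative uncertainty is approximated as one minus the product of per-stage confidences (which treats stage errors as independent), and the budget values and stage names are illustrative.

```python
def enforce_cascade_budget(stage_confidences, budget):
    """Return the first stage at which cumulative uncertainty exceeds the budget.

    `stage_confidences` is a list of (stage_name, confidence) pairs in
    execution order. Returns None if the flow stays within budget.
    """
    cumulative_confidence = 1.0
    for name, confidence in stage_confidences:
        cumulative_confidence *= confidence
        if 1.0 - cumulative_confidence > budget:
            return name  # the flow must escalate or halt here
    return None


# Illustrative per-stage confidences; each looks healthy on its own.
run = [("classify", 0.95), ("score", 0.92), ("route", 0.90)]

halt_at = enforce_cascade_budget(run, budget=0.15)
within_budget = enforce_cascade_budget(run, budget=0.25)
```

With a budget of 0.15 the flow is stopped at the routing stage, where accumulated uncertainty reaches about 0.21, even though no individual stage reported a confidence below 0.90.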

Latency architecture for intervention. Where human review is a governance requirement, the decision flow must be architecturally designed to accommodate intervention latency. This means buffering, queuing, or staging mechanisms that allow human review without distorting downstream behavior.
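A staging mechanism of this kind is sketched below in Python. The `ReviewBuffer` class and its method names are hypothetical. Items wait in a pending queue until a reviewer approves them, and the downstream stage pulls approved items one at a time, in their original order, rather than receiving a batch pushed at review cadence.

```python
from collections import deque


class ReviewBuffer:
    """Holds upstream outputs for human review before downstream consumption."""

    def __init__(self):
        self._pending = deque()   # awaiting human review
        self._released = deque()  # approved, awaiting downstream pull

    def submit(self, item) -> None:
        self._pending.append(item)

    def approve_next(self) -> bool:
        """Reviewer approves the oldest pending item, preserving order."""
        if not self._pending:
            return False
        self._released.append(self._pending.popleft())
        return True

    def next_for_downstream(self):
        """Downstream pulls one approved item at a time, or None if empty."""
        return self._released.popleft() if self._released else None


buffer = ReviewBuffer()
for item in ("case-1", "case-2", "case-3"):
    buffer.submit(item)

nothing_yet = buffer.next_for_downstream()  # nothing approved so far
buffer.approve_next()
buffer.approve_next()
first = buffer.next_for_downstream()
second = buffer.next_for_downstream()
third = buffer.next_for_downstream()        # case-3 is still pending review
```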

Flow-level monitoring. Monitoring must include cross-stage correlation metrics, end-to-end traceability, cascade depth tracking, and amplification measurement. Component-level monitoring is necessary but insufficient.

Version compatibility governance. Model updates at any stage must be evaluated for flow-level impact before deployment. Version compatibility across stages is a governance decision, not an engineering convenience.

Flow documentation and traceability. The decision flow’s architecture — its stages, transitions, gates, escalation paths, stop conditions, and authority assignments — must be documented as a governed system artifact, continuously updated as the flow evolves.

This framework does not eliminate cascade failure. It prevents cascade failure from becoming institutional failure.

Doctrine Closing

Decision flow architecture is not an engineering optimization problem. It is a governance architecture problem.

Organizations that govern individual models but not the flows between them govern the components while leaving the system ungoverned. Each component operates within specification. The flow operates without structural control. When cascade failure occurs, it is not because any component failed. It is because no one governed the transitions.

A decision flow without stop authority is not a process. It is uncontrolled propagation. Governance architecture exists to ensure that every transition has a gate, every gate has an authority, and every authority has the structural capability to intervene.