Data Lineage Is the Missing Layer in AI Governance

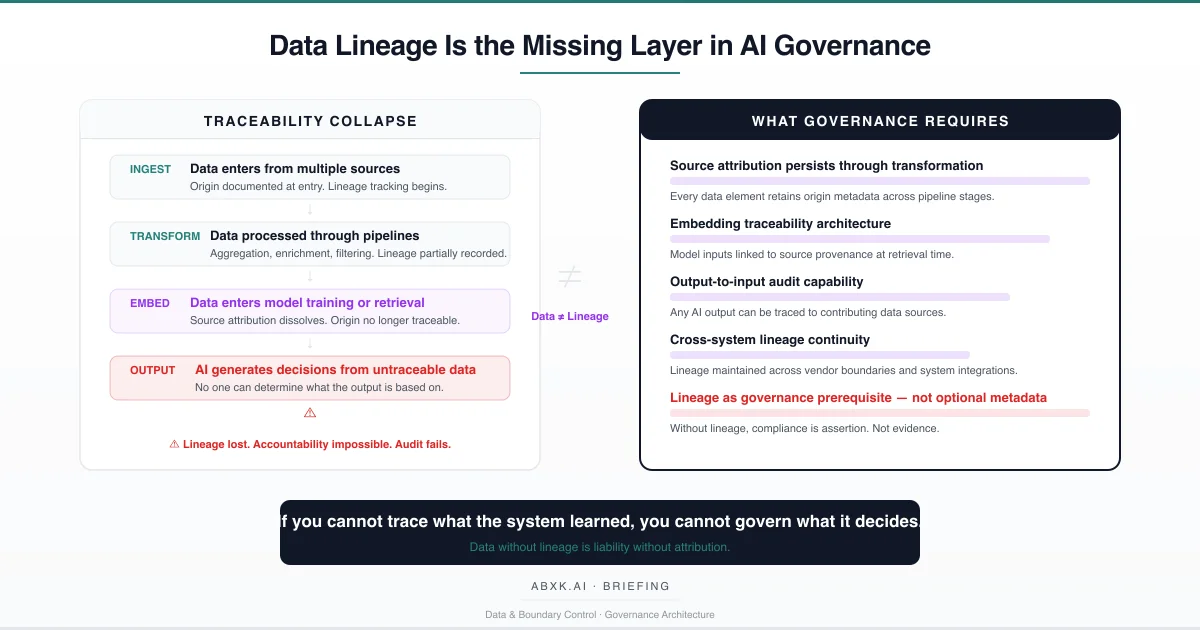

AI systems consume data. They transform it, embed it, combine it, and produce outputs that influence decisions. At each stage, the connection between output and origin weakens. In most production environments, it breaks entirely.

This is not a metadata management problem. It is a governance architecture failure.

Data lineage — the ability to trace any AI output back to the specific data sources, transformations, and decision points that produced it — is the structural prerequisite for every other governance function. Without lineage, compliance is assertion without evidence. Audits produce documentation without substance. Risk assessments evaluate systems whose actual data foundations are unknown.

AI Governance depends on the ability to answer a fundamental question: what data did this system use, from where, under what authority, and through what transformations? When that question cannot be answered, governance does not degrade incrementally. It collapses structurally.

Data lineage does not fail at the tooling layer. It fails at the architecture layer — where systems were designed to process data efficiently but not to preserve its provenance across the decision chain.

Understanding that distinction is central to AI Risk Management, AI Security, AI Compliance, and Responsible AI implementation in production environments.

Technical Foundations: How Lineage Dissolves

Data enters AI systems through structured and unstructured channels. It arrives with metadata — timestamps, source identifiers, consent records, quality annotations. In well-governed data warehouses, this metadata persists through standard transformations. The lineage chain remains intact.

AI systems introduce a fundamentally different processing architecture. Data does not merely move through pipelines. It is transformed into representations that have no structural relationship to their source material.

Training data is consumed during model development. Individual data points contribute to weight adjustments across millions or billions of parameters. Once training completes, no mechanism exists to determine which specific data influenced which specific output. The contribution is distributed, non-linear, and statistically irreversible. The data is present in the model’s behavior. It is absent from its auditability.

Retrieval-augmented generation introduces a different lineage challenge. Data is converted into vector embeddings — mathematical representations that capture semantic similarity but discard structural metadata. When a query retrieves relevant embeddings, the system selects based on proximity in vector space. The original document, its source, its creation date, its authorization status, its consent basis — these attributes exist only if the embedding architecture was specifically designed to preserve them. Most are not.

Fine-tuning compounds the problem. A model adjusted on domain-specific data inherits the lineage obligations of that data. If the fine-tuning dataset contained information subject to access restrictions, consent limitations, or temporal validity constraints, those obligations transfer to every subsequent output. But the fine-tuned model carries no record of what it absorbed. The restrictions apply. The traceability does not exist.

Calibration drift introduces temporal lineage failure. A model calibrated on data from one period produces outputs that reflect that period’s distributions. As the underlying reality shifts, the model’s outputs increasingly reflect historical data rather than current conditions. Without lineage, no one can determine when the model’s foundational data became stale — or which outputs are affected.

The technical pattern is consistent: AI systems are optimized for inference performance. Lineage preservation requires architectural investment that competes directly with processing efficiency. In the absence of governance mandates, efficiency wins. Lineage is treated as optional metadata rather than structural infrastructure.

Structural Fragility: Where Traceability Breaks

The fragility of data lineage in AI systems follows a predictable degradation pattern. It begins with assumptions that appear reasonable at deployment and erode systematically under production conditions.

The first assumption is source stability. Organizations design lineage systems around known data sources with established schemas and metadata standards. In production, data sources multiply. New feeds are integrated. Third-party data arrives through vendor APIs with undisclosed provenance. The lineage architecture designed for five data sources encounters fifty. Coverage gaps emerge silently.

The second assumption is transformation transparency. Standard ETL pipelines produce deterministic outputs from known inputs. AI transformation chains include probabilistic steps — sampling, augmentation, synthetic generation, embedding. Each probabilistic step introduces a lineage discontinuity. The output cannot be deterministically traced to a specific input. It can only be attributed to a distribution of possible inputs.

The third assumption is single-system scope. Lineage architectures typically operate within a single platform or data environment. Production AI systems span multiple platforms, cloud providers, vendor services, and internal applications. Data crosses organizational boundaries. It enters systems governed by different policies, different retention rules, different consent frameworks. Lineage that terminates at the organizational boundary provides traceability within the organization but blindness beyond it.

The fourth assumption is metadata durability. Data enters systems with rich metadata. As it flows through processing stages, metadata is selectively preserved, summarized, or discarded. By the time data reaches the model layer, the metadata that governance requires — consent status, access authorization, temporal validity, quality certification — has been stripped to reduce storage costs and processing overhead.

The structural fragility is not that lineage is technically impossible. It is that lineage preservation must be designed into every layer of the data architecture, from ingestion through transformation through embedding through output. Any layer that does not actively maintain lineage becomes a permanent gap in the traceability chain. One gap is sufficient to make the entire chain unauditable.

The number remains. The meaning changes. The origin disappears.

Organizational Failure Patterns

Organizations do not deliberately abandon data lineage. They adopt AI systems that were never designed to provide it, and then build governance frameworks that assume it exists.

The most common failure pattern is compliance assertion without evidence. Organizations document their data governance policies. They describe data classification schemes, access controls, and retention policies. They assert that AI systems operate within these boundaries. When auditors request evidence that a specific AI output was produced from data that met classification, consent, and quality standards, the evidence does not exist. The assertion is structurally unsupported.

The second failure pattern is retrospective lineage construction. After an incident — a biased output, a privacy violation, a regulatory inquiry — organizations attempt to reconstruct what data influenced the problematic output. In traditional systems, this reconstruction is feasible through transaction logs and audit trails. In AI systems where data has been embedded, fine-tuned, or probabilistically transformed, reconstruction produces approximations at best. The actual causal chain is unrecoverable.

The third failure pattern is vendor lineage delegation. Organizations using external AI services assume the vendor maintains data lineage. Vendor terms of service rarely guarantee lineage preservation. Vendor architectures rarely support output-to-input tracing across customer boundaries. The organization’s compliance obligation remains. The vendor’s lineage capability does not satisfy it.

The fourth failure pattern is lineage scope reduction. Organizations implement lineage for structured data in traditional analytics platforms and declare data governance complete. AI systems — particularly generative models, retrieval-augmented architectures, and multi-agent systems — operate on unstructured data that falls outside the lineage architecture entirely. The governance coverage appears comprehensive. The actual coverage excludes the systems with the highest risk exposure.

These are governance failures, not tooling failures. Organizations possess the technical capability to implement comprehensive data lineage. They choose architectures, vendors, and deployment timelines that make lineage preservation structurally impractical.

AI Security Implications

Data lineage gaps create security vulnerabilities that extend beyond data protection into decision integrity.

When an organization cannot trace what data influenced an AI output, it cannot determine whether that data was legitimate. Data poisoning — the deliberate introduction of manipulated data into training sets, retrieval databases, or fine-tuning datasets — exploits lineage blindness directly. The poisoned data enters through the same channels as legitimate data. Without lineage, the organization cannot distinguish between them. Without attribution, the organization cannot identify which outputs were affected.

Adversarial data injection becomes structurally undetectable in systems without lineage. An attacker who introduces corrupted data into a retrieval-augmented system influences outputs without triggering any security alert. The system functions normally. The outputs appear valid. The corruption persists until someone independently verifies the output against a trusted source — a verification step that most operational workflows do not include.

Cross-system data propagation amplifies the security exposure. Data from one AI system feeds into another. Outputs from generative systems become inputs to analytical systems. Without lineage, the propagation chain is invisible. A security compromise in one system silently corrupts downstream systems through data flows that no security architecture monitors because no lineage architecture maps them.

The attack surface is not the model. The attack surface is the data pipeline — and lineage is the only mechanism that makes that pipeline visible to security operations.

Compliance and Accountability Implications

Compliance frameworks increasingly require organizations to demonstrate what data influenced automated decisions. This is not a documentation exercise. It is an operational capability requirement.

Auditability without lineage produces documentation that describes processes but cannot substantiate outcomes. An organization can document that it maintains data quality standards. It cannot demonstrate that a specific AI output was produced from data meeting those standards if the connection between output and input data has been severed by the processing architecture.

Proportionality assessment requires understanding what data a system actually uses. Compliance frameworks require that data usage is proportionate to the stated purpose. Without lineage, organizations cannot verify proportionality because they cannot enumerate the actual data contributing to system behavior. They can describe intended data usage. They cannot prove actual data usage.

Consent management in AI systems requires lineage as infrastructure. When data subjects exercise rights regarding their data, the organization must identify where that data exists, how it has been used, and what outputs it has influenced. In traditional systems, this is achievable through database queries and access logs. In AI systems where data has been embedded into model weights or vector stores, the data subject’s information may influence outputs indefinitely with no mechanism for identification or removal. Lineage is the only architecture that makes consent operationally enforceable rather than theoretically acknowledged.

Accountability requires attribution. When an AI system produces a harmful output, accountability demands that the organization can identify what went wrong, where, and why. Without lineage, incident investigation reaches a structural dead end: the output exists, the harm is documented, but the causal chain between data input and problematic output is irretrievable. Accountability becomes institutional assertion rather than operational evidence.

Lineage failure transforms governance from operational accountability into documented intention — the same structural gap that enables output migration and lifecycle persistence without review.

Compliance is operational enforceability — not documentation.

Production-Environment Reality

Production environments accelerate lineage degradation through operational patterns that individually appear manageable and collectively destroy traceability.

Transformation chains in production extend beyond design specifications. Data passes through preprocessing, feature engineering, embedding generation, model inference, post-processing, and output formatting. Each stage may be maintained by a different team, operated on a different platform, and governed by different policies. Lineage architecture that does not span the full chain produces partial traceability that is operationally equivalent to no traceability when audit questions require end-to-end attribution.

Hybrid workflows combining human judgment with AI outputs create lineage complexity that most architectures do not address. When a human analyst modifies an AI output before it enters another system, the downstream data reflects both the AI system’s contribution and the human modification. Without lineage that captures this interaction, subsequent analysis cannot separate human judgment from AI inference. Accountability for the combined output is ambiguous.

Model versioning without data versioning creates temporal lineage gaps. Organizations maintain version control for model code and weights. They rarely maintain equivalent version control for the data that trained, fine-tuned, or populated each model version. When a model is rolled back, the data environment is not rolled back with it. The model version is traceable. The data state it relies on is not.

Monitoring obligations require lineage to be meaningful. Organizations monitor AI systems for drift, bias, and performance degradation. Without lineage, monitoring detects symptoms but cannot diagnose causes. A model producing biased outputs may be reflecting biased training data, biased retrieval data, or biased post-processing logic. Without lineage, the monitoring system identifies the problem but cannot locate its origin.

Integration pressure from business operations compresses the time available for lineage implementation. New data sources are connected. New model versions are deployed. New vendor integrations are activated. Each integration creates new lineage requirements. When deployment velocity exceeds lineage architecture capacity, gaps accumulate. They are rarely closed retroactively.

Vendor policy evolution affects lineage continuity without organizational visibility. When a vendor changes its data processing practices, updates its model architecture, or modifies its retention policies, the lineage assumptions embedded in the organization’s governance framework become invalid. Without lineage that extends across vendor boundaries, these changes are invisible until they surface as compliance failures.

Governance Architecture for Data Lineage

Data lineage governance requires architectural commitment at the infrastructure layer, not policy directives at the documentation layer.

Lineage as architectural requirement. Every data pipeline, transformation process, and model integration must be designed to preserve source attribution, transformation history, and authorization metadata. Lineage is not a reporting feature added after deployment. It is a structural requirement that shapes system design.

Source attribution persistence. Every data element must retain traceable connection to its origin throughout the processing chain. When transformation makes direct attribution impossible, probabilistic attribution with documented confidence levels provides governance-grade traceability.

Embedding traceability architecture. Systems that convert data into embeddings, model weights, or other non-reversible representations must maintain parallel metadata structures that link representations to source data. The representation may be mathematically irreversible. The metadata linkage must not be.

Output-to-input audit capability. Any AI output must be traceable to the contributing data sources, transformation steps, and model versions that produced it. This capability must be operational — executable within audit timelines — not theoretical.

Cross-boundary lineage continuity. When data crosses organizational, vendor, or platform boundaries, lineage must be maintained through contractual requirements, API-level metadata propagation, and independent verification mechanisms.

Temporal lineage management. Lineage must include temporal dimensions — when data was ingested, when it was transformed, when it was used for training or retrieval, and when it influenced specific outputs. Temporal lineage enables retrospective analysis when data validity periods expire.

Lineage monitoring and alerting. Automated systems must detect lineage gaps — points where data enters the processing chain without adequate source attribution or exits without output-to-input traceability. Gaps trigger review, not default acceptance.

Suspension authority for lineage failures. When lineage cannot be established for a data source, a processing step, or a model output, defined authority must exist to suspend the affected system component until traceability is restored. Continued operation without lineage is continued operation without accountability.

This framework does not eliminate lineage risk. It prevents lineage failure from becoming institutional failure.

Doctrine Closing

Data lineage is not a metadata management problem. It is a governance architecture problem.

Organizations that deploy AI systems without lineage architecture deploy systems they cannot audit, cannot explain, and cannot hold accountable. They can document policies. They cannot demonstrate compliance. They can assert governance. They cannot prove it.

Governance does not make systems perfect. It makes failures traceable — and traceability is the structural foundation on which every other governance function depends.

Without lineage, AI governance is a framework without evidence. A claim without proof. A structure without foundation.

If you cannot trace what the system learned, you cannot govern what it decides.