The Confidence Illusion in AI Risk Scoring Systems

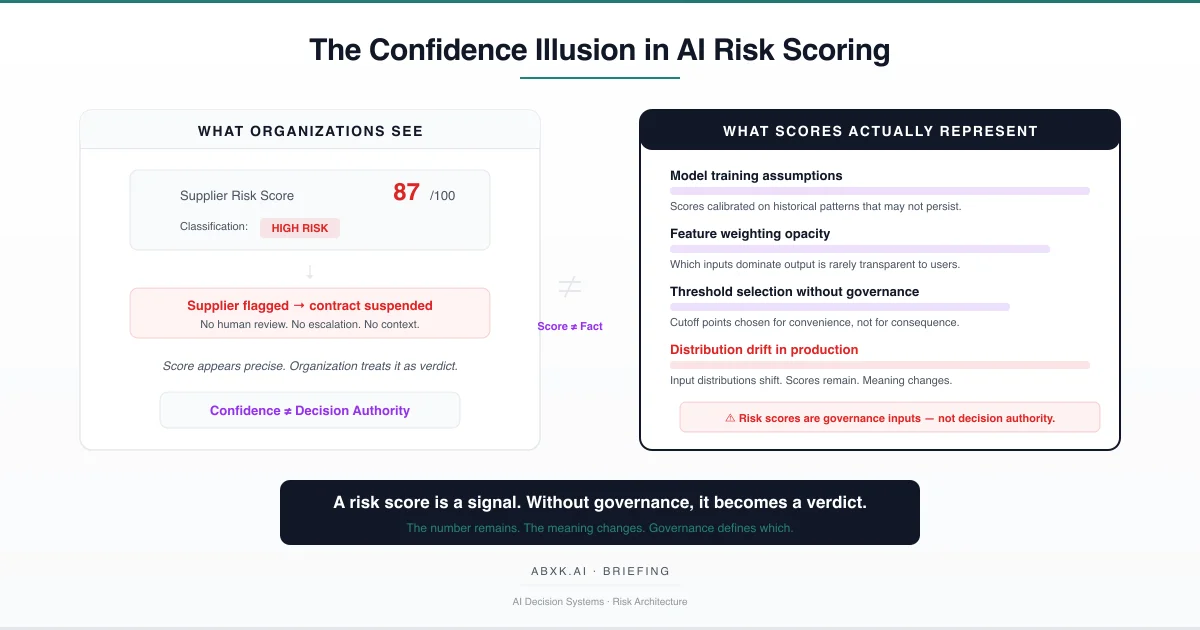

AI risk scoring systems present numerical outputs with apparent precision. A supplier scores 87. A contract scores 4.2. A transaction flags as high-risk. Different scales. Same structural problem: numerical outputs treated as stable truth. The numbers appear definitive. The underlying reliability is conditional.

In production AI systems, conditional reliability is not a statistical nuance. It is a governance problem.

Risk scores operate inside assumptions about model calibration, training distribution, feature relevance, and input stability. When those assumptions shift — and in production environments, they shift continuously — the meaning of the score shifts with them. The number remains stable. The inferential basis has changed.

AI Governance exists to define how probabilistic systems are interpreted under accountability. Without governance architecture, risk scores migrate from advisory signals into operational verdicts without structural safeguards. Suppliers are disqualified, contracts are flagged, transactions are blocked — based on scores whose reliability conditions are unexamined.

Risk scoring does not fail primarily at the technical layer. It fails at the decision layer — where organizations treat model output as objective fact, where threshold selection substitutes for judgment, and where no governance structure mediates between statistical signal and institutional action.

Understanding that distinction is central to AI Risk Management, AI Security, and Responsible AI implementation in production environments.

Risk Scoring as Probabilistic Inference

Most explanations describe risk scoring as objective assessment:

“We measure risk factors. We compute a score. The score tells you the risk.”

This framing is structurally incomplete. It reduces a complex inference problem to a measurement exercise, which obscures the conditions under which scoring becomes unreliable.

Risk scoring systems are statistical inference systems operating under uncertainty. They ingest structured and unstructured inputs — financial indicators, behavioral patterns, historical records, external signals — and produce numerical estimates of risk likelihood or severity. The output is probabilistic. The presentation is deterministic.

The distinction matters. A risk score of 87 does not mean the entity is 87 percent dangerous. It means that the model, given its training data, its feature weights, and its calibration assumptions, estimates elevated risk under current conditions. Change the training data, the feature weights, or the conditions, and the same entity may score 54.

Three structural properties define — and constrain — risk scoring reliability:

Calibration state. Risk scores are calibrated against historical outcomes. When the relationship between input features and actual risk changes — through market shifts, behavioral adaptation, or environmental factors — the calibration degrades. The model continues to produce scores. The scores no longer carry the same inferential weight.

Feature relevance. Risk models weight input features based on historical correlation with outcomes. Feature relevance is not static. Variables that predicted risk in one context may carry no predictive value in another. When feature relevance shifts without model update, the scoring system applies obsolete logic to current conditions.

Distribution stability. Risk scores assume that the distribution of inputs during inference resembles the distribution during training. In production environments, input distributions shift continuously. New entity types emerge. Behavioral patterns evolve. Data sources change format, coverage, or quality. Each shift degrades the alignment between model assumptions and operational reality.

Risk scoring is inference under uncertainty — not measurement of objective fact. When organizations treat scores as facts, the governance architecture necessary to interpret uncertainty is absent by design.

The Precision Illusion

Risk scores present a specific cognitive hazard: numerical precision creates the appearance of inferential reliability.

A score of 87 appears more authoritative than a qualitative assessment of “elevated risk.” The specificity of the number implies corresponding specificity in the underlying analysis. This implication is structurally false.

The precision of the output bears no necessary relationship to the reliability of the inference. A model with degraded calibration produces precise scores. A model with irrelevant features produces precise scores. A model operating outside its training distribution produces precise scores. The precision is a property of the output format, not of the analytical validity.

This creates a structural vulnerability in organizational decision-making. Decision-makers interpret precision as evidence quality. They treat the distance between 73 and 87 as meaningful in the same way that the distance between 73 and 87 degrees Celsius is meaningful — as a measurement with defined units and stable referents. Risk scores do not have defined units. Their referents shift with model state, input distribution, and calibration currency.

The number remains. The meaning changes.

When organizations embed risk scores into automated workflows — triggering actions at defined thresholds without human review — the precision illusion operates at institutional scale. The score becomes the decision. The conditions that would justify the decision remain unexamined.

Structural Fragility in Production

Risk scoring systems exhibit specific fragility patterns that intensify in production environments:

Temporal drift. Risk models trained on historical data assume that historical patterns predict future risk. This assumption degrades over time. Entity behavior evolves. Market conditions change. Regulatory environments shift. The model’s representation of risk becomes increasingly disconnected from actual risk as temporal distance from training increases.

Feedback loop contamination. In production systems, risk scores influence organizational behavior — which in turn influences the data that future models train on. Entities flagged as high-risk receive increased scrutiny, which generates more adverse findings, which reinforces the high-risk classification. The scoring system creates the evidence that validates its own output. This is not measurement bias — where the instrument distorts observation. It is intervention bias — where the instrument distorts the system it observes. The distinction matters: measurement bias can be corrected through recalibration; intervention bias is structural and self-reinforcing. This circularity is invisible at the individual score level and structurally significant at the portfolio level.

Threshold ossification. Organizations select risk thresholds during initial deployment — often based on limited operational data or vendor recommendations. These thresholds persist as institutional defaults long after the conditions that justified them have changed. The threshold becomes organizational habit rather than governance decision. Recalibration of thresholds requires the same governance authority as initial threshold selection, but is rarely subject to the same review process.

Aggregation opacity. Risk scores frequently aggregate multiple sub-scores into composite outputs. The aggregation logic — which sub-scores receive what weight, how interaction effects are handled, what happens when sub-scores conflict — is rarely transparent to end users. Decision-makers act on the composite score without understanding which input factors drive it. When a supplier scores 87, the decision-maker cannot determine whether the score reflects financial instability, regulatory exposure, operational fragility, or an artifact of feature weighting decisions made during model development.

These fragilities are structural, not incidental. They are inherent to probabilistic scoring deployed in dynamic environments. Governance architecture must account for them explicitly.

Organizational Failure Patterns

Common structural failures in risk scoring governance follow predictable patterns:

- Score-as-verdict. Organizations treat risk scores as conclusive assessments rather than probabilistic signals. A score above threshold triggers action. A score below threshold clears concern. The ambiguity inherent in probabilistic output is eliminated by policy — not by evidence.

- Threshold over-simplification. Single-threshold systems collapse the full range of probabilistic output into binary classifications. Complex risk profiles are reduced to “acceptable” or “unacceptable” based on cutoff points whose selection lacks governance justification.

- Authority displacement. Risk scores displace human judgment without explicitly reassigning decision authority. The model makes the decision. No individual is accountable for the decision. When the decision produces adverse outcomes, accountability fragments — the model produced the score, the threshold produced the classification, the workflow produced the action. No governance structure connects output to authority.

- Automation without review. Automated risk scoring workflows execute at speed. Review processes operate at human pace. When scoring volume exceeds review capacity, the gap between score production and score evaluation widens. Scores that should trigger review instead trigger action. The governance architecture assumes review capacity that does not exist operationally.

- False equivalence across domains. Risk scores developed for one domain are applied to adjacent domains without recalibration. A scoring model validated for supplier financial risk is extended to supplier operational risk. The numerical format creates the appearance of cross-domain validity. The inferential basis does not transfer.

These are governance failures — not tooling failures. The tools perform as designed. The organizational structures that should interpret, validate, and gate tool output are absent or insufficient.

AI Security Implications

Risk scoring misinterpretation creates structured AI Security exposure:

- Adversarial score manipulation. When organizations disclose — or when adversaries infer — the features that drive risk scores, entities under assessment can modify observable characteristics to reduce scores without reducing actual risk. The scoring system becomes an optimization target for the entities it evaluates.

- False clearance exploitation. Entities that score below risk thresholds receive reduced scrutiny. Adversaries who understand threshold behavior can maintain profiles that avoid detection while conducting activity that the scoring system was designed to identify. The threshold becomes a known constraint that adversarial actors engineer around.

- Cascade vulnerability. Risk scoring systems that feed downstream automated processes create cascade risk. A manipulated score propagates through approval workflows, resource allocation systems, and compliance reporting without independent verification at each stage. A single point of score manipulation produces system-wide effect.

- Model extraction through probing. Repeated interactions with risk scoring systems allow adversaries to map the relationship between inputs and outputs, effectively reconstructing the model’s decision boundaries. This extraction enables targeted evasion that the scoring system cannot detect because it operates within the model’s own logic.

From an AI Security perspective, risk scoring systems that operate without governance architecture are simultaneously decision systems and attack surfaces. The absence of interpretation governance amplifies both roles.

Compliance and Accountability Architecture

Risk scoring systems carry specific AI Compliance obligations that extend beyond technical documentation:

Proportionality. Organizational actions triggered by risk scores must be proportional to the confidence level of the underlying assessment. A score that triggers supplier disqualification requires higher governance justification than a score that triggers enhanced monitoring. When all scores above a single threshold trigger identical consequences, proportionality is absent by design.

Auditability. The complete decision chain — from input data through feature processing, model inference, score production, threshold application, and resulting action — must be reconstructable. Compliance is not satisfied by documenting that a model exists. It requires documenting how the model’s output was interpreted, by whom, under what authority, and with what contextual factors considered.

Contestability. Entities affected by risk score decisions must have access to structured review processes. Contestability requires that the organization can explain — not merely reproduce — the basis for the score. When scoring logic is opaque to the organization itself, contestability is structurally impossible regardless of policy commitments.

Accountability assignment. When risk scores drive institutional decisions, accountability for those decisions must be assigned to individuals with authority to interpret, override, or escalate model output. Accountability that resides with “the model” or “the system” is not governance. It is absence of governance.

Compliance is operational enforceability — not documentation. Documentation that describes governance which does not exist operationally satisfies neither regulatory expectations nor institutional accountability.

Production Environment Reality

Risk scoring behavior in production environments diverges systematically from behavior in development and validation environments:

Input quality degradation. Development environments use curated, cleaned, structured data. Production environments ingest data from operational sources with variable quality, coverage, completeness, and timeliness. Missing fields, stale records, format inconsistencies, and source outages affect score reliability in ways that development testing does not capture.

Volume-driven review collapse. Production scoring volumes routinely exceed the capacity of human review processes. Systems designed with review-before-action architecture degrade to score-as-action architecture under volume pressure. The governance design assumes conditions that production operations do not sustain.

Integration pressure. Production risk scores feed multiple downstream systems — procurement workflows, compliance dashboards, executive reporting, audit documentation. Each integration creates dependency on score availability and consistency. Pausing scoring for recalibration, threshold review, or model update disrupts dependent processes. Integration pressure creates structural resistance to the governance interventions that maintain scoring reliability.

Vendor model evolution. Organizations that rely on vendor-provided risk scoring models are subject to model updates, feature changes, and calibration shifts that occur outside organizational control. Vendor model changes may alter score distributions, threshold behavior, and feature relevance without corresponding changes to organizational governance. The score format persists. The scoring logic has changed.

Hybrid workflow complexity. Production risk scoring frequently operates within hybrid workflows that combine automated scoring, human review, and manual override. The interaction between these components creates governance gaps where accountability is ambiguous. When a human reviewer confirms a model score, accountability transfers to the reviewer. When a reviewer overrides a score, the override requires justification. When the volume of scores prevents meaningful review, neither transfer nor override occurs. The governance architecture exists on paper. The operational reality is unsupervised automation.

Structural Mitigation Framework

Risk scoring systems deployed in production environments require governance architecture that accounts for the structural limitations described above. From an AI Risk Management perspective, risk scoring represents model risk embedded within operational decision processes. Governance must account for both. The following framework defines minimum governance requirements:

Governance Architecture for AI Risk Scoring Systems

- Define score interpretation policy. Establish written guidelines specifying what risk scores mean operationally, what actions they authorize, and what actions they do not authorize. Prohibit automatic enforcement based on score output alone for high-consequence decisions.

- Establish threshold architecture with consequence tiers. Define multiple thresholds for different consequence levels. A score triggering enhanced monitoring should differ from a score triggering investigation, which should differ from a score supporting disqualification or suspension. Each threshold requires independent justification and periodic validation.

- Assign decision authority. Specify who has authority to interpret ambiguous risk scores, who can escalate, and who makes final determinations. Decision authority must include individuals with sufficient domain and technical context to evaluate probabilistic output against operational reality.

- Design escalation paths for ambiguous ranges. Define explicit procedures for scores in boundary zones. Ambiguity must resolve through structured review — not through threshold rounding or individual judgment without governance support.

- Implement calibration monitoring. Track scoring performance against realized outcomes. Establish recalibration schedules and define triggers for emergency recalibration when significant distribution shifts, feature relevance changes, or outcome pattern breaks are detected.

- Require contextual review for high-consequence scores. Risk scores that trigger significant organizational actions must be evaluated alongside domain context, entity history, environmental conditions, and other relevant evidence. Scores interpreted in isolation produce structurally weaker decisions.

- Define suspension and termination authority. The authority to suspend automated risk scoring when governance failures are identified must be predefined, assigned, and executable without organizational delay.

- Document decision pathways. Record the complete decision chain from score output through interpretation, review, escalation, and final action. Documentation must include model version, calibration state, threshold applied, and contextual factors considered.

- Separate signal from verdict. Maintain institutional clarity that risk scores are analytical signals, not evidentiary conclusions. This separation must be reinforced through training, policy, and process design.

This framework does not eliminate scoring error. It prevents scoring error from becoming institutional failure.

Risk Scoring as Governance Architecture

Risk scores are useful. They are not objective.

Scoring systems measure pattern similarity to training data under assumed feature relationships and calibration conditions. They do not measure truth. High scores may indicate genuine elevated risk. They may also indicate training-set bias, feature obsolescence, distribution drift, or threshold artifacts.

The governance failure is not that scoring systems produce errors. All probabilistic systems produce errors. The failure is that organizations deploy scoring systems without defining how errors are identified, contained, and accounted for. The most critical governance primitive is decision authority: who can override a score, under what conditions, and with what accountability.

A risk score is a signal.

Without governance, it becomes a verdict.

The distinction is architectural — not semantic.

Risk scoring is not measurement. It is probabilistic inference under shifting assumptions.

Without governance, scores become verdicts. With governance, scores remain signals.

Risk scoring is not a tooling problem. It is a governance architecture problem. Governance does not eliminate error. It prevents error from becoming institutional failure.

Related: What Text Detection Confidence Actually Means · The Cost Illusion in Applied AI Systems