Automation Bias in Enterprise AI Systems

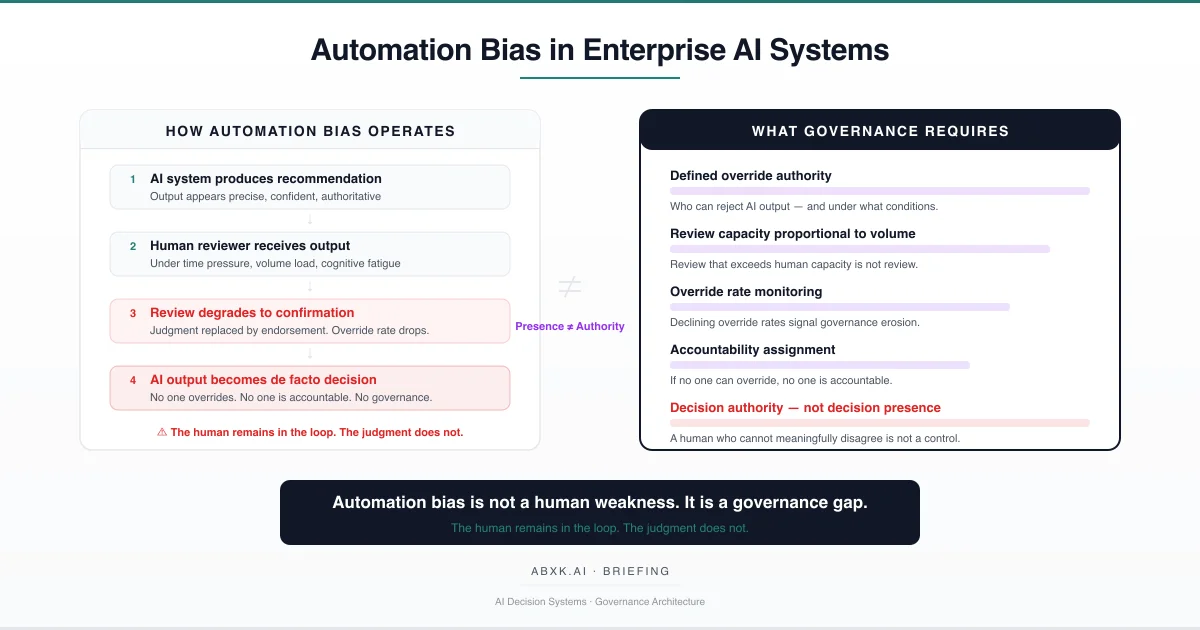

Enterprise AI systems increasingly produce recommendations, classifications, risk assessments, and operational decisions that humans are expected to review. The review architecture assumes that human presence constitutes human judgment. It does not.

In production environments, human reviewers systematically defer to AI output. Not because they are careless. Not because they lack expertise. Because the organizational architecture in which they operate makes independent judgment structurally difficult, professionally risky, and operationally unsupported.

This is automation bias. It is not a cognitive deficiency. It is a governance failure.

AI Governance defines how human judgment operates alongside automated output, and who is accountable for the resulting decision. Without governance architecture, the human-in-the-loop becomes a procedural artifact: present in the workflow diagram, absent from the decision process. Review degrades to endorsement. Endorsement carries no accountability. And the organization operates under the structural illusion that its AI decisions are human-reviewed.

Automation bias does not originate at the technical layer. It originates at the decision layer, where organizations design review roles without review authority, where override carries implicit professional cost, and where no governance structure protects the act of disagreeing with automated output.

Understanding that distinction is central to AI Risk Management, AI Security, and Responsible AI implementation in production environments.

The Mechanics of Deference

Automation bias is frequently described as a psychological tendency: humans trust machines. This framing is incomplete. It locates the failure in human cognition rather than in organizational design.

The structural mechanics are more specific. In production AI systems, automation bias operates through identifiable organizational conditions:

Output authority asymmetry. AI systems produce output with apparent precision, consistency, and speed. Human reviewers produce judgment with visible uncertainty, inconsistency, and latency. Organizations that value speed and consistency over deliberation create implicit incentives to defer to the faster, more consistent source — regardless of its actual reliability.

Volume-capacity mismatch. Production AI systems generate recommendations at computational speed. Human review operates at cognitive speed. When output volume exceeds review capacity, reviewers adapt by reducing the depth of individual assessments. Review becomes scanning. Scanning becomes pattern-matching against the AI’s own output. The reviewer confirms what the system suggests rather than evaluating what the system suggests.

Override cost asymmetry. In most enterprise environments, agreeing with AI output carries no immediate professional consequence. Disagreeing with AI output requires justification, documentation, and implicit acceptance of accountability for the alternative decision. The organizational cost of agreement is effectively zero. The organizational cost of disagreement is nonzero. Under sustained production pressure, this asymmetry compounds into systematic deference.

Expertise displacement. As AI systems handle increasing volumes of routine decisions, human reviewers lose the contextual exposure necessary to develop and maintain independent judgment. The expertise required to meaningfully evaluate AI output atrophies through disuse. The reviewer retains the role but loses the capacity. This is not a training problem. It is a structural consequence of how organizations allocate cognitive work.

These mechanics are not independent. They interact. Volume pressure reduces review depth. Reduced review depth increases deference. Increased deference reduces override frequency. Reduced override frequency signals to the organization that the AI system is performing well — which justifies further volume increases. The cycle is self-reinforcing. Governance architecture must interrupt it explicitly.
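
The volume-capacity mismatch can be made concrete with simple arithmetic. The sketch below is illustrative only: the function name and the staffing and volume figures are hypothetical, chosen to show how the time available per decision shrinks when volume grows and staffing does not.

```python
def seconds_per_decision(daily_decisions: int, reviewers: int,
                         review_hours_per_reviewer: float) -> float:
    """Average review time available per decision, in seconds."""
    if daily_decisions <= 0:
        raise ValueError("daily_decisions must be positive")
    total_seconds = reviewers * review_hours_per_reviewer * 3600
    return total_seconds / daily_decisions


# Hypothetical figures: 4 reviewers with 6 focused review hours each per day.
pilot = seconds_per_decision(300, reviewers=4, review_hours_per_reviewer=6.0)
production = seconds_per_decision(6000, reviewers=4, review_hours_per_reviewer=6.0)

print(f"pilot: {pilot:.0f}s per decision")            # pilot: 288s per decision
print(f"production: {production:.1f}s per decision")  # production: 14.4s per decision
```

Under these assumed numbers, the same team moves from nearly five minutes per decision to under fifteen seconds. The review step still exists on paper, but the depth it can support does not.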

The Fragility of Review Authority

Review authority is structurally fragile in production AI environments. It degrades along predictable dimensions:

Temporal erosion. Initial deployment often includes careful review processes, adequate staffing, and institutional attention. Over time, operational pressure compresses review timelines, reduces reviewer allocation, and normalizes deference. The review process that existed at deployment bears diminishing resemblance to the review process that operates at production scale.

Contextual deprivation. Reviewers frequently receive AI output without the contextual information necessary to evaluate it independently. A risk classification arrives without the underlying evidence. A recommendation appears without the alternative options considered. A flagged entity is presented without the features that drove the classification. Review without context is not review. It is ratification.

Accountability ambiguity. When a reviewer confirms AI output, accountability for the resulting decision is ambiguous. Did the human decide, or did the system decide? If the decision produces adverse outcomes, the reviewer bears the consequence of a decision they did not meaningfully make. This ambiguity discourages active review and encourages passive confirmation. The governance architecture assumes shared accountability. The operational reality produces accountability diffusion.

Institutional trust inflation. Organizations that deploy AI systems develop institutional narratives about system reliability. These narratives often exceed empirical justification. When organizational leadership describes AI systems as “highly accurate” or “validated,” reviewers receive implicit instruction to trust output rather than scrutinize it. Institutional trust substitutes for individual judgment — not because reviewers are weak, but because institutional signals are structurally powerful.

An endorsement rate can stay constant while its meaning changes. A review process that endorses 99 percent of AI output may indicate excellent system performance, or it may indicate that review authority has collapsed. Without governance architecture that distinguishes between these conditions, the organization cannot tell the difference.
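
One possible way to separate the two conditions is to audit a sample of reviewed decisions independently and ask how many of the AI errors found by the audit the original reviewer had overridden. The sketch below assumes such an audit exists. The `AuditedDecision` record, both function names, and the sample data are hypothetical illustrations, not a prescribed method.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class AuditedDecision:
    ai_was_correct: bool      # judged by an independent audit (assumed available)
    reviewer_overrode: bool   # what the original reviewer actually did


def endorsement_rate(sample: Sequence[AuditedDecision]) -> float:
    """Share of decisions where the reviewer accepted the AI output."""
    if not sample:
        raise ValueError("sample must not be empty")
    return sum(1 for d in sample if not d.reviewer_overrode) / len(sample)


def error_catch_rate(sample: Sequence[AuditedDecision]) -> Optional[float]:
    """Share of audited AI errors that the reviewer overrode; None if no errors."""
    errors = [d for d in sample if not d.ai_was_correct]
    if not errors:
        return None
    return sum(1 for d in errors if d.reviewer_overrode) / len(errors)


# Two hypothetical samples with the same 99 percent endorsement rate.
healthy = [AuditedDecision(True, False)] * 99 + [AuditedDecision(False, True)]
collapsed = ([AuditedDecision(True, False)] * 90
             + [AuditedDecision(False, False)] * 9
             + [AuditedDecision(False, True)])

print(endorsement_rate(healthy), error_catch_rate(healthy))      # 0.99 1.0
print(endorsement_rate(collapsed), error_catch_rate(collapsed))  # 0.99 0.1
```

Both samples show the same endorsement rate. Only the audit-based measure shows that in the second sample nine of ten AI errors passed through review unchallenged.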

Organizational Failure Patterns

Common structural failures in automation bias governance follow predictable patterns:

- Review as compliance theater. Organizations implement review processes to satisfy governance requirements without providing the conditions necessary for meaningful review. The process exists. The authority does not. Regulators observe a review step. The review step produces no independent judgment.

- Override suppression. Organizations that track override rates without contextual analysis create implicit pressure to minimize overrides. Reviewers who override frequently appear to be “disagreeing with the system” rather than “exercising governance authority.” Without institutional protection for override behavior, reviewers learn that agreement is professionally safer than judgment.

- Threshold delegation. Organizations delegate threshold selection to technical teams during deployment, then treat those thresholds as if they were settled governance decisions. When operational conditions change, threshold adjustment requires governance authority that no one has been assigned. The threshold persists because no one has the authority, or the incentive, to change it.

- Expertise concentration failure. Organizations assign AI review to personnel without the domain expertise necessary to evaluate output independently. The reviewer can verify that the system produced a score. The reviewer cannot evaluate whether the score is structurally justified. Review without evaluative capacity is a governance artifact, not a governance control.

- Escalation path absence. Reviewers who encounter ambiguous or concerning AI output have no defined path for escalation. Ambiguity resolves through individual judgment without governance support — or, more commonly, resolves through deference to the system’s recommendation. The absence of escalation architecture is not a gap. It is a structural default toward automation bias.

These are governance failures — not tooling failures. The AI systems perform as designed. The organizational structures that should interpret, validate, and gate system output are absent, insufficient, or operationally undermined.

AI Security Implications

Automation bias creates structured AI Security exposure:

- Adversarial exploitation of review gaps. When adversaries understand that human review is perfunctory, they can engineer inputs that produce favorable AI output with confidence that the output will not receive meaningful scrutiny. The review process becomes a known constraint that adversarial actors engineer around — not a barrier they must overcome.

- Alert fatigue as attack surface. AI security monitoring systems that generate high volumes of alerts create the same volume-capacity mismatch that drives automation bias in operational workflows. Analysts defer to system classifications. True threats that produce ambiguous alerts receive insufficient scrutiny. False negatives compound. The monitoring system generates data. The organizational response generates deference.

- Social engineering amplification. AI-generated recommendations carry institutional authority that human-generated recommendations do not. Adversaries who can influence AI input to produce favorable output leverage automation bias as an amplification mechanism — the AI recommends, the human confirms, the organization acts. The attack surface is not the AI system. It is the governance gap between AI output and human action.

- Override suppression as vulnerability. Organizations that suppress override behavior eliminate the error-correction mechanism that review processes are designed to provide. When reviewers cannot meaningfully disagree with AI output, the organization operates without a structural safeguard against systematic AI error — whether that error originates from model degradation, distribution shift, or adversarial manipulation.

From an AI Security perspective, automation bias does not merely degrade decision quality. It eliminates the organizational capacity to detect that decision quality has degraded. The system continues to produce output. The organization continues to act on that output. The feedback loop that would signal degradation has been structurally severed.

Compliance and Accountability Architecture

Automation bias carries specific AI Compliance obligations that extend beyond procedural documentation:

Meaningful review requirements. Regulatory frameworks increasingly require not merely that human review exists, but that human review is meaningful — that reviewers have the authority, capacity, and information necessary to exercise independent judgment. Compliance that documents a review step without validating review quality satisfies neither the intent nor the operational requirements of accountability frameworks.

Accountability assignment under automation. When AI output drives institutional decisions, accountability for those decisions must be clearly assigned. Accountability that resides with “the reviewer” is structurally incoherent when the reviewer lacks the authority, capacity, or information to exercise meaningful judgment. Compliance requires that accountability assignment reflects operational reality — not organizational aspiration.

Override documentation and protection. Compliance architecture must document not only when reviewers confirm AI output, but when — and why — they override it. Override rates that approach zero require governance investigation, not governance satisfaction. Compliance that treats low override rates as evidence of system accuracy may be documenting governance failure as governance success.

Proportionality in automated decision-making. Organizational actions driven by AI output must be proportional to the governance infrastructure supporting those decisions. High-consequence decisions require correspondingly high-quality review processes. Proportionality is not satisfied by assigning the same review process to all decision categories regardless of consequence.

Compliance is operational enforceability — not documentation. Documentation that describes review authority which does not exist operationally satisfies neither regulatory expectations nor institutional accountability requirements.
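
As an illustration of documenting why a reviewer acted and not only that a review occurred, the sketch below shows one possible shape for a review record. Every field name, the `Outcome` values, and the 30-second engagement threshold are assumptions made for this example. They are not drawn from any regulatory framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    CONFIRMED = "confirmed"
    OVERRIDDEN = "overridden"
    ESCALATED = "escalated"


@dataclass(frozen=True)
class ReviewRecord:
    decision_id: str
    reviewer_id: str
    outcome: Outcome
    rationale: str                    # why the reviewer acted as they did
    evidence_viewed: tuple[str, ...]  # context items the reviewer opened
    seconds_spent: float
    recorded_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Overrides and escalations must record a reason, not just a timestamp.
        if self.outcome is not Outcome.CONFIRMED and not self.rationale.strip():
            raise ValueError("overrides and escalations require a rationale")


def shows_engagement(record: ReviewRecord, min_seconds: float = 30.0) -> bool:
    """Rough proxy (an assumption): some evidence was opened and time was spent."""
    return bool(record.evidence_viewed) and record.seconds_spent >= min_seconds


rubber_stamp = ReviewRecord("d-1", "rev-7", Outcome.CONFIRMED, "", (), 2.0)
considered = ReviewRecord("d-2", "rev-7", Outcome.OVERRIDDEN,
                          "Source document contradicts the risk score",
                          ("source_document", "feature_summary"), 95.0)
print(shows_engagement(rubber_stamp), shows_engagement(considered))  # False True
```

The engagement check applies to confirmations as well as overrides. A record design that demanded effort only from reviewers who disagree would reproduce the override cost asymmetry described earlier.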

Production Environment Reality

Review behavior in production environments diverges systematically from review behavior in design and testing environments:

Volume scaling. Design environments model review processes at manageable volumes. Production volumes can exceed design assumptions within months of deployment. Review processes designed for hundreds of daily decisions encounter thousands. The review architecture that functioned in pilot degrades structurally at production scale.

Workflow integration pressure. Production AI output feeds downstream processes — procurement decisions, compliance reporting, customer interactions, risk assessments. Each integration creates time pressure on review. Downstream systems expect input at computational speed. Review operates at human speed. Integration pressure compresses review time, which accelerates deference.

Model version transitions. Production AI systems undergo model updates that alter output characteristics — score distributions shift, classification boundaries change, confidence calibrations reset. Reviewers trained on previous model behavior apply outdated expectations to new model output. The review capacity that existed for the previous model version does not automatically transfer to the updated version.

Monitoring obligation drift. Initial deployment includes monitoring commitments — override rate tracking, review quality audits, reviewer feedback mechanisms. Production pressure erodes these commitments over time. Monitoring becomes periodic rather than continuous. Quality audits become annual rather than quarterly. Feedback mechanisms become administrative rather than actionable.

Hybrid workflow complexity. Production environments frequently combine fully automated decisions, AI-assisted human decisions, and purely human decisions within the same operational workflow. The boundaries between these categories blur under production pressure. Decisions that should receive human review migrate into the fully automated pathway because the volume of decisions requiring review exceeds available capacity.
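
The migration described above can be made explicit in routing logic. The following is a minimal sketch, assuming a three-tier policy keyed on a consequence label. The tier names, the `POLICY` table, and the `allocate` function are hypothetical. The point it illustrates is that when full-review capacity runs out, the overflow is held for an explicit governance decision and is not rerouted into the automated pathway.

```python
from enum import Enum


class Tier(Enum):
    FULL_REVIEW = "full_review"
    ABBREVIATED_REVIEW = "abbreviated_review"
    AUTOMATED = "automated"


# Hypothetical policy table: consequence level -> required review tier.
POLICY = {
    "high": Tier.FULL_REVIEW,
    "medium": Tier.ABBREVIATED_REVIEW,
    "low": Tier.AUTOMATED,
}


def route(consequence: str) -> Tier:
    """Unknown consequence labels default to the strictest tier."""
    return POLICY.get(consequence, Tier.FULL_REVIEW)


def allocate(decisions, full_review_capacity: int):
    """Assign decisions to tiers; hold full-review overflow instead of downgrading it."""
    queues = {tier: [] for tier in Tier}
    held = []  # needs an explicit governance decision, never auto-approved
    for decision_id, consequence in decisions:
        tier = route(consequence)
        if tier is Tier.FULL_REVIEW and len(queues[tier]) >= full_review_capacity:
            held.append(decision_id)
        else:
            queues[tier].append(decision_id)
    return queues, held


queues, held = allocate(
    [("a", "high"), ("b", "high"), ("c", "low"), ("d", "unlabeled")],
    full_review_capacity=1,
)
print(queues[Tier.FULL_REVIEW], queues[Tier.AUTOMATED], held)  # ['a'] ['c'] ['b', 'd']
```

The held list makes the capacity shortfall visible to whoever owns the allocation decision, so it is not absorbed silently by the workflow.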

Structural Mitigation Framework

Addressing automation bias requires governance architecture that accounts for the structural conditions enabling deference — not merely training interventions that instruct reviewers to “think critically.” The following framework defines minimum governance requirements:

Governance Architecture for Review Authority in Automated Systems

- Define override authority before deployment. Specify who has the authority to reject AI output, under what conditions, with what documentation, and with what institutional protection. Override authority that exists only in policy — without operational support and professional protection — is not governance.

- Ensure review capacity is proportional to output volume. Review processes must be resourced to handle production volumes at the depth necessary for meaningful evaluation. When volume exceeds capacity, governance must define which decisions receive full review, which receive abbreviated review, and which proceed without review — with explicit accountability for that allocation.

- Monitor override rates as governance health indicators. Declining override rates must trigger governance investigation, not governance satisfaction. Override rate monitoring must distinguish between decreasing overrides due to improving system performance and decreasing overrides due to degrading review authority.

- Provide contextual information alongside AI output. Reviewers must receive the evidence, alternatives, and confidence indicators necessary to evaluate output independently. Output presented without context produces ratification, not review.

- Design escalation paths for ambiguous output. Define explicit procedures for cases where AI output is uncertain, contradictory, or inconsistent with domain knowledge. Ambiguity must resolve through structured governance — not through individual deference to system output.

- Protect override behavior institutionally. Reviewers who exercise override authority must be protected from implicit professional consequences. Organizations that penalize disagreement with AI output — through performance metrics, workload allocation, or institutional signaling — structurally guarantee automation bias.

- Assign suspension authority. The authority to suspend automated decision processes when review quality degrades must be predefined, assigned, and executable without organizational delay.

- Document review quality, not only review occurrence. Governance documentation must capture whether review was meaningful — not merely whether review occurred. Time-stamped confirmation without evidence of evaluative engagement does not constitute governance documentation.

- Conduct periodic review authority audits. Assess whether reviewers retain the expertise, capacity, authority, and institutional support necessary for meaningful evaluation. Review authority that existed at deployment may not persist at production scale.
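
The override-rate requirement in the list above can be sketched as a simple trend check. This is a minimal illustration under stated assumptions: the four-week windows, the 50 percent relative-drop threshold, and the 0.5 percent floor are hypothetical parameters, and a flag only triggers an investigation. It does not determine whether the cause is improving system performance or degrading review authority.

```python
from statistics import mean
from typing import Sequence


def override_rate(overrides: int, reviewed: int) -> float:
    """Share of reviewed decisions in which the reviewer rejected the AI output."""
    if reviewed <= 0:
        raise ValueError("reviewed must be positive")
    return overrides / reviewed


def needs_investigation(weekly_rates: Sequence[float],
                        baseline_weeks: int = 4,
                        recent_weeks: int = 4,
                        max_relative_drop: float = 0.5,
                        floor: float = 0.005) -> bool:
    """Flag when recent override rates are near zero or well below the baseline."""
    if len(weekly_rates) < baseline_weeks + recent_weeks:
        return False  # not enough history to compare windows
    baseline = mean(weekly_rates[:baseline_weeks])
    recent = mean(weekly_rates[-recent_weeks:])
    if recent < floor:
        return True  # overrides approaching zero
    return baseline > 0 and recent < baseline * (1 - max_relative_drop)


# Hypothetical weekly override rates since deployment.
declining = [0.08, 0.07, 0.08, 0.07, 0.05, 0.04, 0.03, 0.02]
stable = [0.08] * 8

print(needs_investigation(declining), needs_investigation(stable))  # True False
```

A flag here is an input to governance. The investigation that follows still needs independent evidence, such as audited samples, to tell the two explanations apart.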

This framework does not eliminate automation bias. It prevents automation bias from becoming institutional failure.

Automation Bias as Governance Architecture

Automation bias is predictable. It is structural. It is the expected outcome when organizations place humans inside decision workflows without defining their authority to act independently within those workflows.

The failure is not that humans trust AI systems. The failure is that organizations design review processes that make trust the path of least resistance — and make independent judgment the path of greatest organizational friction.

The human remains in the loop.

The judgment does not.

The distinction is architectural — not psychological.

Automation bias is not a training problem. It is a governance architecture problem.

Without governance, review becomes endorsement. With governance, review remains judgment.

Governance does not eliminate deference. It prevents deference from becoming institutional failure.