The AI Trap: When Technical Leverage Outpaces Structural Control

AI systems do not fail because the technology is inadequate. They fail because organizations deploy technical capabilities that exceed their structural capacity to govern.

A classification model produces outputs at a rate no human team can meaningfully review. A risk scoring system generates assessments that migrate into operational policy without governance gates. A multi-agent orchestration platform delegates decisions across autonomous components with no single point of accountability. A production model drifts from its deployment baseline while monitoring frameworks evaluate metrics that no longer reflect system behavior.

Each of these conditions is individually documented across the governance literature. Each has been analyzed as a discrete problem: a model risk issue, an oversight gap, a compliance challenge, a security vulnerability.

This briefing does not treat them as discrete problems. It treats them as structural manifestations of a single underlying condition: the asymmetry between technical leverage and institutional control.

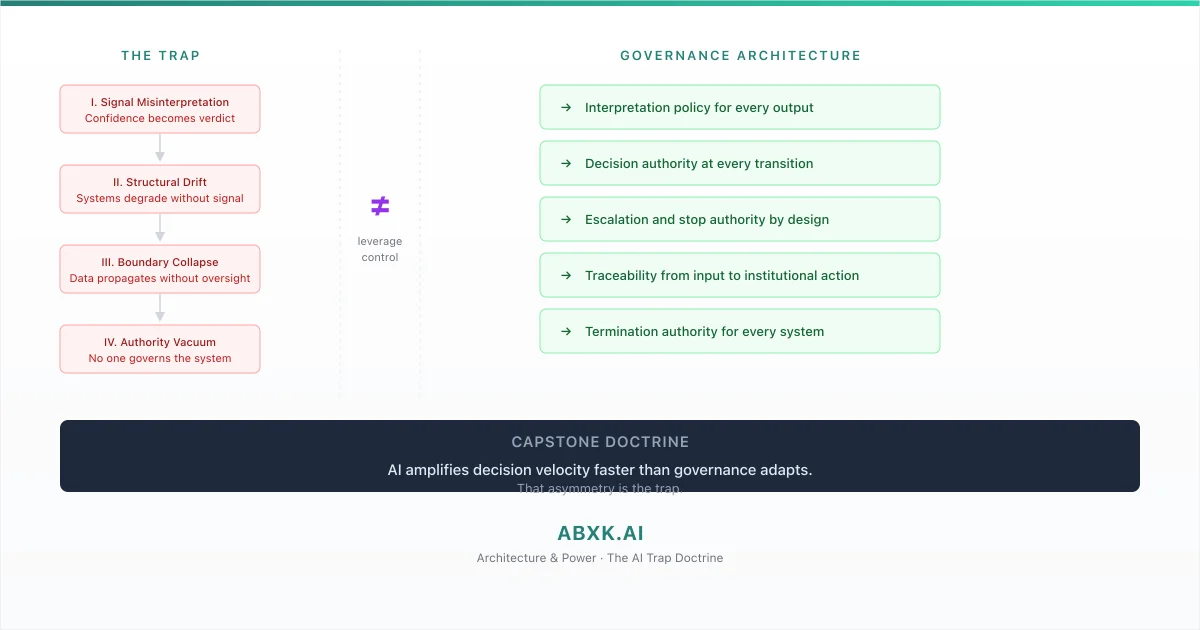

AI amplifies decision velocity faster than governance adapts. That asymmetry is the trap.

The trap does not spring when AI systems malfunction. It springs when they function exactly as designed — producing decisions at scale, at speed, and with apparent precision — while the organization lacks the governance architecture to interpret those decisions, review them, override them, or terminate the systems that produce them.

AI Governance exists to close that asymmetry — to ensure that institutional control keeps pace with technical capability. Without governance architecture, AI Risk Management addresses individual risks while systemic risk compounds. AI Security protects technical surfaces while structural surfaces remain exposed. AI Compliance documents processes while operational enforceability remains absent.

The AI Trap is not a technology problem. It is a governance architecture problem. This briefing examines its structural anatomy.

The Four Pillars of Structural Failure

The AI Trap manifests through four interdependent failure modes. Each pillar represents a category of structural risk that can arise on its own but compounds in combination with the others. Understanding the trap requires understanding all four, and recognizing that in practice they do not occur in isolation.

Pillar I: Signal Misinterpretation. AI systems produce probabilistic outputs — confidence scores, risk assessments, classification probabilities. These outputs are statistical estimates, conditional on model calibration, training distribution, and operational context. When organizations consume these outputs as verdicts rather than signals — treating a confidence score as a certainty, a risk assessment as an objective fact, a classification as a determination — they convert statistical uncertainty into institutional certainty. The decision layer fails not because the output was wrong but because the output was misinterpreted. Confidence becomes verdict. The number remains. The meaning changes.

Pillar II: Structural Drift in Production. AI systems deployed into production environments change over time — through data distribution shift, model degradation, calibration drift, and operational context evolution. These changes occur without visible signal. The system continues to produce outputs. The outputs continue to look normal. The statistical foundation beneath those outputs erodes. Organizations that deploy without continuous governance — without monitoring authority, drift detection, and revalidation architecture — operate systems whose behavior no longer reflects the conditions under which they were approved. The system remains. The foundation shifts.

Pillar III: Data and Boundary Collapse. AI systems consume, process, and propagate data across boundaries that were not designed for algorithmic processing. Training data carries lineage that governance frameworks cannot trace. Vendor defaults configure data handling without organizational authority. System outputs cross organizational boundaries and become inputs to other systems without governance review. Data boundaries that were designed for human-scale processing dissolve under AI-scale operation. The data moves. The governance does not follow.

Pillar IV: Architecture and Power. AI systems make decisions. Organizations deploy them. But the structural question — who has the authority to govern what these systems decide, who may override them, who may terminate them, and who is accountable when they fail — remains architecturally undefined. Decision authority is assumed from organizational hierarchy rather than designed into governance architecture. Decision flows cascade through multi-agent systems without gates. Human reviewers are positioned as control mechanisms without the structural capability to intervene. Authority exists on paper. Control does not exist in practice.

Each pillar creates risk independently. Together, they create the trap — a condition in which the organization’s AI systems operate beyond the reach of its governance architecture.

The Velocity Asymmetry

The structural mechanism of the AI Trap is velocity asymmetry — the widening gap between the speed at which AI systems produce decisions and the speed at which organizations can govern them.

AI decision velocity increases with each deployment. A new model enters production. It generates outputs. Those outputs feed downstream systems. The downstream systems trigger actions. The action chain executes at computational speed. Each new AI capability adds decision volume and decision speed to the organization’s operational surface.

Governance velocity does not increase proportionally. Governance runs on human judgment, organizational process, and institutional review, and these mechanisms move at human and organizational speed, orders of magnitude slower than computational speed. Adding a new AI capability requires governance to expand its scope: new interpretation policies, new threshold architectures, new authority assignments, new escalation paths, new monitoring frameworks. Each expansion requires design, review, approval, implementation, and validation. The governance expansion cycle is measured in months. The AI deployment cycle is measured in days.

The asymmetry compounds. Each deployment widens the gap between what the organization’s systems can do and what the organization can structurally govern. The first deployment creates a manageable gap. The tenth creates a significant one. By the twentieth, the organization operates a portfolio of AI systems whose collective decision surface exceeds its governance architecture’s coverage by a margin that no incremental policy update can close.
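The compounding can be made concrete with a back-of-the-envelope sketch. The numbers are illustrative assumptions, not measurements: one system ships every 10 days, and one governance expansion cycle completes every 90 days, covering one system each time. The helper name `ungoverned_systems` is hypothetical.

```python
def ungoverned_systems(days: int, deploy_interval: int = 10,
                       governance_cycle: int = 90) -> int:
    """Count systems in production that governance has not yet covered.

    Assumes (hypothetically) one deployment every `deploy_interval` days and
    one completed governance expansion every `governance_cycle` days.
    """
    deployed = days // deploy_interval
    governed = min(deployed, days // governance_cycle)
    return deployed - governed


# The gap grows linearly with time under these assumptions.
for day in (90, 180, 360):
    print(day, ungoverned_systems(day))
```

Under these assumed rates the gap is 8 systems after 90 days, 16 after 180, and 32 after 360. Changing the rates changes the slope, but the gap keeps widening as long as deployments outpace governance cycles.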

This is not a temporary condition that resolves with maturity. It is a structural dynamic that intensifies with capability. More capable AI systems produce more decisions, at higher speed, across more domains. Governance must expand faster to keep pace. It cannot — because governance requires human judgment, and human judgment does not scale at computational rates.

The asymmetry is the trap. Organizations do not fall into it by deploying bad AI systems. They fall into it by deploying good ones — systems that work as designed, at the speed they were designed for, producing the volume they were designed to produce — without building the governance architecture required to match.

How the Pillars Compound

The four pillars do not operate in isolation. They interact in ways that amplify each pillar’s individual risk into systemic institutional exposure.

Signal misinterpretation feeds structural drift. When an organization treats AI outputs as verdicts, it loses the interpretive discipline required to detect drift. A model that drifts produces outputs that still look like verdicts — the confidence score still appears precise, the risk assessment still appears definitive. The misinterpretation masks the drift. The organization continues to act on outputs whose statistical foundation has eroded because it never developed the governance architecture to distinguish between reliable signals and degraded ones.

Structural drift enables boundary collapse. A model that has drifted from its deployment baseline may produce outputs that cross data boundaries in ways the original design did not anticipate. A classification that shifts under distribution change may now apply to data categories it was not designed to process. A recommendation that changes under calibration drift may now influence operational domains it was not intended to reach. The drift creates boundary violations that no one detects because no one monitors for drift-induced boundary effects.

Boundary collapse amplifies the authority vacuum. When data and decisions propagate across systems without governance review, the question of who has authority over those decisions becomes structurally unanswerable. The decision originated in one system. It propagated through three others. It produced an action in a fifth. No single authority governs the full chain. The boundary collapse distributes the decision across governance domains that were designed independently and connected operationally without governance integration.

The authority vacuum prevents effective response to signal misinterpretation, structural drift, and boundary collapse. When no one has defined authority to override AI outputs, to halt drifting systems, or to enforce data boundaries, the organization detects problems it cannot address. Monitoring reports drift. No one has the authority to suspend the drifting model. Audit identifies boundary violations. No one has the authority to enforce boundaries across system integrations. Review reveals misinterpreted outputs. No one has the authority to change interpretation policy at operational speed.

The pillars compound into the trap: the organization has deployed AI capabilities that produce decisions across its operational surface, and the governance architecture required to interpret, review, override, and terminate those decisions does not exist at the speed, scope, or authority level required to control them.

Why Traditional Governance Does Not Close the Gap

Organizations respond to AI governance challenges by extending traditional governance mechanisms — risk registers, policy frameworks, compliance documentation, committee oversight. These mechanisms were designed for human decision processes and do not structurally address the velocity asymmetry that defines the AI Trap.

Risk registers catalog individual system risks. They do not capture cross-system risk — the compounding dynamics that emerge when multiple AI systems interact, when outputs cascade through decision flows, when boundary violations connect previously isolated risk domains. The register lists risks. The trap operates between them.

Policy frameworks define rules for AI deployment and operation. They operate at policy speed — updated annually, reviewed quarterly, applied through manual compliance processes. AI systems operate at computational speed — producing decisions continuously, evolving through drift daily, crossing boundaries with each transaction. The policy is static. The system is dynamic. The gap between them is the governance failure.

Compliance documentation records what the organization intends to do. It does not enforce what the organization actually does. A compliance framework that documents interpretation policies, threshold architectures, and escalation paths creates a governance description. It does not create governance capability. Compliance that cannot be operationally enforced at the speed of system operation is documentation, not control.

Committee oversight operates at organizational cadence — monthly meetings, quarterly reviews, annual assessments. AI systems produce consequential decisions between committee meetings. The committee reviews historical performance. The system has already evolved. The committee’s oversight applies to a system state that no longer exists.

Traditional governance closes the gap only if it is redesigned as governance architecture — structural mechanisms that operate at the speed, scope, and authority level that AI systems require. Without that redesign, traditional governance addresses the trap’s symptoms while the trap deepens.

Governance Architecture as the Structural Response

The AI Trap does not close through better AI systems, better models, or better algorithms. It closes through governance architecture — structural design that ensures institutional control keeps pace with technical capability.

Interpretation architecture. Every AI output must have a defined interpretation policy — specifying what the output means, what it does not mean, how it should be used, and what governance constraints apply to its use. Interpretation that is left to operational users without structural guidance produces misinterpretation at scale.
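As one possible illustration, an interpretation policy can be expressed as a small record that travels with the output and maps a score to a permitted use rather than a verdict. Everything here is a hypothetical sketch: the class name, field names, and cut-off values are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InterpretationPolicy:
    """Hypothetical record of what a model score may and may not be used for."""
    output_name: str
    meaning: str            # what the output means
    not_meaning: str        # what it must not be read as
    auto_action_min: float  # at or above this score, automated action is permitted
    review_min: float       # at or above this score, a human must review

    def interpret(self, score: float) -> str:
        """Map a probabilistic score to a permitted use, not a determination."""
        if not 0.0 <= score <= 1.0:
            raise ValueError("score must be a probability in [0, 1]")
        if score >= self.auto_action_min:
            return "automated_action_permitted"
        if score >= self.review_min:
            return "human_review_required"
        return "no_action"


policy = InterpretationPolicy(
    output_name="fraud_score",
    meaning="estimated probability under the validated training distribution",
    not_meaning="a finding that fraud occurred",
    auto_action_min=0.95,
    review_min=0.60,
)
print(policy.interpret(0.72))
```

The point of the design is that the meaning and the permitted use are declared once, structurally, instead of being inferred by each operational user.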

Threshold architecture. Every decision threshold must be governed — defined by a named authority, documented with rationale, reviewed on a defined schedule, and modified through a governed process. Thresholds that are set by engineering convenience and never reviewed produce governance erosion.
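A minimal sketch of a governed threshold, assuming a simple in-memory record: each threshold carries its owner, rationale, and review schedule, so overdue reviews can be detected mechanically. The names `GovernedThreshold` and `overdue_reviews`, and the example values, are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class GovernedThreshold:
    """Hypothetical threshold record with named authority and review schedule."""
    name: str
    value: float
    owner: str                 # named authority accountable for the value
    rationale: str             # documented reason for the chosen value
    last_reviewed: date
    review_interval_days: int

    def review_due(self, today: date) -> bool:
        """True once the review interval has elapsed since the last review."""
        due_on = self.last_reviewed + timedelta(days=self.review_interval_days)
        return today >= due_on


def overdue_reviews(thresholds, today: date):
    """Return the names of thresholds whose scheduled review has lapsed."""
    return [t.name for t in thresholds if t.review_due(today)]


approval_cutoff = GovernedThreshold(
    name="loan_auto_approval_cutoff",
    value=0.90,
    owner="head_of_credit_risk",
    rationale="balances approval rate against validated default rate",
    last_reviewed=date(2024, 1, 1),
    review_interval_days=90,
)
print(overdue_reviews([approval_cutoff], date(2024, 6, 1)))
```

A threshold set by engineering convenience would have no owner or rationale to record, which is exactly what this structure is meant to surface.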

Decision authority architecture. Every AI decision point must have a named authority — an individual or role with explicit responsibility for what the system decides, under what constraints, and with what accountability. Authority that is assumed from hierarchy rather than designed into architecture produces authority vacuums.

Escalation and stop architecture. Every AI system must have defined escalation triggers, escalation paths, and stop authority. Escalation that depends on individual initiative rather than structural design fails under production pressure. Stop authority that requires consensus rather than individual exercise fails at operational speed.
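The distinction between individual and consensus-based stop authority can be sketched as follows. This is an illustrative model only; `StopControl`, its fields, and the role names are hypothetical and do not describe any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class StopControl:
    """Hypothetical escalation and stop record for one AI system."""
    system_id: str
    escalation_path: dict        # trigger name -> role that must be notified
    stop_authorities: frozenset  # roles that may halt the system on their own
    halted: bool = False
    audit_log: list = field(default_factory=list)

    def escalate(self, trigger: str) -> str:
        """Resolve a trigger to its designated role; undefined triggers are an error."""
        if trigger not in self.escalation_path:
            raise KeyError(f"no escalation path defined for trigger: {trigger}")
        role = self.escalation_path[trigger]
        self.audit_log.append(("escalated", trigger, role))
        return role

    def stop(self, role: str, reason: str) -> bool:
        """Halt the system if the caller holds stop authority. No quorum is needed."""
        if role not in self.stop_authorities:
            self.audit_log.append(("stop_denied", role, reason))
            return False
        self.halted = True
        self.audit_log.append(("halted", role, reason))
        return True


control = StopControl(
    system_id="credit_scoring_v3",
    escalation_path={"drift_alert": "model_risk_officer"},
    stop_authorities=frozenset({"model_risk_officer"}),
)
role = control.escalate("drift_alert")
control.stop(role, "drift above tolerance")
print(control.halted, control.audit_log)
```

Because the trigger resolves to a role by lookup, escalation does not depend on anyone's initiative, and the stop succeeds on a single authorized call rather than a vote.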

Monitoring architecture. Monitoring must operate at the governance level, not just the performance level — tracking drift, boundary violations, authority exercises, escalation patterns, and decision flow health. Performance monitoring that reports model accuracy without governance context produces awareness without control.
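One common way to track drift is the Population Stability Index (PSI), which compares the binned distribution of a score or feature at deployment with its current distribution. The sketch below assumes pre-binned counts. The 0.10 and 0.25 cut-offs are a widely used rule of thumb rather than a standard, and the mapping from PSI value to governance action is a hypothetical example.

```python
import math


def psi(baseline_counts, current_counts, eps: float = 1e-6) -> float:
    """Population Stability Index over aligned, pre-binned counts."""
    if len(baseline_counts) != len(current_counts):
        raise ValueError("baseline and current must use the same bins")
    baseline_total = sum(baseline_counts)
    current_total = sum(current_counts)
    total = 0.0
    for b, c in zip(baseline_counts, current_counts):
        p = max(b / baseline_total, eps)  # baseline share, floored to avoid log(0)
        q = max(c / current_total, eps)   # current share, floored to avoid log(0)
        total += (q - p) * math.log(q / p)
    return total


def drift_action(psi_value: float) -> str:
    """Map PSI to a governance action (assumed rule-of-thumb cut-offs)."""
    if psi_value < 0.10:
        return "no_action"
    if psi_value < 0.25:
        return "investigate"
    return "escalate_to_stop_authority"


baseline = [250, 250, 250, 250]
current = [100, 150, 300, 450]
value = psi(baseline, current)
print(round(value, 3), drift_action(value))
```

Tying the metric to a named action is what moves this from performance monitoring to governance monitoring: the number alone produces awareness, while the mapped action produces a decision someone is authorized to take.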

Termination architecture. Every AI system must have defined termination criteria and named termination authority. A system that cannot be terminated is infrastructure without governance. Termination authority that exists in policy but cannot be exercised operationally is ceremonial.

Traceability architecture. Every decision chain — from AI input through intermediate processing to institutional action — must be traceable. Traceability that captures individual model events but not cross-system decision flows cannot support governance at the system level.
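Cross-system traceability can be sketched as events that each carry a link to the event that caused them, so an institutional action can be walked back to the originating model output. This is a minimal in-memory illustration; `TraceLog`, `TraceEvent`, and the system names are hypothetical.

```python
import uuid
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TraceEvent:
    event_id: str
    system: str
    action: str
    parent_id: Optional[str]  # the upstream event that caused this one, if any


class TraceLog:
    """Hypothetical append-only log of linked decision events."""

    def __init__(self):
        self._events = {}

    def record(self, system: str, action: str, parent_id: Optional[str] = None) -> str:
        """Store an event and return its id; the parent must already be recorded."""
        if parent_id is not None and parent_id not in self._events:
            raise KeyError(f"unknown parent event: {parent_id}")
        event_id = uuid.uuid4().hex
        self._events[event_id] = TraceEvent(event_id, system, action, parent_id)
        return event_id

    def chain(self, event_id: str):
        """Walk parent links from an action back to the originating event."""
        path = []
        current = event_id
        while current is not None:
            event = self._events[current]
            path.append(event)
            current = event.parent_id
        return list(reversed(path))


log = TraceLog()
scored = log.record("scoring_model", "risk_score_emitted")
decided = log.record("policy_engine", "account_flagged", parent_id=scored)
acted = log.record("case_system", "account_frozen", parent_id=decided)
print([e.system for e in log.chain(acted)])
```

Logging only the first event would capture a model event but not the decision flow; the parent link is what makes the chain reconstructable across systems.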

Recalibration architecture. Governance itself must be subject to continuous recalibration — evaluating whether governance mechanisms remain effective as AI capabilities evolve, as organizational context changes, and as the velocity asymmetry widens. Governance that remains static while AI capabilities grow is governance that falls behind.

This framework does not eliminate the velocity asymmetry. It prevents the velocity asymmetry from becoming institutional failure.

Doctrine Closing

The AI Trap is not a technology failure. It is a governance architecture failure.

Organizations do not fall into the trap because their AI systems are flawed. They fall into it because their governance architecture was designed for a decision velocity that no longer exists. AI systems produce decisions at computational speed. Governance operates at organizational speed. The gap between them is where institutional risk compounds — where signals are misinterpreted, where systems drift undetected, where boundaries collapse without oversight, and where authority vacuums prevent effective response.

The trap does not close through caution, through slower deployment, or through avoiding AI systems altogether. It closes through governance architecture: structural design that ensures every output has an interpretation policy, every decision point has a named authority, every escalation has a path, every system has termination authority, and every decision chain has traceability.

AI amplifies decision velocity faster than governance adapts. That asymmetry is the trap. Governance architecture is the structural response. Not to make AI systems perfect. To make their failures governable.