When AI Output Becomes Institutional Policy

AI systems in production environments generate recommendations, classifications, rankings, and operational parameters continuously. These outputs are designed to inform decisions. In practice, they increasingly replace them.

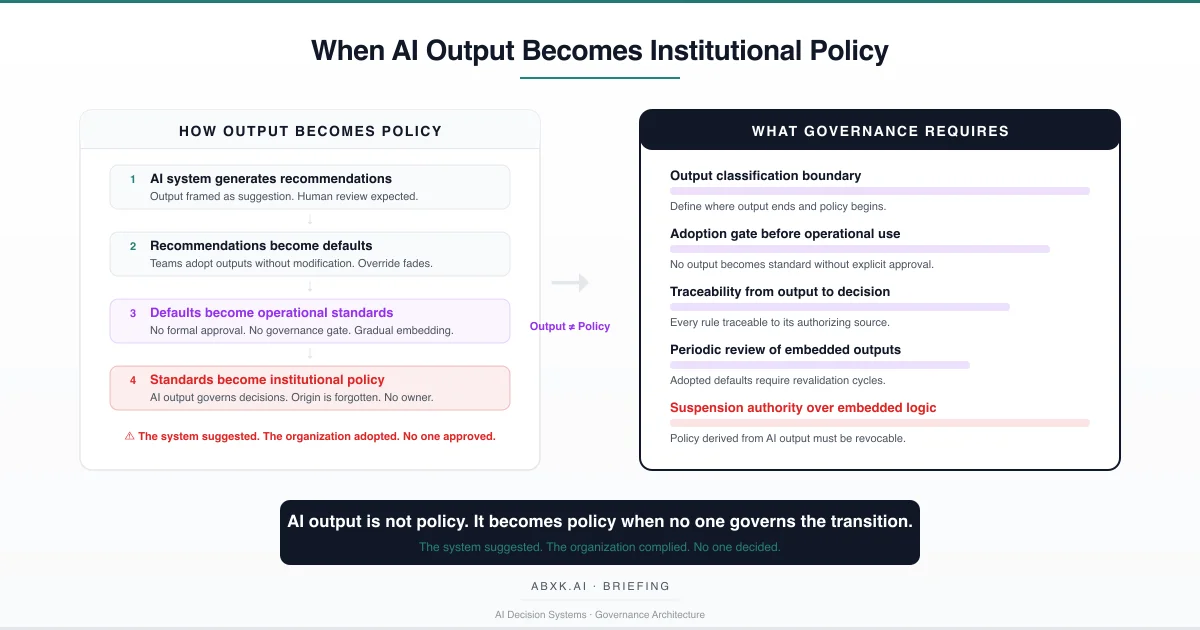

The transition is not sudden. It does not announce itself. No executive approves the moment when an AI recommendation becomes an institutional rule. No governance committee ratifies the point at which a scoring algorithm’s output begins to determine operational standards. The migration happens incrementally — through adoption without review, through repetition without challenge, through operational convenience without structural oversight.

This is output migration. It is not a technical failure. It is a governance failure.

AI Governance defines the boundary between system output and institutional authority. Without that boundary, AI recommendations migrate into operational policy without formal approval, without traceability, and without accountability. The system suggested. The organization complied. No one decided.

The failure does not occur at the technical layer. It occurs at the decision layer, where organizations consume AI output without defining where recommendation ends and policy begins.

Understanding that distinction is central to AI Risk Management, AI Security, and Responsible AI implementation in production environments.

The Mechanics of Output Migration

Output migration is not a single event. It is a structural process with identifiable stages, each of which operates below the threshold of formal governance review.

Stage one: recommendation. The AI system generates output framed explicitly as a suggestion. A personalization engine recommends content rankings. A compliance system flags transactions for review. A pricing algorithm proposes rate adjustments. At this stage, the output is advisory. Human review is expected. The organizational assumption is that the output will be evaluated before action.

Stage two: default adoption. Over time, teams adopt AI recommendations without modification. Not because governance mandates it. Because the output is consistent, available, and operationally convenient. Review becomes cursory. Override becomes rare. The recommendation is no longer evaluated — it is accepted. The output has not changed. The organizational relationship to the output has.

Stage three: operational standardization. Adopted recommendations become embedded in workflows. Teams build processes around AI-generated parameters. Downstream systems consume the output as input. The recommendation is no longer treated as a suggestion. It functions as a standard. But no formal process has elevated it to that status. No governance body has reviewed or approved the transition. The standard exists because it was never challenged — not because it was validated.

Stage four: institutional policy. The operational standard becomes the rule. New employees are trained on workflows that embed AI-derived parameters. Audit processes assume those parameters are authoritative. Budget decisions depend on AI-generated projections treated as institutional fact. The origin of the policy — an automated recommendation from a statistical inference system — is forgotten. The policy is institutional. The accountability is absent.

This four-stage migration operates in every domain where AI systems generate output that humans consume under operational pressure. The structural conditions are universal: high output volume, low review capacity, no formal boundary between suggestion and standard.

Technical Foundations of Output Embedding

Understanding why output migration occurs requires examining how AI systems produce the outputs that organizations eventually adopt as policy.

Recommendation engines, compliance classifiers, risk scoring systems, and personalization algorithms all operate on statistical inference. They produce outputs calibrated against training distributions — distributions that represent historical patterns, not current operational truth. The output is probabilistic. The precision with which it is presented obscures that conditionality.

A pricing algorithm generates a rate adjustment to three decimal places. The decimal precision suggests certainty. The underlying model operates under assumptions about demand elasticity, competitive positioning, and customer segmentation that may have shifted since the training data was collected. The number is precise. The meaning is conditional.

A compliance classifier assigns risk categories to transactions. The categories appear definitive. The classifier operates under distributional assumptions about what constitutes normal transactional behavior — assumptions that degrade as patterns evolve, as adversarial actors adapt, as business operations change. The category is assigned. The reliability is contingent.

AI output is conditioned on calibration state, feature relevance, and distribution stability. When these conditions shift, as they inevitably do in production environments, the output retains numerical precision while losing inferential reliability. Calibration drift degrades the relationship between predicted confidence and observed accuracy. Distribution shift invalidates the statistical basis on which the output was generated. Model version dependency means that output produced under one model version may not be reproducible under its successor. Feature instability means that the input signals the model relies on may lose predictive value without warning. Migration into policy converts conditional inference into institutional assumption.
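
Calibration drift can be made measurable. A common metric is expected calibration error (ECE): group predictions into confidence bins, then take the sample-weighted average gap between each bin's mean confidence and its observed accuracy. The sketch below is a minimal, dependency-free version; the ten-bin default and the example data are illustrative assumptions, not figures from any real system.

```python
from typing import List, Sequence, Tuple


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[bool],
                               n_bins: int = 10) -> float:
    """Sample-weighted mean |confidence - accuracy| over equal-width bins."""
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need non-empty inputs of equal length")
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 is placed in the top bin.
        bins[min(int(conf * n_bins), n_bins - 1)].append((conf, ok))
    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if bucket:
            mean_conf = sum(c for c, _ in bucket) / len(bucket)
            accuracy = sum(ok for _, ok in bucket) / len(bucket)
            ece += len(bucket) / total * abs(mean_conf - accuracy)
    return ece


# Illustrative only: the model reports the same 0.9 confidence in both periods,
# but the observed accuracy behind that number has fallen.
at_deployment = expected_calibration_error([0.9] * 10, [True] * 9 + [False])
after_drift = expected_calibration_error([0.9] * 10, [True] * 6 + [False] * 4)
print(f"{at_deployment:.2f} {after_drift:.2f}")  # 0.00 0.30
```

The reported confidence is identical in both periods; only a comparison against observed outcomes reveals that its meaning has changed.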

When organizations adopt these outputs as operational standards, they inherit the conditionality without acknowledging it. The statistical inference becomes institutional fact. The model assumptions become policy foundations. And when those assumptions shift, the policy continues to operate on outdated logic. The output has migrated. The governance has not followed.

Structural Fragility in Production

The structural fragility of output migration does not reside in the quality of the AI system. It resides in the absence of boundaries between output and authority.

In production environments, AI systems operate continuously. Output volume is high. Review capacity is finite. The share of output that receives meaningful evaluation declines over time. Organizations that begin with careful review of AI recommendations gradually transition to acceptance, not because review is formally abandoned, but because operational pressure makes comprehensive review impractical.

This degradation follows predictable patterns. Initial deployment includes explicit review protocols. Teams evaluate recommendations against domain expertise. Override rates are measurable and meaningful. As the system demonstrates consistency, and its outputs prove operationally useful, the threshold for triggering review rises. Teams override less frequently. Review depth decreases. The system’s track record becomes the justification for reduced scrutiny.

The structural fragility compounds when AI output flows into downstream systems. A recommendation engine’s output becomes input for a pricing system. The pricing system’s output becomes input for a revenue projection model. The projection model informs strategic planning. At each transition, the original conditionality of the AI output is further obscured. By the time the output reaches strategic decision-making, its probabilistic origin is invisible.
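
The loss of conditionality along such chains can be countered by making every hop carry the identity of the model outputs it depends on. The following is a minimal sketch under stated assumptions: `Inferred`, `derive`, and the system names are hypothetical, and a production pipeline would record this lineage in its own metadata or lineage tooling rather than in a Python object.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class Inferred:
    """A value plus the set of model outputs it was derived from."""
    value: float
    origins: frozenset = field(default_factory=frozenset)


def derive(fn: Callable[..., float], *inputs: Inferred,
           model: Optional[str] = None) -> Inferred:
    """Apply one pipeline step; union upstream origins and add this step's model, if any."""
    origins = frozenset().union(*(i.origins for i in inputs))
    if model is not None:
        origins = origins | {model}
    return Inferred(fn(*(i.value for i in inputs)), origins)


# Hypothetical chain: recommendation -> pricing -> revenue projection.
uplift = Inferred(0.12, frozenset({"recommender@v3"}))
price = derive(lambda u: round(100 * (1 + u), 2), uplift, model="pricing@v7")
projection = derive(lambda p: p * 1_000, price, model="revenue-projection@v2")
print(projection.value, sorted(projection.origins))
# 112000.0 ['pricing@v7', 'recommender@v3', 'revenue-projection@v2']
```

The projection reaches strategic planning still labelled with the three inferences it rests on, so its probabilistic origin stays visible.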

The number remains. The meaning changes. The governance never existed.

Organizational Failure Patterns

Output migration produces specific, identifiable organizational failure patterns. These are not edge cases. They are structural consequences of operating AI systems without governance boundaries.

Invisible policy creation. The most consequential failure is the creation of institutional policy without institutional authorization. When AI output migrates into operational standards, the organization acquires policies that no governance body approved, no risk assessment evaluated, and no accountability structure covers. The policy exists. The authority does not.

Untraceable decision origins. As AI-derived standards embed into workflows, the origin of operational rules becomes untraceable. Teams follow processes built on AI-generated parameters without knowing those parameters originated from automated inference. When a process fails, the organization cannot trace the failure to its source — because the source was never documented as a policy decision.

Accountability diffusion. Output migration creates a structural accountability gap. The AI system is not accountable — it generated a recommendation. The team that adopted the recommendation is not accountable — they followed operational practice. The governance function is not accountable — it was never consulted. Accountability belongs to no one because the transition from output to policy occurred outside every formal authority structure.

Resistance to correction. Once AI output has migrated into institutional policy, reversing the migration is structurally difficult. Processes depend on the embedded parameters. Downstream systems consume them as authoritative input. Teams have organized workflows around them. Correcting the policy requires not only identifying the AI-derived origin, but unwinding every process, system, and workflow that has absorbed the output as institutional fact.

These are governance failures — not tooling failures. The AI system operated as designed. The organization failed to govern the boundary between output and authority.

AI Security Implications

Output migration creates security vulnerabilities that operate at the institutional layer rather than the technical layer.

When AI output becomes institutional policy without governance gates, adversarial actors gain an indirect vector for influencing organizational behavior. Manipulating the inputs to an AI system — through data poisoning, adversarial perturbation, or distribution manipulation — becomes equivalent to manipulating institutional policy. The attack surface is not the AI system itself. The attack surface is the ungoverned transition between output and authority.

An adversary who can influence the training data of a recommendation engine does not merely alter recommendations. If those recommendations have migrated into operational standards, the adversary has altered institutional policy. The scope of the security vulnerability is proportional to the depth of output migration — and in organizations without governance boundaries, that depth is typically unmeasured.

Output migration also creates integrity vulnerabilities. When the origin of institutional policy is untraceable, the organization cannot verify that its operational rules reflect authorized decisions. Audit becomes structurally compromised. The organization cannot distinguish between policies that were formally adopted and policies that emerged through ungoverned output migration. The integrity of the institutional decision record is degraded — not because records were falsified, but because the policy creation process bypassed the record-keeping infrastructure entirely.

Security architecture must treat the boundary between AI output and institutional authority as a control surface. Without that boundary, every AI system with operational influence becomes an unmonitored policy channel.

Compliance and Accountability Implications

Compliance frameworks assume that institutional policies originate from identifiable authority. Output migration violates that assumption structurally.

When AI-derived outputs become operational standards without formal adoption, the organization cannot demonstrate that its policies were authorized by accountable decision-makers. Auditability requires traceability — from policy to authorization to rationale. Output migration eliminates the authorization step entirely. The policy exists. The authorization does not. The rationale was never documented because the decision was never consciously made.

Proportionality — the requirement that automated decisions be proportionate to their impact — becomes unmeasurable when the organization does not recognize that certain decisions are automated. A compliance classifier’s risk categories, if adopted as institutional policy without review, may impose disproportionate consequences on affected parties. But the organization cannot evaluate proportionality because it does not recognize the output as an automated decision. It recognizes the output as institutional policy.

Documentation obligations extend beyond technical system documentation. When AI output governs institutional behavior, the organization must document not only how the system generates output, but how that output transitions into operational authority. Without that documentation, compliance becomes structurally incomplete.

Institutions must be able to reconstruct not only what policy exists, but how it came into existence. AI-derived standards that lack documented authorization represent undocumented policy formation — a structural compliance deficiency independent of system accuracy. Policy provenance — the chain from AI output to adoption decision to operational enforcement — must be auditable. Where that chain is missing, the organization cannot demonstrate governance over its own rules.

Compliance is operational enforceability, not documentation alone. And enforceability requires that the organization know which of its policies originated from AI output, under what conditions those policies were adopted, and who holds authority to modify or revoke them.

Production-Environment Reality

In production environments, output migration is accelerated by integration architecture that blurs the boundary between AI-generated recommendation and operational instruction.

Transformation chains — where AI output is processed, aggregated, and reformatted before reaching decision-makers — obscure the conditional nature of the original output. A recommendation passes through data pipelines, dashboard aggregations, and reporting layers. By the time it reaches the decision-maker, it carries the authority of institutional data rather than the conditionality of statistical inference.

Hybrid workflows — where human and automated decisions interleave — create ambiguity about which operational standards are human-authorized and which are AI-derived. As workflows evolve, the proportion of AI-derived standards increases without corresponding increases in governance oversight. The workflow functions. The governance boundary has disappeared.

Model versioning introduces temporal instability. When the underlying model is updated, the operational standards derived from its previous version may no longer reflect the system’s current output. But the policies remain in effect — because they were adopted as institutional standards, not as model-version-specific recommendations. The model has changed. The policy has not. The governance gap compounds.

Vendor policy evolution creates external dependency risk. When AI systems are provided by external vendors, changes to model architecture, training data, or output calibration alter the foundation of AI-derived institutional policies. The basis of the organization’s policies changes without its knowledge, because the outputs that generated those policies have changed without governance notification.

Monitoring obligations in production environments must extend beyond system performance to include output adoption tracking. Organizations must measure not only whether the AI system functions correctly, but whether its outputs are migrating into operational authority without governance review.

Governance Architecture for Output Migration

Governing output migration requires structural controls at the boundary between AI-generated recommendation and institutional authority. The following architecture components are non-negotiable for organizations operating production AI systems with operational influence.

Output classification boundary. Every AI system output must be classified by its intended role: advisory, operational, or authoritative. Advisory outputs inform human decisions. Operational outputs drive automated workflows. Authoritative outputs determine institutional policy. The classification must be explicit, documented, and enforced. No output may transition between classifications without formal governance review.
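
The classification boundary can be enforced in code as well as in policy. The sketch below assumes a hypothetical `ClassifiedOutput` record and `reclassify` function: every output carries one of the three roles, and a change of role is refused unless it cites a governance review. The review identifier format is an illustrative assumption.

```python
from dataclasses import dataclass, replace
from enum import Enum
from typing import Optional, Tuple


class OutputRole(Enum):
    ADVISORY = "advisory"            # informs a human decision
    OPERATIONAL = "operational"      # drives an automated workflow
    AUTHORITATIVE = "authoritative"  # determines institutional policy


class UnreviewedTransition(Exception):
    """Raised when an output changes role without a governance review."""


@dataclass(frozen=True)
class ClassifiedOutput:
    system: str
    payload: str
    role: OutputRole
    review_ids: Tuple[str, ...] = ()  # audit trail of approved role changes


def reclassify(out: ClassifiedOutput, new_role: OutputRole,
               review_id: Optional[str]) -> ClassifiedOutput:
    """Return a copy with the new role, but only if a review is cited."""
    if new_role is out.role:
        return out
    if not review_id:
        raise UnreviewedTransition(
            f"{out.system}: {out.role.value} -> {new_role.value} requires governance review")
    return replace(out, role=new_role, review_ids=out.review_ids + (review_id,))


rec = ClassifiedOutput("pricing-engine", "rate +0.125", OutputRole.ADVISORY)
try:
    reclassify(rec, OutputRole.OPERATIONAL, review_id=None)
except UnreviewedTransition as err:
    print("blocked:", err)
    # blocked: pricing-engine: advisory -> operational requires governance review
approved = reclassify(rec, OutputRole.OPERATIONAL, review_id="GOV-0001")  # hypothetical ID
```

Freezing the record means a role cannot be changed by plain attribute assignment; routing every change through `reclassify` leaves a review trail on the output itself.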

Adoption gate architecture. Before any AI output becomes an operational standard, a formal adoption gate must require explicit authorization from accountable decision-makers. The gate must document the output’s origin, its statistical basis, its conditional assumptions, and the criteria under which it should be reviewed or revoked. Adoption without authorization is a governance violation — regardless of the output’s operational utility.
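
A minimal sketch of such a gate, assuming a hypothetical `AdoptionRequest` record whose fields mirror the items listed above (origin, statistical basis, conditional assumptions, review or revocation criteria) plus a named authorizer. The gate does not judge whether the output is good; it only refuses adoption when the required record is incomplete.

```python
from dataclasses import dataclass, fields
from typing import Optional


class AdoptionRejected(Exception):
    """Raised when an AI output is proposed as a standard without a complete record."""


@dataclass(frozen=True)
class AdoptionRequest:
    origin: str                          # system and model version that produced the output
    statistical_basis: str               # e.g. training window and evaluation results
    assumptions: str                     # conditions under which the output is expected to hold
    revocation_criteria: str             # when the standard must be reviewed or revoked
    authorized_by: Optional[str] = None  # named, accountable decision-maker


def adoption_gate(req: AdoptionRequest) -> AdoptionRequest:
    """Return the request unchanged if complete; otherwise raise naming the gaps."""
    missing = [f.name for f in fields(req) if not getattr(req, f.name)]
    if missing:
        raise AdoptionRejected("missing: " + ", ".join(missing))
    return req


draft = AdoptionRequest(  # hypothetical values throughout
    origin="pricing-engine, model pricing@v7",
    statistical_basis="trained on prior order history",
    assumptions="demand elasticity stable within recent range",
    revocation_criteria="review quarterly; revoke if margin falls below plan",
)
try:
    adoption_gate(draft)
except AdoptionRejected as err:
    print(err)  # missing: authorized_by
```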

Decision authority assignment. For every domain where AI output influences operational behavior, the organization must assign explicit decision authority: who can approve the adoption of AI output as operational standard, who can modify adopted standards, and who can revoke them. Decision authority must be named, not implied. Unnamed authority is absent authority.
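
Named authority can be held as data and checked mechanically. In the sketch below, the registry contents, role titles, and function names are hypothetical; the point is that a lookup for an unassigned action fails loudly instead of defaulting to whoever happens to be operating the system.

```python
from typing import Dict, List, Tuple

ACTIONS = ("approve", "modify", "revoke")

# Hypothetical registry: domain -> action -> named accountable role.
AUTHORITY: Dict[str, Dict[str, str]] = {
    "pricing": {"approve": "Head of Pricing", "modify": "Pricing Lead",
                "revoke": "Chief Risk Officer"},
    "transaction-screening": {"approve": "Chief Compliance Officer"},
}


class UnassignedAuthority(Exception):
    """Raised when no named role holds the requested authority."""


def authority_for(domain: str, action: str) -> str:
    if action not in ACTIONS:
        raise ValueError(f"unknown action: {action}")
    holder = AUTHORITY.get(domain, {}).get(action)
    if not holder:
        # Unnamed authority is treated as absent authority.
        raise UnassignedAuthority(f"no named authority to {action} in {domain!r}")
    return holder


def coverage_gaps() -> List[Tuple[str, str]]:
    """Every (domain, action) pair in the registry with no named holder."""
    return [(d, a) for d, roles in AUTHORITY.items() for a in ACTIONS if not roles.get(a)]


print(coverage_gaps())
# [('transaction-screening', 'modify'), ('transaction-screening', 'revoke')]
```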

Escalation paths for ambiguous output. When AI output falls outside expected parameters, or when the appropriate classification is unclear, escalation paths must route the output to governance review before operational adoption. Ambiguity must trigger review — not default acceptance.
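
The rule that ambiguity triggers review, rather than default acceptance, can be expressed as a routing function whose only path to the standard route is a clean pass on every check. The expected range and role labels below are illustrative assumptions, and `route_output` is a hypothetical name.

```python
from enum import Enum
from typing import List, Optional, Tuple


class Route(Enum):
    STANDARD = "standard"
    GOVERNANCE_REVIEW = "governance_review"


def route_output(value: float, expected: Tuple[float, float],
                 role: Optional[str]) -> Tuple[Route, List[str]]:
    """Send an output to governance review unless every check passes."""
    reasons = []
    low, high = expected
    # NaN fails every comparison, so it is also routed to review.
    if not (low <= value <= high):
        reasons.append(f"value {value} outside expected range [{low}, {high}]")
    if role not in {"advisory", "operational", "authoritative"}:
        reasons.append(f"classification unclear: {role!r}")
    return (Route.GOVERNANCE_REVIEW if reasons else Route.STANDARD), reasons


print(route_output(0.02, (-0.05, 0.05), "advisory"))
print(route_output(0.31, (-0.05, 0.05), None))
```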

Monitoring and adoption tracking. Organizations must monitor the rate at which AI output is adopted without modification, the depth to which AI-derived standards have embedded into downstream systems, and the frequency with which adopted standards are reviewed or challenged. Declining modification rates and expanding embedding depth are governance health indicators — not efficiency metrics.
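
Two of these indicators can be computed from data most organizations already hold. The sketch below assumes hypothetical inputs: pairs of (recommended, adopted) values for the unmodified-adoption rate, and a map of which downstream systems consume which for the embedding measure. Embedding is reported here as reach (systems affected) and hop depth (the farthest shortest path), which is one reasonable definition among several.

```python
from collections import deque
from typing import Dict, Iterable, List, Tuple


def unmodified_adoption_rate(decisions: Iterable[Tuple[float, float]],
                             tol: float = 1e-9) -> float:
    """Share of (recommended, adopted) pairs where the recommendation was kept as-is."""
    pairs = list(decisions)
    if not pairs:
        return 0.0
    kept = sum(1 for rec, adopted in pairs if abs(rec - adopted) <= tol)
    return kept / len(pairs)


def embedding(consumers: Dict[str, List[str]], source: str) -> Tuple[int, int]:
    """(downstream systems reached, max hop distance) from `source`, by breadth-first search."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in consumers.get(node, []):
            if nxt not in dist:  # the visited check also stops cycles
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return len(dist) - 1, max(dist.values())


# Hypothetical data: modification activity falls from q1 to q4.
q1 = [(0.10, 0.08), (0.12, 0.12), (0.05, 0.00), (0.20, 0.20)]
q4 = [(0.10, 0.10), (0.12, 0.12), (0.05, 0.05), (0.20, 0.18)]
print(unmodified_adoption_rate(q1), unmodified_adoption_rate(q4))  # 0.5 0.75
consumers = {"recommender": ["pricing"],
             "pricing": ["revenue-projection", "invoicing"],
             "revenue-projection": ["strategic-plan"]}
print(embedding(consumers, "recommender"))  # (4, 3)
```

Read as governance indicators, a rising unmodified-adoption rate and a growing reach both mean that more institutional behavior depends on outputs nobody is modifying.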

Suspension and termination authority. The organization must retain the ability to suspend or revoke any AI-derived operational standard without cascading system failure. If revoking an AI-derived policy would disrupt operations, the organization has exceeded its governance capacity. Dependency without revocability is structural risk.
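
One way to keep revocation possible is to require every AI-derived standard to carry a human-authorized fallback value that operations can revert to. The sketch below is illustrative; `Standard`, `suspend`, and `non_revocable` are hypothetical names, and a real deployment might implement the same idea with feature flags or configuration management.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Standard:
    name: str
    ai_value: float
    fallback: Optional[float]  # human-authorized value used while suspended
    suspended: bool = False

    def effective(self) -> float:
        # suspend() refuses standards without a fallback, so fallback is set here.
        return self.fallback if self.suspended else self.ai_value


def suspend(std: Standard) -> None:
    if std.fallback is None:
        raise RuntimeError(f"{std.name}: no fallback; suspension would disrupt operations")
    std.suspended = True


def non_revocable(standards: List[Standard]) -> List[str]:
    """Names of standards the organization cannot suspend without disruption."""
    return [s.name for s in standards if s.fallback is None]


discount = Standard("max-discount", ai_value=0.125, fallback=0.10)  # hypothetical
threshold = Standard("risk-threshold", ai_value=0.73, fallback=None)
suspend(discount)
print(discount.effective(), non_revocable([discount, threshold]))
# 0.1 ['risk-threshold']
```

A non-empty result from `non_revocable` marks exactly the dependencies that exist without revocability.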

Documentation and traceability. Every operational standard must be traceable to its authorizing source. If the source is AI output, the documentation must include the model version, the training distribution, the output date, the adoption authorization, and the review schedule. Undocumented adoption is ungoverned adoption.
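
The documentation requirement lends itself to an automated audit. The sketch below assumes a hypothetical `Provenance` record with one field per item listed above and reports, for each AI-derived standard, which fields are missing or whether no record exists at all. All identifiers and example values are illustrative.

```python
from dataclasses import dataclass, fields
from datetime import date
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Provenance:
    model_version: Optional[str]
    training_distribution: Optional[str]   # e.g. a dataset snapshot identifier
    output_date: Optional[date]
    adoption_authorization: Optional[str]  # e.g. a decision-record reference
    review_schedule: Optional[str]         # e.g. "quarterly"


def audit(standards: Dict[str, Optional[Provenance]]) -> Dict[str, List[str]]:
    """Map each AI-derived standard to its documentation gaps; complete ones are omitted."""
    findings: Dict[str, List[str]] = {}
    for name, prov in standards.items():
        if prov is None:
            findings[name] = ["no provenance record"]
            continue
        missing = [f.name for f in fields(prov) if getattr(prov, f.name) in (None, "")]
        if missing:
            findings[name] = missing
    return findings


registry = {  # hypothetical standards and values
    "max-discount": Provenance("pricing@v7", "orders-snapshot-A", date(2024, 1, 15),
                               "DR-0042", "quarterly"),
    "risk-threshold": Provenance("screening@v2", None, date(2023, 6, 1), None, "annual"),
    "invoice-terms": None,
}
print(audit(registry))
# {'risk-threshold': ['training_distribution', 'adoption_authorization'],
#  'invoice-terms': ['no provenance record']}
```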

Continuous review cycles. AI-derived operational standards require periodic revalidation against current model output, current operational conditions, and current governance requirements. Standards adopted from a previous model version, a previous data distribution, or a previous operational context must be re-authorized — not assumed valid.
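
Two of these re-authorization triggers, a changed model version and a changed data distribution, plus an elapsed review interval, can be checked mechanically. The sketch assumes a hypothetical `AdoptedStandard` record that stores the model version and data snapshot it was adopted under; changes in operational context are not modeled here and would need their own check.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class AdoptedStandard:
    name: str
    model_version: str    # model version the standard was adopted from
    data_snapshot: str    # data distribution it was validated against
    authorized_on: date
    review_every: timedelta


def reauthorization_reasons(std: AdoptedStandard, model_version: str,
                            data_snapshot: str, today: date) -> List[str]:
    """Empty list: still within its authorization. Otherwise: why it must be re-authorized."""
    reasons = []
    if std.model_version != model_version:
        reasons.append(f"adopted under {std.model_version}, current model is {model_version}")
    if std.data_snapshot != data_snapshot:
        reasons.append(f"validated on {std.data_snapshot}, current data is {data_snapshot}")
    if today >= std.authorized_on + std.review_every:
        reasons.append("review interval elapsed")
    return reasons


std = AdoptedStandard("max-discount", "pricing@v7", "orders-snapshot-A",
                      date(2024, 1, 15), timedelta(days=90))  # hypothetical
print(reauthorization_reasons(std, "pricing@v8", "orders-snapshot-A", date(2024, 3, 1)))
# ['adopted under pricing@v7, current model is pricing@v8']
```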

This framework does not eliminate migration. It prevents migration from becoming institutional failure.

Doctrine Closing

AI output becomes institutional policy not through formal decision, but through structural absence — the absence of boundaries, the absence of adoption gates, the absence of accountability for the transition from recommendation to rule.

The system performs as designed. The output is generated as intended. The failure is not technical. It is architectural.

Output migration is not a tooling problem. It is a governance architecture problem.

Governance does not prevent AI systems from generating output. It prevents that output from governing institutions without authorization. It does not make systems perfect. It makes failures survivable.