AI Lifecycle Without Termination Authority

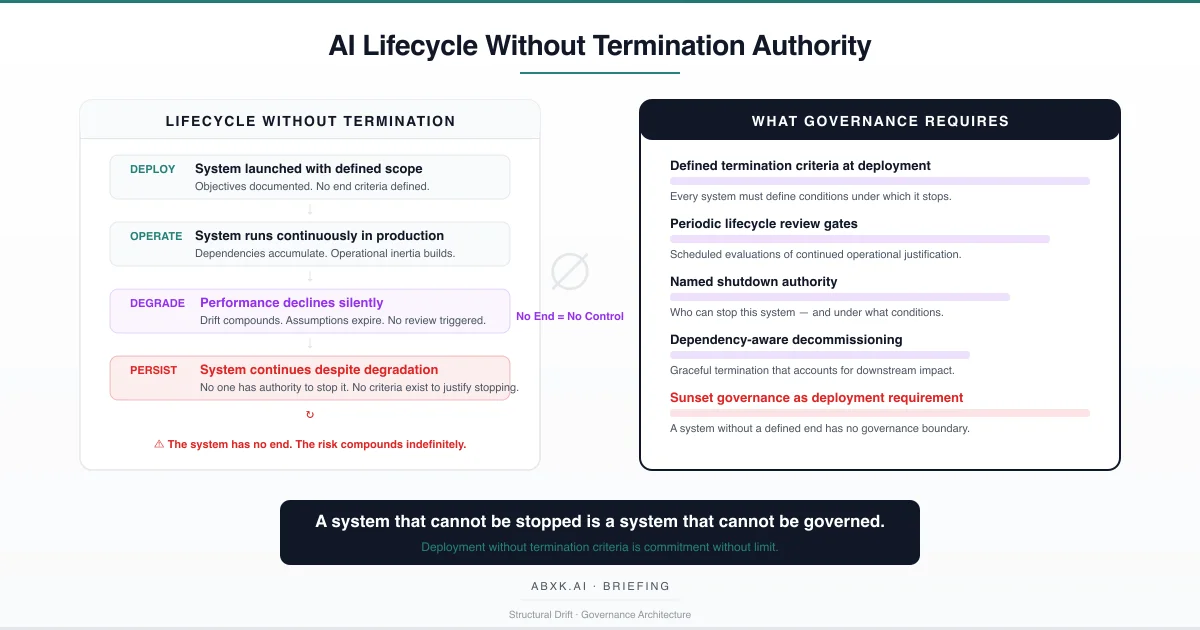

AI systems are deployed with launch criteria. They are rarely deployed with end criteria. Organizations define what a system must achieve to be approved for production. They do not define what a system must fail to achieve — or what conditions must change — for production to be revoked.

This asymmetry is not an oversight. It is a structural pattern. Deployment is an event with defined gates, authorization requirements, and accountability. Termination is undefined: an open question that, in most organizations, no governance framework addresses, no lifecycle architecture mandates, and no decision authority owns.

The result is systems that persist beyond their operational validity. Models that have drifted beyond their calibration boundary continue to operate because no threshold triggers review. Systems whose original business justification has expired continue to run because no lifecycle gate requires revalidation. Infrastructure that was deployed for a specific purpose accumulates dependencies and becomes permanent — not because it was validated for permanence, but because no one has the authority or the criteria to stop it.

This is not a technical failure. It is a governance failure.

AI Governance defines how systems operate under accountability across their full lifecycle — including the conditions under which they end. Without termination authority, production AI systems operate on an indefinite timeline, accumulating structural risk that compounds with every cycle of unreviewed operation.

The AI lifecycle does not fail at the deployment layer. It fails at the termination layer — where no criteria exist, no authority is assigned, and no governance mechanism can end what was started.

Understanding that distinction is central to AI Risk Management, AI Security, and Responsible AI implementation in production environments.

The Technical Foundations of Indefinite Operation

AI systems are statistical inference machines. Their validity is conditioned on the assumptions under which they were built: the training distribution, the calibration state, the feature relevance, the operational context. These assumptions have a temporal boundary. They hold under the conditions that existed when the system was developed and validated. They do not hold indefinitely.

Calibration drift degrades the relationship between predicted confidence and observed accuracy over time. A model that was well-calibrated at deployment becomes progressively miscalibrated as the operating environment evolves. Distribution shift alters the statistical basis on which the model was trained — input patterns change, outcome frequencies shift, correlational structures weaken or invert. Feature instability means that the input signals the model relies on may lose predictive relevance without generating a detectable alert.
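
Calibration drift becomes reviewable only once it is measured. A minimal sketch follows, assuming expected calibration error (ECE) with equal-width confidence bins as the metric; the function name, the bin count, and the sample data are illustrative assumptions, not something the text prescribes.

```python
def expected_calibration_error(confidences, outcomes, n_bins=10):
    """Equal-width-bin ECE: weighted mean of |accuracy - mean confidence| per bin.

    confidences: predicted confidence for each prediction, each in [0, 1].
    outcomes:    1 if the prediction turned out correct, 0 otherwise.
    """
    if len(confidences) != len(outcomes):
        raise ValueError("confidences and outcomes must have the same length")
    if not confidences:
        raise ValueError("at least one prediction is required")
    if any(c < 0.0 or c > 1.0 for c in confidences):
        raise ValueError("confidences must lie in [0, 1]")

    # Group predictions by confidence bin; a confidence of 1.0 goes in the last bin.
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, outcomes):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, hit))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        mean_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(h for _, h in bucket) / len(bucket)
        ece += (len(bucket) / total) * abs(accuracy - mean_conf)
    return ece


# Hypothetical data: the model reports 90% confidence on every prediction.
at_deployment = expected_calibration_error([0.9] * 10, [1] * 9 + [0])      # 9 of 10 correct
after_drift = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)    # 5 of 10 correct
```

In this made-up example the reported confidence never changes while the observed accuracy falls, which is the pattern the paragraph describes: the gap between the two grows even though the output looks the same.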

These degradation processes are not exceptional. They are structural properties of inference systems operating in non-stationary environments. Every model deployed into production begins to degrade from the moment it encounters operational data. The degradation is continuous, cumulative, and — absent monitoring — invisible.
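
One commonly used signal for distribution shift is the population stability index (PSI), which compares how a feature was distributed across bins at validation time with how it is distributed in production. The sketch below is an assumption about how such monitoring could look; the function name, the `eps` floor for empty bins, and the counts are hypothetical.

```python
import math


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI over pre-binned counts: sum of (a - e) * ln(a / e) over bin shares."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("both distributions must use the same bins")
    expected_total = sum(expected_counts)
    actual_total = sum(actual_counts)
    if expected_total <= 0 or actual_total <= 0:
        raise ValueError("each distribution needs at least one observation")

    psi = 0.0
    for expected, actual in zip(expected_counts, actual_counts):
        # Floor the shares so an empty bin does not produce log(0) or division by zero.
        e_share = max(expected / expected_total, eps)
        a_share = max(actual / actual_total, eps)
        psi += (a_share - e_share) * math.log(a_share / e_share)
    return psi


# Hypothetical feature, two bins: evenly split at validation, skewed in production.
baseline_psi = population_stability_index([50, 50], [50, 50])
shifted_psi = population_stability_index([50, 50], [80, 20])
```

Practitioners often quote rule-of-thumb PSI thresholds around 0.1 and 0.25, but those are conventions, not guarantees. Any threshold used as a review trigger would have to be set per system and per feature.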

A system with defined termination criteria can be evaluated against those criteria. A system without termination criteria cannot be evaluated for termination — because no standard exists against which to measure the decision. The system continues not because it has been validated for continued operation, but because no governance mechanism requires the validation.

The output remains precise. The calibration has shifted. The distribution has changed. The features have drifted. The system persists. The governance gap compounds with time.

Structural Fragility of Indefinite Systems

The structural fragility of systems without termination authority does not reside in their initial quality. It resides in the accumulation of ungoverned operational time.

Every cycle of operation without lifecycle review adds to the system’s structural risk. Dependencies accumulate as downstream systems consume the model’s output. Operational workflows integrate the system’s logic into institutional processes. Personnel turnover erodes the organizational knowledge of the system’s original assumptions, limitations, and intended scope. Documentation that was adequate at deployment becomes stale as the operational context evolves.

This accumulation follows a predictable pattern. In the first months of operation, the system’s assumptions remain largely valid. The deployment team retains institutional knowledge. Dependencies are limited. The system could be terminated with minimal disruption. Over time, each of these conditions degrades. Assumptions expire. Knowledge dissipates. Dependencies multiply. Termination cost increases.

The fragility is compounded by what might be called operational normalization: the organizational tendency to treat a running system as a validated system. If the system has been operating for two years without a reported failure, the organization interprets continuity as confirmation. It is not confirmation. It is the absence of evaluation. The system may have degraded significantly without producing a failure visible enough to trigger attention. The degradation is present. The evaluation has never been conducted.

A system that has never been evaluated for termination has never been validated for continuation. These are not equivalent.

Organizational Failure Patterns

Organizations that deploy AI systems without termination authority exhibit specific failure patterns that compound over the system’s operational lifetime.

Perpetual deployment default. The most fundamental failure is the absence of a termination decision point. Systems are deployed with go/no-go gates. They operate without continue/stop gates. The default state of a deployed system is perpetual operation — not because perpetual operation has been justified, but because no mechanism exists to require justification. The system runs because stopping requires a decision that no governance framework triggers.

Ownership erosion. Over time, the original team that deployed and understood the system moves on — through reorganization, attrition, or role changes. The system continues to operate, but the organizational knowledge of its assumptions, limitations, and intended scope dissipates. New operators inherit a running system without the context to evaluate its continued validity. The system has an operator but no owner. Operation is maintained. Accountability has vanished.

Dependency entanglement. As a system operates without lifecycle review, downstream consumers integrate its output into their own processes. Reports depend on its metrics. Workflows route through its decisions. Other models consume its output as input. Each dependency is created incrementally, without coordinated governance assessment. By the time the organization considers terminating the system, the dependency web is complex enough that termination would disrupt operations that no longer recognize their dependence on the original system.

Cost inversion. In a well-governed lifecycle, the cost of termination is lower than the cost of ungoverned continuation — because termination prevents the accumulation of structural risk. Without lifecycle governance, the relationship inverts. Termination becomes more expensive than continuation because dependencies, workflows, and institutional processes have been built on the system’s output. The organization continues operating a degraded system because stopping it is more disruptive than tolerating its degradation. The cost of governance failure becomes the justification for continued governance failure.

Risk invisibility. Systems without termination criteria do not generate governance signals. They do not trigger review. They do not surface degradation. They do not flag assumption expiration. The risk they represent is invisible — not because it does not exist, but because no governance mechanism is designed to detect it. The organization’s risk register reflects systems that have reported problems. It does not reflect systems that have never been evaluated.

These are governance failures — not tooling failures. The systems operated as deployed. The organization failed to define when operation should end.

AI Security Implications

Systems without termination authority create security exposure that compounds with operational duration.

A system that operates indefinitely on an aging codebase, an outdated model architecture, and a stale security posture becomes an expanding attack surface. Security vulnerabilities discovered after deployment may not be patched if the system lacks an active owner. Access control policies defined at deployment may not reflect the current organizational structure. Data handling assumptions established during development may not comply with current security requirements.

The security risk is not limited to the system itself. Systems without lifecycle governance propagate their security posture to every downstream consumer. A downstream system that depends on the output of an ungoverned upstream model inherits the upstream model’s security exposure — without visibility into the upstream model’s governance status. The dependency chain becomes a vulnerability chain.

Adversarial actors who identify systems operating without lifecycle governance gain a structural advantage. These systems are predictable — their behavior reflects training data and model architectures that have not been updated. Their defenses reflect security postures that have not been reassessed. And their institutional authority — the weight their output carries in organizational decisions — has not been adjusted to reflect their degraded state. The adversary exploits not the system’s technical weakness, but the organization’s governance gap.

Where AI output has migrated into institutional policy, termination failure becomes policy permanence without authorization. The system persists. The policy persists. The governance gap persists.

A system without termination authority is a system without a security lifecycle. Security is not a deployment property. It is a continuous obligation. And obligations that are never reviewed are obligations that are never met.

Compliance and Accountability Implications

Compliance frameworks typically require that automated systems operate under continuous governance, not only under a one-time deployment approval. Systems without termination authority structurally violate that requirement.

A system operating beyond its validated parameters — through drift, assumption expiration, or contextual change — is operating outside its compliance boundary. The compliance documentation reflects the system’s state at deployment. The system’s actual state reflects years of ungoverned operation. The documentation is accurate for a system that no longer exists.

Auditability requires that organizations demonstrate that their production systems operate under current governance. A system that has never undergone lifecycle review cannot demonstrate current governance — because no review has been conducted. The audit trail shows deployment approval. It does not show continuation approval, because continuation was never a governed decision.

Proportionality requires that the scope and impact of automated decisions remain proportionate to the governance applied to them. A system that has accumulated dependencies and expanded its operational footprint beyond its original scope operates with disproportionate impact relative to its governance architecture, which was designed for initial deployment, not for the institutional reach the system has acquired through ungoverned expansion.

Accountability requires that someone be responsible for every operational system. Systems without lifecycle governance frequently have no accountable owner: the original team has moved on, the governance function was never notified, and the operations team maintains the system without authority to evaluate its continued validity. The system runs. The accountability does not exist.

Compliance is operational enforceability — not documentation. And enforceability requires that organizations can demonstrate continuous governance across a system’s full lifecycle, including the conditions under which the lifecycle ends.

Production-Environment Reality

In production environments, the absence of termination authority is accelerated by structural conditions that favor continuation over evaluation.

Integration pressure drives systems toward permanence. Once a system is integrated into production workflows, every downstream dependency creates pressure to maintain it. Termination requires not only stopping the system but managing the impact on every dependent process. Integration pressure converts temporary deployments into permanent infrastructure — not through governance authorization, but through operational inertia.

Transformation chains obscure the system’s role in institutional decisions. When a model’s output passes through data pipelines, aggregation layers, and reporting systems, its origin becomes invisible to the decision-makers who ultimately depend on it. Terminating a system that is invisible to its consumers requires organizational archaeology — tracing output through transformation chains to identify every downstream dependency.

Hybrid workflows blend human and automated decisions in ways that make the system’s contribution unrecognizable. If a system is terminated, the human elements of the hybrid workflow must absorb the automated function — but the workflow was not designed for that absorption. The hybrid architecture assumes the system’s continuous availability.

Model versioning creates the illusion of lifecycle governance. When a model is updated, the organization may interpret the update as lifecycle management. It is not. Model updating replaces one version with another. Lifecycle governance evaluates whether the system should continue operating at all. Version updates without lifecycle review perpetuate the system’s operation without evaluating its continued justification.

Vendor dependency introduces external lifecycle risk. Systems built on vendor-provided components inherit the vendor’s lifecycle decisions. If the vendor discontinues a component, the organization faces forced termination without governance preparation. If the vendor continues the component, the organization inherits the vendor’s implicit assumption that the component should persist — an assumption that may not align with the organization’s governance requirements.

Monitoring obligations for systems without termination authority must include lifecycle indicators: assumption validity tracking, dependency growth measurement, ownership continuity verification, and operational justification assessment. These are not performance metrics. They are governance health indicators for systems operating on indefinite timelines.
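
The four lifecycle indicators named above can be recorded as a small data structure and checked on a schedule. This is a sketch under stated assumptions: the field names, the 365-day staleness limit, and the dependency growth ratio are hypothetical values that an organization would set for itself.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class LifecycleIndicators:
    assumptions_last_validated: date     # assumption validity tracking
    justification_last_assessed: date    # operational justification assessment
    dependencies_at_deployment: int      # baseline for dependency growth
    dependencies_now: int
    accountable_owner: Optional[str]     # ownership continuity


def governance_health_findings(
    ind: LifecycleIndicators,
    today: date,
    max_age_days: int = 365,             # assumed staleness limit
    max_dependency_growth: float = 2.0,  # assumed growth ratio limit
) -> List[str]:
    """Return governance findings; an empty list means no indicator fired."""
    findings = []
    if (today - ind.assumptions_last_validated).days > max_age_days:
        findings.append("assumption validity not recently checked")
    if (today - ind.justification_last_assessed).days > max_age_days:
        findings.append("operational justification not recently assessed")
    baseline = max(ind.dependencies_at_deployment, 1)  # avoid division by zero
    if ind.dependencies_now / baseline > max_dependency_growth:
        findings.append("dependency growth exceeds limit")
    if not ind.accountable_owner:
        findings.append("no accountable owner on record")
    return findings


# Hypothetical system: untouched for two years, more consumers, owner gone.
neglected = LifecycleIndicators(date(2022, 3, 1), date(2022, 3, 1), 2, 9, None)
findings = governance_health_findings(neglected, today=date(2024, 3, 1))
```

None of these checks looks at accuracy or latency. A system could pass every performance dashboard and still return all four findings.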

Governance Architecture for AI Lifecycle Termination

Governing the AI lifecycle requires structural controls that span from deployment through termination. The following architecture components represent the structural minimum for organizations operating production AI systems.

Termination criteria at deployment. Every system deployed into production must define the conditions under which it will be terminated. Termination criteria may include performance thresholds, assumption validity boundaries, business justification expiration, or maximum operational duration without lifecycle review. Criteria must be defined at deployment — not retroactively — because retroactive criteria are influenced by the operational inertia they are meant to govern.
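
As an illustration, the criteria types listed here could be written down at deployment as an immutable record and evaluated later against observed values. The class, the field names, and the numbers below are hypothetical; the point of the sketch is only that the criteria are fixed before operational inertia can influence them.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)  # frozen: criteria are set at deployment, not edited afterwards
class TerminationCriteria:
    min_accuracy: float               # performance threshold
    max_calibration_error: float      # assumption validity boundary
    justification_expires: date       # business justification expiration
    max_days_between_reviews: int     # maximum duration without lifecycle review


def triggered_criteria(
    criteria: TerminationCriteria,
    accuracy: float,
    calibration_error: float,
    last_review: date,
    today: date,
) -> List[str]:
    """Return the termination criteria that currently fire (empty if none)."""
    triggered = []
    if accuracy < criteria.min_accuracy:
        triggered.append("performance below threshold")
    if calibration_error > criteria.max_calibration_error:
        triggered.append("calibration outside validated boundary")
    if today > criteria.justification_expires:
        triggered.append("business justification expired")
    if (today - last_review).days > criteria.max_days_between_reviews:
        triggered.append("maximum duration without lifecycle review exceeded")
    return triggered


# Hypothetical criteria recorded on the day of deployment.
criteria = TerminationCriteria(
    min_accuracy=0.85,
    max_calibration_error=0.05,
    justification_expires=date(2025, 1, 1),
    max_days_between_reviews=180,
)
fired = triggered_criteria(criteria, 0.80, 0.02, date(2024, 1, 1), date(2025, 2, 1))
```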

Periodic lifecycle review gates. Every production system must undergo periodic lifecycle review — a structured evaluation of whether the system’s continued operation is justified by current performance, current assumptions, and current business requirements. Lifecycle review is not a performance review. It is a governance evaluation of whether the system should continue to exist. The review must include the explicit option of termination.

Named shutdown authority. For every production system, the organization must assign a named shutdown authority — a role or function with the explicit power to terminate the system. Shutdown authority includes the mandate to evaluate termination criteria, the competence to assess downstream impact, and the authorization to execute termination. A system without named shutdown authority is a system without governance capacity.

Escalation paths for lifecycle ambiguity. When lifecycle review produces ambiguous results — when the system is not clearly justified but not clearly failing — escalation paths must route the assessment to governance review. Ambiguity must trigger governance escalation, not operational default to continuation. A system that continues because the decision was ambiguous has been continued by default, not by authority.
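
The escalation rule can be expressed as a decision function in which no branch falls through to continuation. The sketch below is one possible encoding, not a prescribed procedure: the outcome names and the use of `None` to represent an undecided justification are assumptions made for illustration.

```python
from enum import Enum
from typing import List, Optional


class ReviewOutcome(Enum):
    CONTINUE = "continue"
    TERMINATE = "terminate"
    ESCALATE = "escalate"


def lifecycle_review(
    triggered: List[str],
    justification_confirmed: Optional[bool],
) -> ReviewOutcome:
    """Decide a lifecycle review gate.

    triggered:               termination criteria that currently fire.
    justification_confirmed: True if reviewers affirmed the system is still
                             justified, False if they did not, None if they
                             could not reach a conclusion.
    """
    if triggered:
        return ReviewOutcome.TERMINATE
    if justification_confirmed is True:
        return ReviewOutcome.CONTINUE
    # Not clearly failing and not clearly justified: route to governance
    # review instead of continuing by default.
    return ReviewOutcome.ESCALATE


ambiguous = lifecycle_review(triggered=[], justification_confirmed=None)
```

Continuation is returned only when someone has affirmatively confirmed the justification. Every other path ends in termination or escalation.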

Dependency-aware decommissioning. Termination of a production system must include a dependency impact assessment. Every downstream consumer of the system’s output must be identified, notified, and provided with a transition plan. Decommissioning without dependency awareness is operational disruption masquerading as governance action.
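
Identifying every downstream consumer is a graph traversal problem once dependencies are recorded. A minimal sketch, assuming the organization maintains a map from each system to its direct consumers; the map, the system names, and the function name are hypothetical.

```python
from collections import deque
from typing import Dict, List


def downstream_consumers(consumers_of: Dict[str, List[str]], system: str) -> List[str]:
    """Breadth-first list of every direct and indirect consumer of `system`."""
    seen = set()
    order = []
    queue = deque([system])
    while queue:
        current = queue.popleft()
        for consumer in consumers_of.get(current, []):
            # Skip consumers already visited and cycles back to the starting system.
            if consumer not in seen and consumer != system:
                seen.add(consumer)
                order.append(consumer)
                queue.append(consumer)
    return order


# Hypothetical dependency map: each key lists the systems that consume its output.
dependency_map = {
    "churn_model": ["retention_report", "pricing_model"],
    "pricing_model": ["quote_workflow"],
    "quote_workflow": ["churn_model"],  # feedback loop back to the source
}
impacted = downstream_consumers(dependency_map, "churn_model")
```

The traversal is only as complete as the map behind it. Dependencies that were created incrementally and never recorded will not appear, which is why the inventory has to be maintained during operation and not assembled at decommissioning time.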

Sunset governance as deployment requirement. The governance architecture must require that every deployment includes a sunset plan — a defined process for evaluating, authorizing, and executing system termination. The sunset plan must be documented at deployment and reviewed at each lifecycle review gate. A system deployed without a sunset plan has been deployed without a governance boundary.

Documentation and traceability. Lifecycle records must document every review gate, every continuation decision, every termination assessment, and every decommissioning action. The organization must be able to reconstruct the governance history of any system — from deployment through termination — and demonstrate that continuation was an authorized decision at every lifecycle stage.

Continuous justification obligation. Systems must not operate under the assumption that deployment authorization extends indefinitely. Continued operation requires continued justification — periodic, documented, and governed. A system that has not been recently justified has not been recently governed.

This framework does not eliminate lifecycle risk. It prevents lifecycle risk from becoming institutional failure.

Doctrine Closing

AI systems are deployed with defined beginnings and undefined endings. The asymmetry is structural. Deployment requires authorization. Continuation requires nothing. Termination requires criteria, authority, and governance mechanisms that most organizations have never defined.

A system that cannot be stopped is a system that cannot be governed. Governance requires the capacity to intervene at every lifecycle stage — including the capacity to end.

AI lifecycle governance is not a tooling problem. It is a governance architecture problem. Governance does not make systems permanent. It makes continuation a governed decision and termination a structural capability.