Why Most AI Data Protection Strategies Fail at the Decision Layer

Most AI data protection strategies do not fail because encryption is weak. They fail because decision authority is undefined.

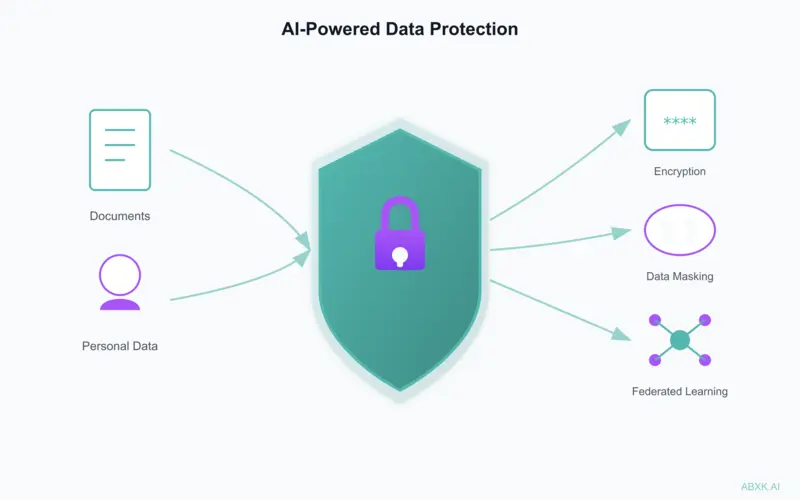

Organizations deploy AI controls — masking, access management, anonymization — yet exposure persists.

The weakness is rarely technical. It is architectural.

The Structural Misunderstanding

AI data protection is frequently treated as a tooling problem:

- Add encryption

- Add data masking

- Add access controls

- Enable privacy settings

These measures are necessary. They are not sufficient.

The primary failure occurs before deployment: organizations do not define what data may enter AI systems, under what authority, with what review process, or under what rollback criteria.
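One way to make those four decisions explicit is to record them as data that can be checked before any tool is rolled out. The sketch below is a minimal illustration, not a prescribed schema: the classification levels, the role names such as `privacy-officer`, and the rollback wording are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Classification(Enum):
    # Hypothetical four-level scheme; substitute the organization's own labels.
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"
    RESTRICTED = "restricted"


@dataclass(frozen=True)
class BoundaryRule:
    allowed: bool             # may this class of data enter an AI system?
    authority: str            # named role that owns the decision (assumed names)
    reviewer: Optional[str]   # role that reviews each input, if review is required
    rollback_criteria: str    # condition under which usage is rolled back


POLICY = {
    Classification.PUBLIC: BoundaryRule(True, "data-owner", None, "vendor terms change"),
    Classification.INTERNAL: BoundaryRule(True, "security-lead", "team-lead", "any confirmed leak"),
    Classification.CONFIDENTIAL: BoundaryRule(False, "privacy-officer", None, "not applicable"),
    # RESTRICTED is deliberately left undefined to show the default-deny path.
}


def decide(classification: Classification) -> str:
    """Return 'allow', 'review:<role>' or 'deny' for a class of data."""
    rule = POLICY.get(classification)
    if rule is None or not rule.allowed:
        return "deny"  # undefined authority is treated as a denial, not a default allow
    if rule.reviewer:
        return f"review:{rule.reviewer}"
    return "allow"
```

The design choice worth noting is the default: a data class with no rule is denied, so a gap in the policy surfaces as a blocked request rather than as silent exposure.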

Where Exposure Begins

In production environments, exposure commonly emerges through:

Undefined Data Boundaries

Employees paste internal material into external AI systems without classification review.

Delegated Judgment

Teams rely on default platform settings instead of explicit organizational policy.

Uncontrolled Iteration

AI tools are integrated into workflows before risk tolerance is defined.

Hybrid Workflows

Outputs generated from sensitive inputs are redistributed without traceability.

In each case, the technical system functions as designed. The governance layer does not.
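The traceability gap in hybrid workflows can be illustrated with a small provenance record. This is a sketch under stated assumptions: the sensitivity ordering, the `SourceRef` and `TracedOutput` types, and the rule that an output inherits the level of its most sensitive input are all hypothetical choices for the example, not an established standard.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical sensitivity ordering, lowest to highest.
LEVELS = ["public", "internal", "confidential", "restricted"]


@dataclass(frozen=True)
class SourceRef:
    doc_id: str   # identifier of an input document (assumed to exist upstream)
    level: str    # one of LEVELS


@dataclass(frozen=True)
class TracedOutput:
    text: str
    sources: Tuple[str, ...]   # doc_ids of every input that contributed
    level: str                 # highest sensitivity among the inputs


def trace_output(text: str, inputs: List[SourceRef]) -> TracedOutput:
    """Attach provenance so the output inherits its most sensitive input's level."""
    if not inputs:
        raise ValueError("an output with no recorded inputs cannot be traced")
    highest = max(inputs, key=lambda s: LEVELS.index(s.level))
    return TracedOutput(text, tuple(s.doc_id for s in inputs), highest.level)


def may_redistribute(output: TracedOutput, audience_level: str) -> bool:
    """Allow sharing only with audiences cleared for the output's level."""
    return LEVELS.index(audience_level) >= LEVELS.index(output.level)
```

For example, a summary built from one public and one confidential document would carry the level `confidential`, and `may_redistribute` would refuse an audience cleared only for `internal`.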

Technical Controls Are Not Governance

Technologies such as data masking, differential privacy, federated learning, homomorphic encryption, and synthetic data generation address specific risk surfaces.

They do not define:

- Who approves data entry

- Who monitors exposure drift

- Who audits model retraining behavior

- Who has authority to suspend usage

The Illusion of Compliance

Many organizations equate regulatory alignment with operational control.

Compliance artifacts do not equal operational discipline. Documentation exists. Policies are written. Risk assessments are filed.

Yet employees still:

- Upload confidential documents into public AI interfaces

- Store prompts containing personal data in shared logs

- Use AI-generated output derived from restricted material

Compliance frameworks do not enforce decision discipline. Only operational structure does.

The Critical Layer: Decision Authority

Effective AI data protection requires explicit answers to five questions:

- Who defines acceptable data categories for AI use?

- Who reviews high-risk data inputs?

- Who monitors boundary violations?

- Who evaluates vendor model training policies?

- Who has authority to suspend system use?

If these questions do not have named owners, exposure is only a matter of time.
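Whether each question has a named owner is simple to check mechanically. The following is a minimal sketch: the decision keys paraphrase the five questions above, and the role names in the example register are hypothetical.

```python
from typing import Dict, List

# The five decisions listed above, as short keys (the key names are illustrative).
REQUIRED_DECISIONS = [
    "define_acceptable_data_categories",
    "review_high_risk_inputs",
    "monitor_boundary_violations",
    "evaluate_vendor_training_policies",
    "suspend_system_use",
]


def unowned_decisions(owners: Dict[str, str]) -> List[str]:
    """Return the decisions that have no named owner."""
    return [d for d in REQUIRED_DECISIONS if not owners.get(d, "").strip()]


# Example register with hypothetical roles; two decisions have no owner.
register = {
    "define_acceptable_data_categories": "data-governance-lead",
    "review_high_risk_inputs": "security-reviewer",
    "monitor_boundary_violations": "",
    "evaluate_vendor_training_policies": "procurement-lead",
}

gaps = unowned_decisions(register)
```

Here `gaps` lists the monitoring and suspension decisions, one because its owner is blank and the other because it is missing from the register entirely.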

Advanced Privacy Techniques — In Context

Differential privacy reduces statistical leakage risk. Federated learning limits centralized exposure. Homomorphic encryption allows computation on data that remains encrypted. Synthetic data reduces dependency on real records.

All are valuable. None compensate for:

- Undefined escalation paths

- Absent data classification

- Poor access governance

- No rollback criteria

Technology can reduce risk magnitude.

It cannot correct structural negligence.

Common Failure Patterns

Across AI deployments, recurring patterns appear:

- Data classification policies exist but are not enforced at input level

- AI tools are introduced before risk ownership is assigned

- Security teams review after integration, not before

- Output validation is assumed rather than structured

- Default vendor retention settings remain unchanged

These are governance failures — not cryptographic failures.
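The first pattern, a classification policy that is never enforced at input level, can be made concrete with a gate that runs before a prompt leaves the organization. The sketch below is illustrative only: the two patterns and the function name `check_input` are assumptions, and real classification needs far more than regular expressions.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a production gate would use the organization's
# own classification labels and a proper detection service.
BLOCK_PATTERNS = {
    "classification_banner": re.compile(r"\b(CONFIDENTIAL|RESTRICTED)\b"),
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
}


def check_input(prompt: str) -> Tuple[bool, List[str]]:
    """Return (allowed, reasons). Any match blocks the prompt before it is sent."""
    reasons = [name for name, pattern in BLOCK_PATTERNS.items() if pattern.search(prompt)]
    return (not reasons, reasons)
```

A call such as `check_input("CONFIDENTIAL: Q3 forecast")` returns a blocked result with the matching reason, which gives the reviewing owner something specific to act on.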

Operational Implications

If deploying AI in environments handling sensitive data:

- Define explicit data boundary policies before rollout

- Implement role-based AI access tiers

- Log AI interactions in auditable systems

- Review vendor data retention and training policies

- Establish stop criteria for misuse events
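Three of these steps (access tiers, auditable logging, and stop criteria) can be sketched together in a small gateway. Everything named here is an assumption for illustration: the tiers, the role mapping, the `AIGateway` class, and the choice to suspend after a fixed number of denied requests. Vendor policy review is a human task and is not modeled.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List

# Hypothetical tiers: the highest data level each role may submit.
LEVELS = ["public", "internal", "confidential"]
ROLE_TIER = {"contractor": "public", "employee": "internal", "analyst": "confidential"}


@dataclass
class AIGateway:
    stop_after: int = 3   # stop criterion: denied requests before suspension
    audit_log: List[Dict[str, str]] = field(default_factory=list)
    violations: int = 0
    suspended: bool = False

    def submit(self, user: str, role: str, data_level: str, prompt: str) -> bool:
        """Return True if the request may proceed; always write an audit entry."""
        if self.suspended:
            outcome = "suspended"
        else:
            tier = ROLE_TIER.get(role)  # unknown roles get no access
            permitted = (
                tier is not None
                and data_level in LEVELS
                and LEVELS.index(data_level) <= LEVELS.index(tier)
            )
            if permitted:
                outcome = "allowed"
            else:
                outcome = "denied"
                self.violations += 1
                if self.violations >= self.stop_after:
                    self.suspended = True
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "data_level": data_level,
            # Store a digest, not the prompt, so the log itself holds no personal data.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "outcome": outcome,
        })
        return outcome == "allowed"
```

Logging a digest rather than the raw prompt is a deliberate choice: it keeps the audit trail verifiable without turning the log into another store of sensitive inputs. Once suspended, the gateway refuses every request until someone with authority resets it, which is the point of a stop criterion.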

Privacy architecture must be defined before scale.

AI data protection rarely fails at the firewall. It fails at the decision layer.

Encryption, masking, and privacy-preserving computation reduce risk surface. Governance determines whether that surface expands.

In production environments, governance architecture must precede technical protection.
