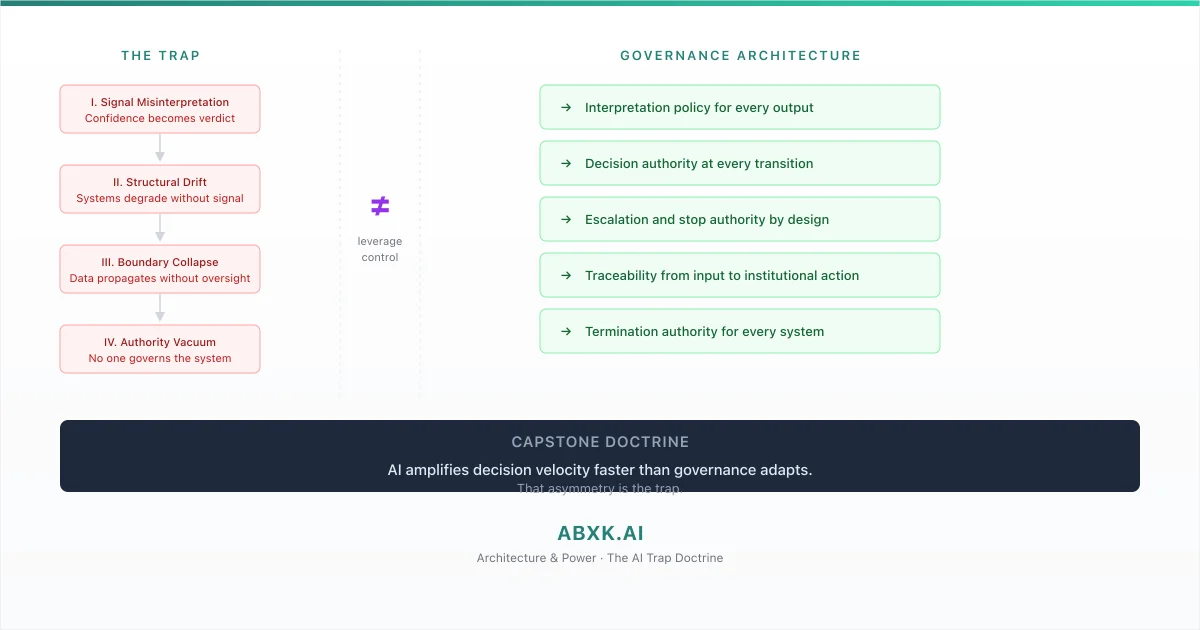

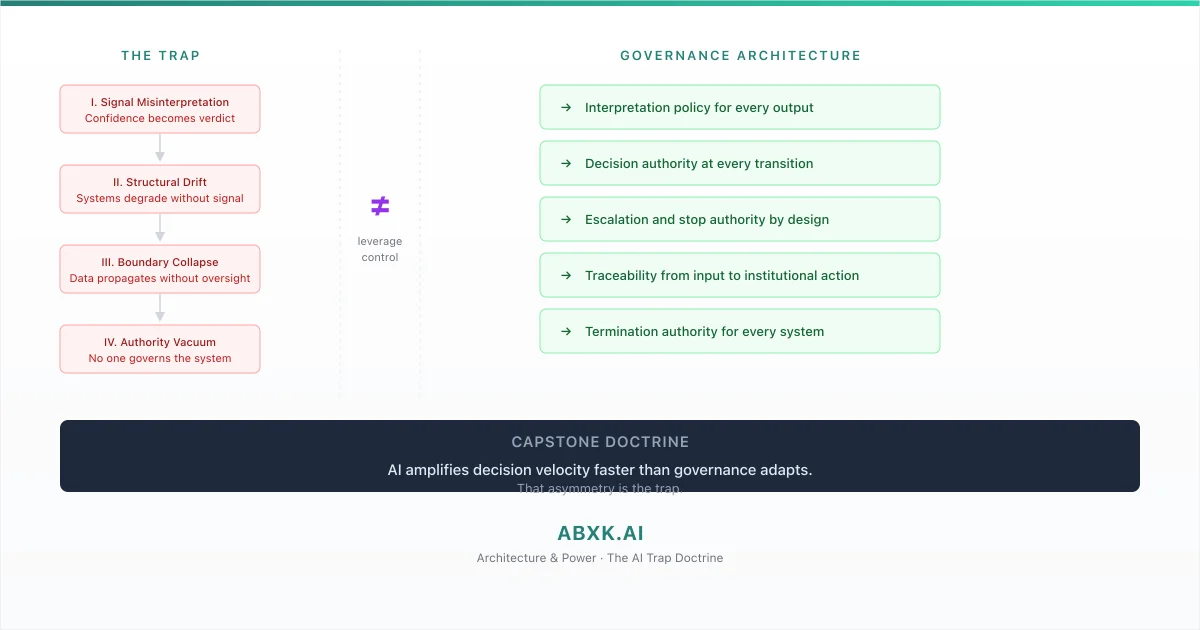

The AI Trap: When Technical Leverage Outpaces Structural Control

AI systems do not fail because the technology is inadequate. They fail because organizations deploy technical capabilities that exceed their structural capacity …

Architecture frameworks for governing and securing AI systems in production environments.

Built for engineers, architects, and technical leaders responsible for systems operating in production environments.

ABXK architecture frameworks analyze how complex systems interact with data flows, security boundaries, and decision authority in production environments.

Defines how decisions propagate through AI systems, including escalation paths, approval layers, and control points.

Defines who can approve, override, escalate, or stop automated decisions.

Defines data boundaries, automation limits, and human override mechanisms across interconnected systems.

Identifies structural risks introduced by AI integration, including scale effects, model drift, and fragmented accountability.

Analyzes how AI systems expand attack surfaces across data pipelines, enterprise infrastructure, and integration boundaries.

The goal is not to limit AI, but to ensure it remains governed as it scales.

AI systems amplify decisions and data flows across organizations. When governance is unclear or system boundaries are poorly defined, structural risk emerges:

- Responsibility diffuses across teams and systems.
- Systems expand beyond original intent.
- Evaluation criteria erode under pressure.
- Rollback and control become difficult.

In production environments, governance must be deliberate.

Structure prevents long-term risk exposure.

ABXK publishes architecture doctrine explaining the frameworks required to govern and secure AI systems in production environments.

Applied AI Governance Doctrine

An architecture framework for governing AI systems in production environments.

Weak governance scales risk faster than model errors.

Built for leaders accountable for AI initiatives in production.

The AI Trap

Applied AI Security Architecture

An architecture framework for securing AI systems in production environments.

AI systems fail at their boundaries before they fail at their models.

Built for security teams and AI architects working with deployed systems.

AI Security

Briefings are public excerpts of the architecture doctrine, written for teams operating AI systems under real accountability.

Each briefing addresses structural weaknesses observed in real deployments.

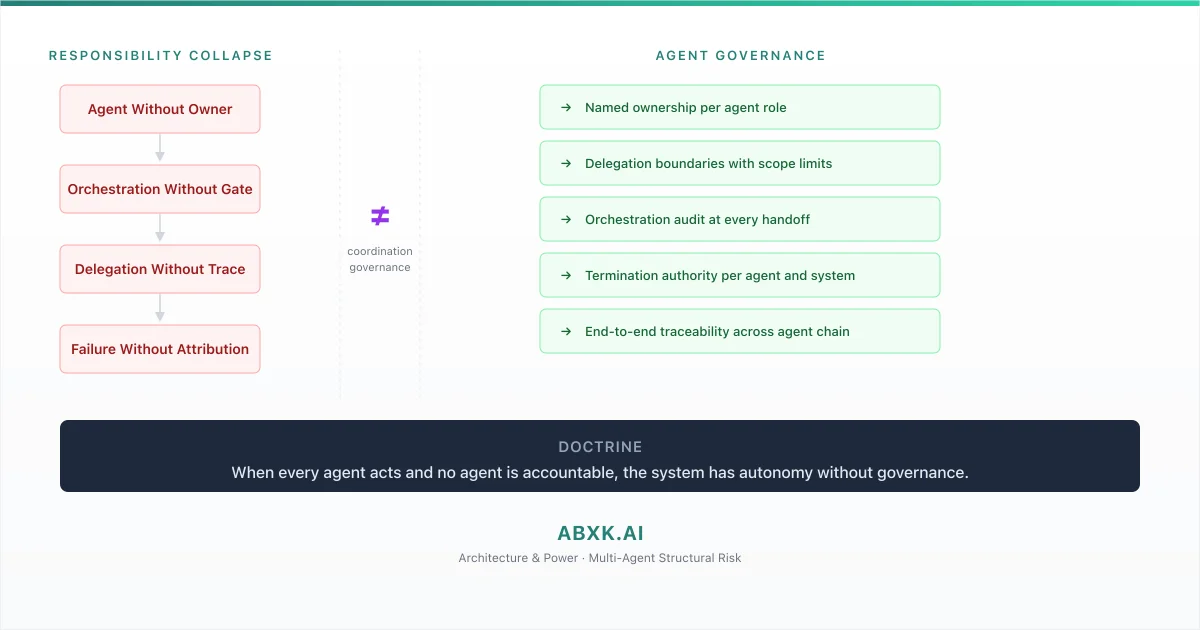

Multi-agent AI systems represent an architectural shift in how organizations deploy automated decision-making. Instead of a single model producing a single …

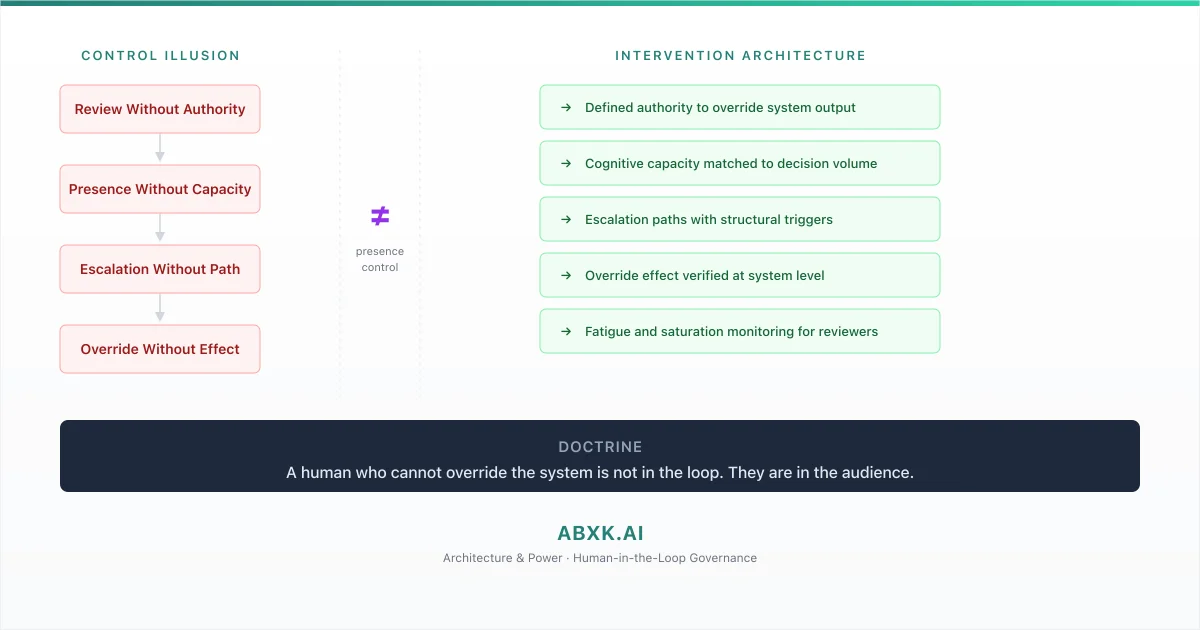

Organizations deploy AI systems with human reviewers positioned at critical decision points. A compliance analyst reviews AI-generated risk assessments. A …

ABXK.AI develops architecture frameworks and doctrine for governing and securing AI systems in production environments.

The work examines how AI systems interact with data flows, security boundaries, and decision authority at scale.

AI creates leverage.

Data expands exposure.

Security defines boundaries.

Governance assigns control.

If you are deploying AI systems in production, governance is not optional.